Most people use a “Turnitin pre-check” for one of two reasons:

- They want to fix similarity issues (citations, quotations, accidental patchwriting) before the deadline.

- They want to avoid an AI-writing flag, especially when their writing is polished, formulaic, or AI-assisted.

The problem is that “pre-check” can mean several very different workflows. In some cases it genuinely reduces risk. In others it can create new risk, especially if your draft gets stored in a repository and your final submission later matches against it.

Below is a practical, 2026-ready breakdown of when Turnitin pre-checks help, when they can backfire, and how to do them safely.

What counts as a “Turnitin pre-check” (and why it matters)

People use the phrase loosely, but your risk changes a lot depending on which scenario you are in.

Scenario A: A draft submission inside your class Turnitin inbox

Many instructors enable multiple submissions before the due date. In these setups, you upload a draft, view the report, revise, then resubmit.

This is usually the lowest-risk form of “pre-check” because your instructor can see your drafting process, and the workflow is explicitly part of the assignment setup.

Scenario B: An institution-provided self-check (writing center or library)

Some schools provide a separate portal that lets students check drafts. Policies and storage settings vary.

This can be safe and useful, but only if you know:

- whether your draft is stored in a repository

- whether that repository is shared across courses

- whether the final submission will be checked against the stored draft

Scenario C: A third-party “Turnitin check” service

These are the sites or sellers that claim they can run Turnitin for you. You send them your draft, and they send you screenshots.

This is the highest-risk scenario because you do not control storage, privacy, or whether your text is reused elsewhere.

Scenario D: “I’ll upload it to a friend’s class”

This is functionally the same as Scenario C from a risk perspective. It can also raise academic-integrity questions, even if your intent is only to verify similarity.

Do Turnitin pre-checks reduce AI-flag risk or raise it?

The short reality: a pre-check itself does not “tag” your writing as AI

Turnitin’s AI-writing indicator is based on linguistic patterns and model judgments, not on a simple counter like “number of uploads.” Uploading a document once versus twice does not automatically make the AI indicator go up.

However, pre-checks can still increase the chance of scrutiny in two indirect ways:

- Your similarity report can change dramatically if your earlier upload was stored and has become a matching source.

- Your workflow can look suspicious if your submission history is inconsistent (especially if drafts are uploaded in odd places or through third parties).

So the more accurate question is:

Do Turnitin pre-checks reduce risk or raise it in your specific submission setup?

The bigger risk is usually the similarity report, not the AI indicator

When people get burned by pre-checks, it is often because of self-matching.

If a draft is stored in a repository, then your final submission may match your earlier draft. This can produce:

- a higher similarity percentage

- large highlighted blocks that look like copying

- “paper to paper” matches that an instructor notices even if the wording is your own

Whether this happens depends on settings controlled by the institution (and sometimes by the instructor).

Why this can feel unfair

From a student perspective, matching your own earlier draft looks pointless. From Turnitin’s perspective, it is doing what it is designed to do: compare a submission against available sources.

This is why “pre-check” safety is less about AI and more about where your text gets stored.

| Pre-check method | Main benefit | Main risk | Risk level (typical) |
|---|---|---|---|
| Draft submissions in your own course inbox | Transparent drafting, quick revision loop | Similarity can fluctuate between resubmissions | Low |
| Institution self-check portal | Useful practice and coaching | Storage settings may cause self-match later | Medium |
| Friend’s class upload | Fast access | Self-match plus policy issues | High |
| Third-party “Turnitin report” seller | Convenience | Storage, privacy, resale of your text, unpredictable self-matches | Very high |

When Turnitin pre-checks genuinely reduce risk

A pre-check is most helpful when it is used to improve academic practice, not to chase a specific percentage.

1) You catch citation and quotation problems early

Similarity spikes often come from fixable issues:

- missing quotation marks

- citations formatted incorrectly

- bibliography problems

- copied definitions or background sections that should be paraphrased with sources

Fixing these before submission reduces the chance your instructor interprets the report as carelessness.

2) You spot “template writing” that looks machine-like

Even human writing can trigger AI suspicion if it is overly uniform.

Common examples include:

- paragraphs with identical structure

- repetitive transitions (“Additionally, Furthermore, Moreover”)

- sentences that are all similar length

- overly neutral tone with no assignment-specific detail

If you want a deeper explanation of why normal writing can get flagged, see our guide on normal writing habits that can trigger Turnitin AI flags.

3) You learn what your instructor will actually see

Many students assume Turnitin returns a single “truth score.” In practice, instructors interpret a report in context.

A draft check can help you:

- understand what gets highlighted

- prepare to explain legitimate matches

- tighten your citations and research trail

When pre-checks can increase risk (and why)

1) Your draft gets stored and becomes a source against you

This is the classic pre-check backfire.

If your earlier draft is stored, your final version can match it. Even if the match is “you,” it can:

- inflate similarity

- look like duplication

- trigger extra review

If you are unsure about whether your submissions are being stored, ask your instructor or writing center directly. It is a normal question, and it prevents avoidable problems.

2) You use untrusted “Turnitin access” services

Beyond academic policy issues, there are practical risks:

- you do not know where your file is saved

- you do not know whether it is uploaded into a database

- you cannot confirm the screenshots are authentic

- your text could be reused (which can create new similarity matches later)

If your goal is to reduce false alarms and improve writing quality, it is safer to use transparent tools and keep your own drafting evidence.

3) You optimize for the score instead of authorship proof

A risky pattern is repeatedly rewriting until the indicator changes, without improving the underlying work.

In an academic integrity conversation, the strongest defense is usually:

- drafts and outlines

- version history

- source notes and reading trail

- the ability to explain choices and argumentation

If you want to build that defense, version history helps, but it is not magic. This article breaks down what it can and cannot prove: Is Google Docs or Word version history enough as proof?

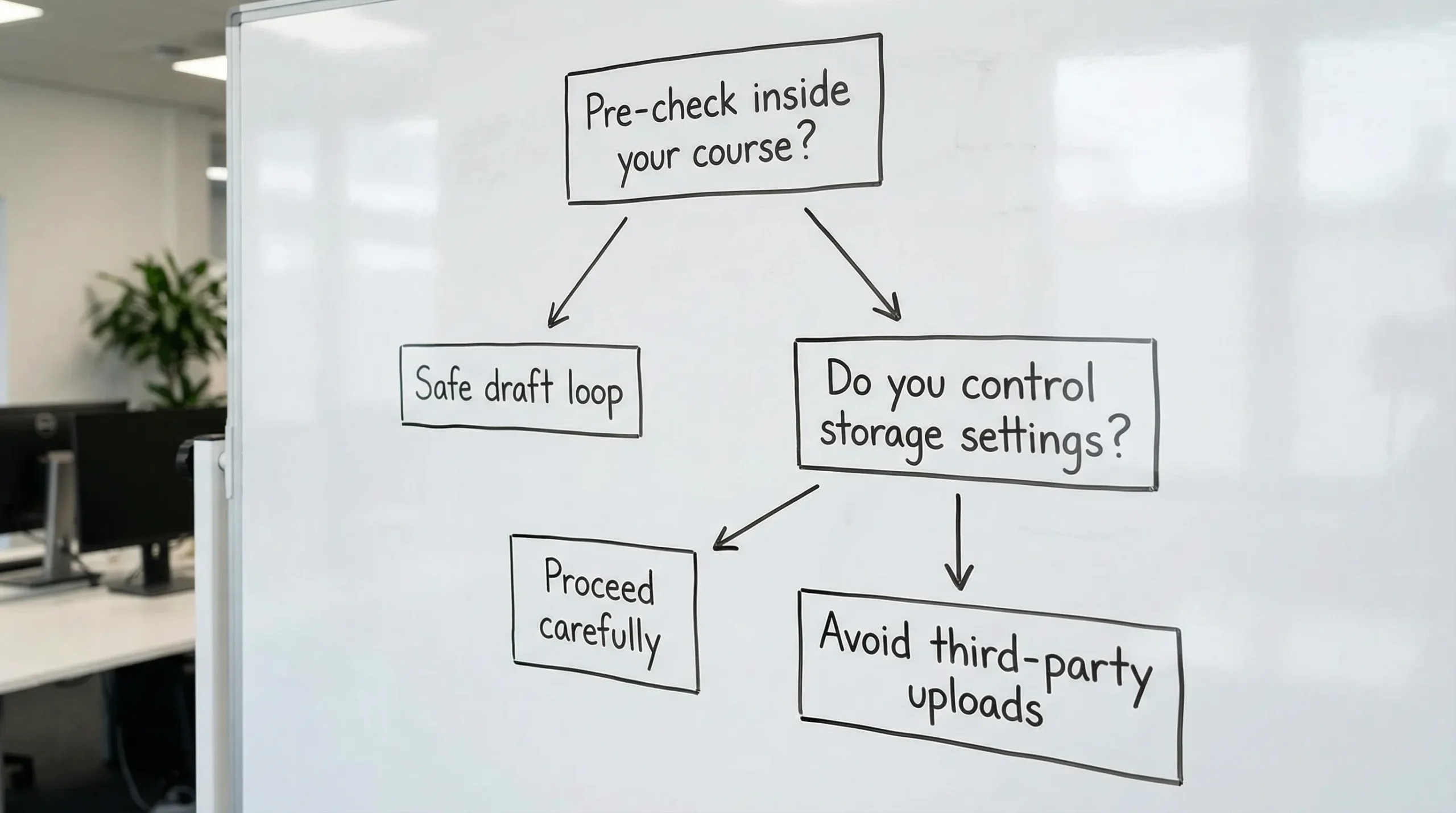

A safer decision framework (use this before you pre-check)

Use this checklist to decide whether a Turnitin pre-check is likely to help or hurt.

Step 1: Are you pre-checking inside your own assignment?

If yes, it is usually safe.

If no, go to Step 2.

Step 2: Do you control storage settings (or can you confirm them)?

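If you cannot confirm storage, treat it as medium to high risk. If you can confirm that drafts are not stored, or that your final submission will not be checked against them, the self-match risk is much lower.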

Step 3: Is your goal quality control or “beating” the detector?

Quality control tends to reduce risk. Score-chasing tends to increase risk because it produces unnatural edits and leaves you with less defensible process evidence.

What to do instead of risky pre-checks (practical, policy-safe)

If you are worried about AI flags, your best move is usually to make your work more defensible, not merely “less detectable.”

Strengthen your authorship signals

Do these before you submit:

- Add assignment-specific details (course concepts, local examples, your dataset, your case study)

- Include a short reflection paragraph that only you could write (what surprised you, what changed your mind)

- Vary sentence rhythm (mix short and long sentences naturally)

- Reduce “generic filler” (broad claims with no evidence)

Keep an authorship packet

If a flag happens, evidence beats arguments. Save:

- your outline and research notes

- early drafts (even messy ones)

- screenshots or exports of version history

- a short “process memo” (5 to 10 bullet points) describing how you wrote it

If you are already in trouble, this guide is designed for the first day after an accusation: Accused of AI use: what to do in the next 24 hours.

Use tools responsibly (and don’t confuse “human-like” with “honest”)

There are legitimate reasons to rewrite text that triggers false positives (especially for ESL writers, or for writing that has been heavily grammar-polished). The key is to stay within your institution’s policy.

Detection Drama focuses on analysis and rewriting that aims to make writing read naturally, paired with reports and guides so you can understand why a passage looks suspicious in the first place.

If you are an educator or organization trying to adopt AI tools responsibly (policy, training, workflow audits), a specialist partner can be more effective than ad hoc decisions. For that angle, see AI audits and training from Impulse Lab.

So, do Turnitin pre-checks reduce risk or raise it?

They reduce risk when:

- the pre-check is built into your course workflow

- you understand storage and resubmission rules

- you use the report to improve citations, specificity, and clarity

They raise risk when:

- your draft gets stored somewhere you cannot control

- you rely on third-party “Turnitin report” services

- you keep rewriting purely to manipulate an indicator

If you remember one rule: Never upload your draft to a place you do not trust or control.

Frequently Asked Questions

Do multiple Turnitin submissions increase the AI score?

Not automatically. The AI indicator is based on text features, not on how many times you uploaded. Scores can still change if your text changes, your formatting changes, or Turnitin updates its models.

Can a pre-check cause self-plagiarism in the similarity report?

Yes, if your earlier draft is stored and then used as a matching source for your final submission. This depends on institutional and instructor settings.

Is it safe to use a friend’s Turnitin class to pre-check?

It is risky. Your draft may be stored, it can create self-matches later, and it may violate course or institutional rules.

Why does my similarity percentage change between submissions?

Similarity is sensitive to report settings (filters, excluded sources), to what has been added to the repository since your last scan, and to which sources are available at the time of the scan.

If I get flagged for AI, should I rewrite until the percentage drops?

Usually no. It is safer to preserve evidence, improve clarity and specificity, and be ready to explain your process. Chasing a number can create awkward text and reduce defensibility.

What is the safest way to “pre-check” if I am worried?

Use your course’s official draft submission option if available, keep drafts and version history, and focus on improving citations and assignment-specific content.

Try a safer workflow with Detection Drama

If you need a low-friction way to analyze why your text looks AI-like, Detection Drama provides free guides, instant tools (no email required), and authenticity-style checks designed to help you revise responsibly.

Explore the site’s resources and try the free humanizer tool at Detection Drama to refine drafts, reduce false-positive triggers, and submit work that is easier to defend.