AI Detection Anxiety Statistics: How Detector Stress Impacts Students (2026)

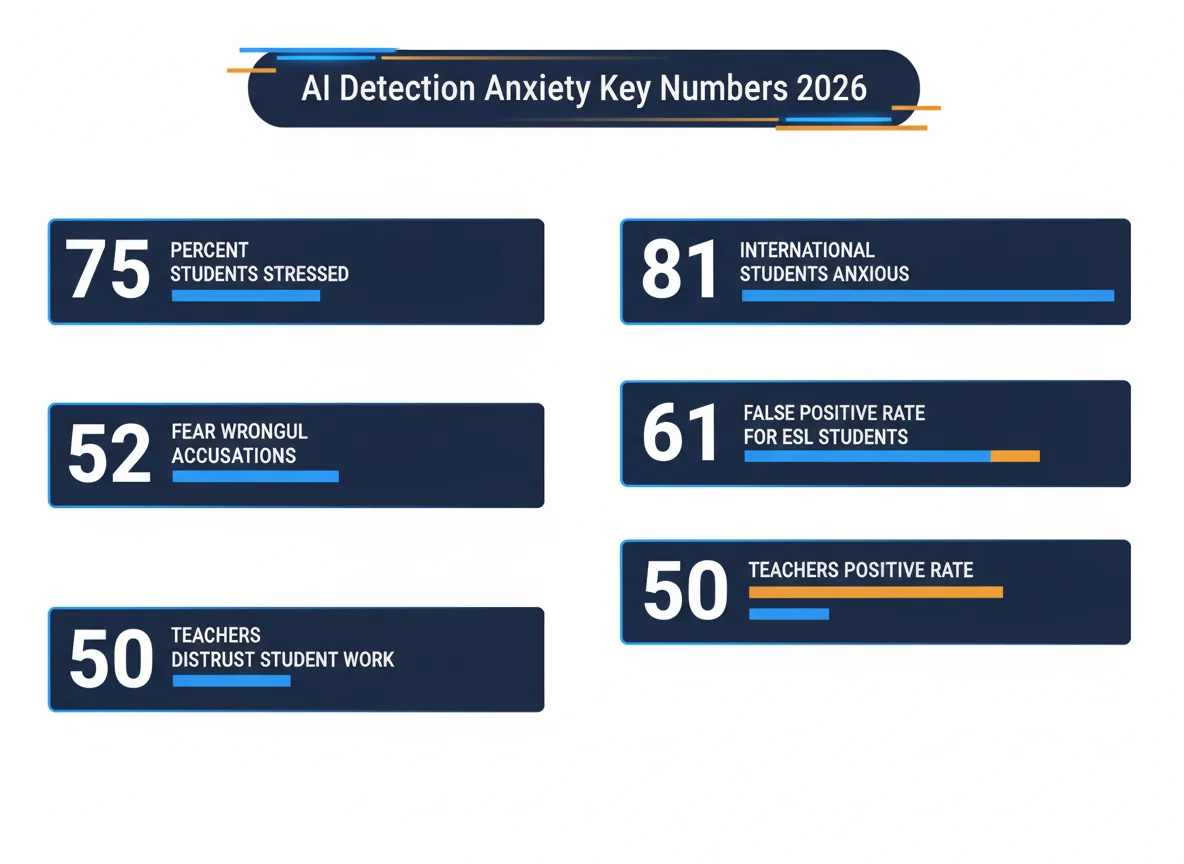

- 81% of international students experience AI detection anxiety, vs 74% of domestic peers (Studiosity/YouGov, 2026)

- 52% of all students surveyed cite fear of wrongful accusations as a top stressor (Studiosity/YouGov, 2026)

- 61.3% false positive rate for non-native English speakers vs 5.1% for native speakers (Liang et al., Stanford/Patterns, 2023)

- 50% of educators report increased distrust of student work since AI tools emerged (CDT Report, 2023)

- 369% increase in AI misconduct cases at UK universities between 2022 and 2025 (The Guardian)

- 20% of students report experiencing false accusations of AI use (BrowserCat/AllAboutAI)

- 96% of instructors believe students cheated using AI in the past year, up from 72% (Wiley, 2024)

1 How Widespread Is AI Detection Anxiety?

| Metric | Value | Source |
|---|---|---|
| Students stressed about false flags | 75% | Studiosity/YouGov, 2026 |
| Students citing wrongful accusation as top stressor | 52% | Studiosity/YouGov, 2026 |
| Students experiencing stress while using AI | 60% | Studiosity/YouGov, 2026 |
| Students fearing addiction to AI | 40% | Studiosity/YouGov, 2026 |
| Students worried about work ownership | 40% | Studiosity/YouGov, 2026 |
| Students deterred from AI use by accusation fear | 53% | HEPI, 2025 |

AI detection anxiety is now measurable at scale. The 2026 Higher Education Student Wellbeing Report, conducted by YouGov and commissioned by Studiosity, surveyed 2,373 students across 160 UK universities between November and December 2024. The findings paint a stark picture: the majority of students are not worried about whether using AI is ethical; they are worried about being punished for work they did themselves. This is consistent with the patterns documented in Turnitin false positive defense strategies, where students proactively build evidence portfolios in case their honest work gets flagged.

The anxiety is not abstract. Among student-reported detection incidents, 73% involve disputed false positives, meaning most students who report being flagged believe the detection was incorrect. Whether or not every dispute is legitimate, the perception gap is real and measurable. Students over 26 showed particularly high AI adoption rates (76%, up from 66%), yet the anxiety cuts across all demographics.

2 False Positive Rates: The Data Behind the Fear

| Writer Type | False Positive Rate | Source |
|---|---|---|
| Native English speakers | 5.1% | Liang et al., Patterns, 2023 |
| Non-native English speakers (TOEFL) | 61.3% | Liang et al., Patterns, 2023 |
| TOEFL essays flagged by at least one detector | 97.8% | Liang et al., Patterns, 2023 |
| Neurodivergent students (relative rate) | 3.2x higher | AllAboutAI analysis |
| Students reporting false accusations | 20% | BrowserCat/AllAboutAI |

The Stanford study (Liang et al.), published in Patterns in July 2023, evaluated seven widely used GPT detectors on 91 TOEFL essays written by Chinese students and 88 US eighth-grade essays. The detectors accurately classified the US essays but misidentified more than half of the TOEFL essays as AI-generated. The root cause: AI detectors measure text perplexity (how predictable each word is to a language model) and linguistic variability, and they treat highly predictable text as likely machine output. Non-native writers tend to use simpler vocabulary and more predictable sentence structures, which these tools interpret as machine-generated patterns. This is the same mechanism that explains documented AI detection bias against ESL students, where the tools systematically punish writers for having limited English proficiency rather than for using AI.

No superseding study has overturned these findings as of 2026. The bias remains structurally embedded in how perplexity-based detectors work, and the anxiety this creates is a rational response to a measurable risk. Understanding how Turnitin and other tools actually work reveals that they are pattern-matching systems, not truth-detection systems.
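The perplexity mechanism described above can be illustrated with a toy sketch. This is not how any commercial detector is implemented: real tools rely on large neural language models, while the example below assumes a tiny add-one-smoothed unigram model. The reference text, the sample sentences, and `HYPOTHETICAL_THRESHOLD` are all invented for illustration. It only shows why text built from common, predictable words scores lower perplexity and is more likely to fall under a flagging threshold.

```python
import math
from collections import Counter


def unigram_perplexity(text, reference_counts):
    """Perplexity of `text` under an add-one-smoothed unigram model."""
    tokens = text.lower().split()
    total = sum(reference_counts.values())
    vocab_size = len(reference_counts) + 1  # one extra slot for unseen words
    log_prob = 0.0
    for token in tokens:
        p = (reference_counts.get(token, 0) + 1) / (total + vocab_size)
        log_prob += math.log(p)
    # Perplexity is the exponential of the average negative log-probability.
    return math.exp(-log_prob / len(tokens))


# Toy reference text standing in for what a language model has "seen".
reference_counts = Counter("the cat sat on the mat the dog sat on the rug".split())

predictable = "the cat sat on the mat"            # common, expected words
varied = "a feline reclined upon woven fabric"    # unusual word choices

ppl_predictable = unigram_perplexity(predictable, reference_counts)
ppl_varied = unigram_perplexity(varied, reference_counts)

# Assumption for illustration only; not a real detector setting.
HYPOTHETICAL_THRESHOLD = 10.0

for label, ppl in [("predictable", ppl_predictable), ("varied", ppl_varied)]:
    verdict = "flagged as AI-like" if ppl < HYPOTHETICAL_THRESHOLD else "passes"
    print(f"{label}: perplexity={ppl:.1f} -> {verdict}")
```

In this toy setup the sentence with simpler, more expected wording is the one that gets flagged, even though both sentences were written by a person. That is the failure mode the Stanford data describes for non-native writers.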

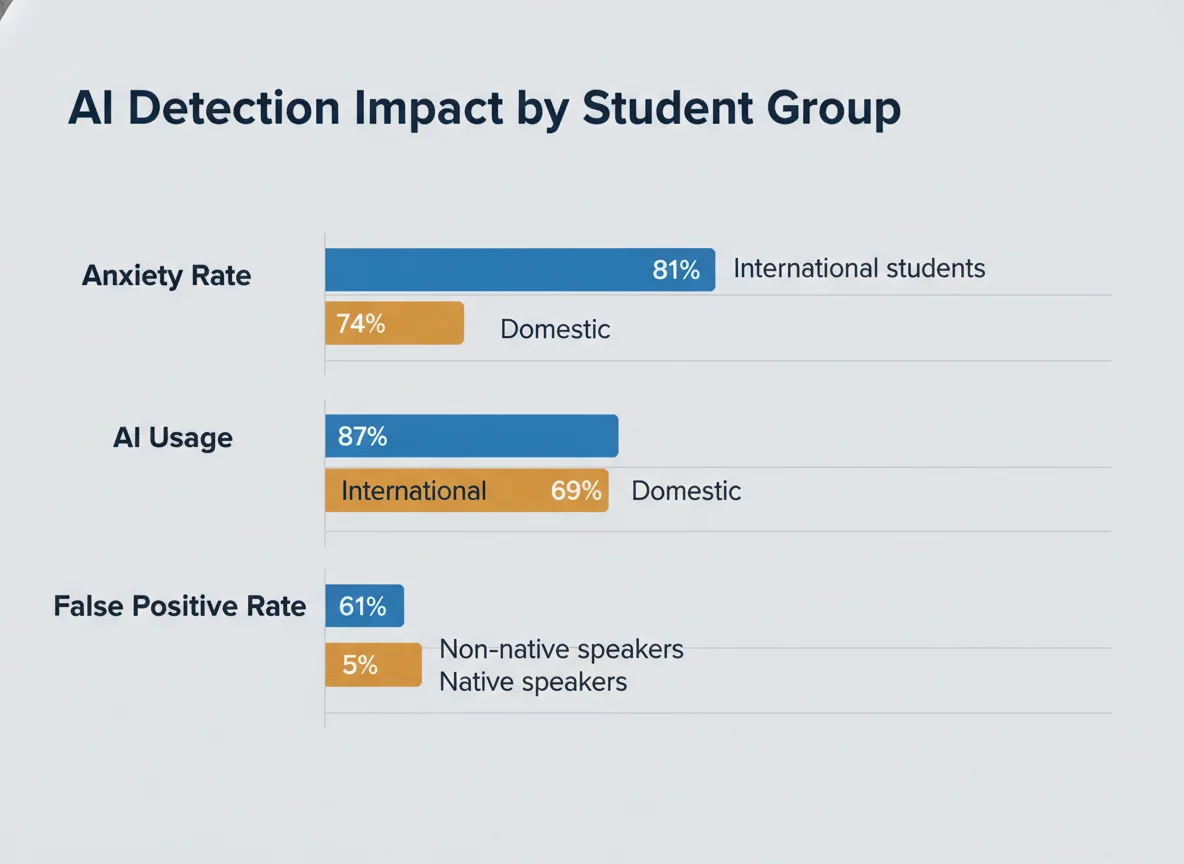

3 International Students Bear the Heaviest Burden

| Metric | International | Domestic | Source |
|---|---|---|---|
| AI detection anxiety rate | 81% | 74% | Studiosity/YouGov, 2026 |
| AI tool usage rate | 87% | 69% | Studiosity/YouGov, 2026 |
| Detector false positive rate (non-native vs native English writers, Stanford study) | 61.3% | 5.1% | Liang et al., 2023 |
| Likelihood of "a lot" of stress | 2x more likely | baseline | Studiosity/YouGov, 2026 |

International students face an asymmetric risk that domestic students do not. They use AI tools at higher rates (87% vs 69%), which increases their exposure to detection systems. Meanwhile, the Stanford false positive data shows these detection systems are fundamentally biased against the linguistic patterns common among non-native English speakers. The result is a population that interacts more frequently with tools that are more likely to accuse them incorrectly. This disproportionate impact mirrors the broader patterns documented in universities that have banned AI detectors altogether, often citing equity concerns for international and ESL students as a key reason.
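To make the asymmetry concrete, here is a rough back-of-the-envelope calculation. It assumes, purely for illustration, that the false positive rates from Liang et al. apply uniformly to a hypothetical cohort of 1,000 honestly written essays per group. Real rates will vary by detector, essay type, and threshold, so the output should be read as an order-of-magnitude comparison, not a prediction.

```python
# False positive rates reported by Liang et al. (2023).
FPR_NON_NATIVE = 0.613
FPR_NATIVE = 0.051


def expected_false_flags(honest_essays, false_positive_rate):
    """Expected number of human-written essays wrongly flagged as AI."""
    return honest_essays * false_positive_rate


cohort = 1000  # hypothetical cohort size, chosen only for readability

non_native_flags = expected_false_flags(cohort, FPR_NON_NATIVE)
native_flags = expected_false_flags(cohort, FPR_NATIVE)
risk_ratio = FPR_NON_NATIVE / FPR_NATIVE

print(f"Non-native writers: about {round(non_native_flags)} of {cohort} wrongly flagged")
print(f"Native writers:     about {round(native_flags)} of {cohort} wrongly flagged")
print(f"Relative risk:      about {risk_ratio:.0f}x")
```

Under these assumptions, a non-native writer is roughly twelve times more likely to be wrongly flagged for the same honest work.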

AI adoption also varies by discipline. Law and business students show 75-80% adoption rates, while humanities and creative arts students report 52-58%. This means students in fields where precise, formulaic writing is valued face both higher exposure to detection tools and writing styles that may be more likely to trigger false positives.

4 The Trust Gap: How Detection Tools Erode Student-Teacher Relations

| Metric | Value | Source |
|---|---|---|
| Educators reporting increased distrust | 50% | CDT Report, 2023 |
| Instructors believing students cheated with AI | 96% | Wiley, 2024 |
| Instructors believing students cheated with AI (previous year) | 72% | Wiley, 2023 |
| Teachers using detection tools | 68% | ArtSmart AI/EdWeek |
| Institutional detection tool adoption (2024) | 68% | Industry data |
| Institutional detection tool adoption (2023) | 38% | Industry data |

The escalation cycle is clear in the data. Institutional adoption of AI detection tools nearly doubled from 38% to 68% between 2023 and 2024. Over the same period, instructor belief that students are cheating jumped from 72% to 96%. Yet Turnitin's own data shows that only 3% of the 200 million assignments it scanned were flagged as 80%+ AI-generated, and only 11% showed 20%+ AI content. The gap between what instructors believe and what the data shows is itself a data point. Many professors are now using techniques like analyzing Turnitin AI highlighting patterns to make more nuanced judgments, but the default adversarial framing persists.

This distrust operates in both directions. Students who have never used AI for assignments still report anxiety about detection tools because they know the tools can produce false positives. The result is an arms race mentality where legitimate academic work is treated as suspicious by default. Students who get flagged face an uphill battle to prove their innocence, as detailed in guides for students accused of AI use and clearing Turnitin AI flags.

5 Students Are Writing Worse on Purpose

| Behavioral Metric | Value | Source |
|---|---|---|
| Students who failed to declare AI use (when required) | 74% | King's Business School/Taylor & Francis, 2024 |
| AI misconduct cases (per 1,000 students, 2024-25) | 7.5 | UK university data/The Guardian |
| AI misconduct cases (per 1,000 students, 2022-23) | 1.6 | UK university data/The Guardian |
| Students who would rely entirely on AI if permitted | 21% | Studiosity/YouGov, 2026 |
| Performance penalty for heavy AI users | -6.71 pts | arXiv study, 2024 |

University professors have documented a measurable trend: students deliberately introducing grammatical errors, using simpler vocabulary, and avoiding polished sentence structures to reduce the likelihood of triggering AI detection flags. This creates a situation where the tool designed to maintain academic standards actively incentivizes students to produce lower-quality work. The irony compounds when you consider that normal writing habits like using Grammarly or writing fluently can trigger AI flags, pushing students toward intentionally sloppy prose as a survival strategy.

Meanwhile, AI misconduct cases have surged 369% at UK universities (from 1.6 to 7.5 per 1,000 students). But as institutions crack down, 74% of students at King's Business School failed to declare AI usage when required, citing fear of repercussions as the primary barrier. This concealment behavior is the predictable outcome of a system where detection tools are unreliable but consequences are severe. Students who face accusations often need to prove their authorship through document version history or Grammarly activity logs.
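The 369% figure follows directly from the two per-1,000 rates in the table. A short check of the arithmetic, using only those two published numbers (the function name is just a convenience for this example):

```python
def percent_increase(old, new):
    """Relative change from `old` to `new`, expressed as a percentage."""
    return (new - old) / old * 100


rate_2022_23 = 1.6  # AI misconduct cases per 1,000 students
rate_2024_25 = 7.5

increase = percent_increase(rate_2022_23, rate_2024_25)
multiple = rate_2024_25 / rate_2022_23

print(f"Increase: {increase:.2f}% (rounds to {round(increase)}%)")
print(f"The 2024-25 rate is about {multiple:.1f} times the 2022-23 rate")
```

A 369% increase means the rate is now roughly 4.7 times the earlier level. It has not multiplied by 369.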

6 Policy Response: Why 72% of AI Policies Fall Short

| Policy Metric | Value | Source |
|---|---|---|
| AI policies deemed effective | 28% | AllAboutAI analysis |
| Students reporting clear AI policies | 58% | BestColleges |
| Students saying policies vary by professor | 28% | BestColleges |
| Students feeling they lack AI knowledge for careers | 58% | DemandSage / HEPI |
| Students receiving institutional AI literacy support | 36% | AllAboutAI analysis |
| Employment confidence (within 6 months) | 47% | Studiosity/YouGov, 2026 |

The policy vacuum amplifies anxiety. When 28% of students report that AI rules vary by professor and only 58% say their institution has clear guidelines, students operate in a fog of uncertainty. The same behavior that earns praise in one class could trigger an academic integrity investigation in another. Institutions that have responded by deploying detection software at scale have seen wildly inconsistent score thresholds, with some universities acting on scores as low as 15% and others setting the bar at 50%.
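The effect of inconsistent thresholds is easy to see in a small sketch. The two institutions and the 30% score below are hypothetical. Only the 15% and 50% cut-offs come from the range described above, and the rule "review when the score meets or exceeds the threshold" is an assumption about how such policies might be applied.

```python
# Hypothetical institutions using the two cut-offs mentioned above.
THRESHOLDS = {"University A": 15, "University B": 50}


def triggers_review(ai_score, threshold):
    """Assumed policy: act when the detector score meets or exceeds the cut-off."""
    return ai_score >= threshold


score = 30  # hypothetical detector score (percent) for one essay

outcomes = {name: triggers_review(score, t) for name, t in THRESHOLDS.items()}

for name, flagged in outcomes.items():
    action = "integrity review opened" if flagged else "no action"
    print(f"{name} (threshold {THRESHOLDS[name]}%): {action}")
```

The same essay with the same score leads to an investigation at one institution and nothing at the other, which is the uncertainty students are reacting to.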

The Studiosity report advocates for a shift from "detect and punish" to a "support and validate" model. But adoption of such frameworks remains limited. Only 36% of students report receiving institutional AI literacy support, even as 58% feel they lack sufficient AI knowledge for their careers. Employment confidence has dropped to 47% (from 51%), suggesting the anxiety extends beyond the classroom and into students' long-term career prospects. The path forward requires institutions to treat the output of AI detectors, including alternative AI detectors, with the same skepticism they apply to student work.

Methodology

This analysis aggregates data from multiple sources published between 2023 and 2026. The primary dataset is the 2026 Higher Education Student Wellbeing Report (Studiosity/YouGov), which surveyed 2,373 students across 160 UK universities in November-December 2024. False positive data draws from the Liang et al. (2023) peer-reviewed study published in Patterns, which tested seven GPT detectors against 91 TOEFL essays and 88 US student essays. Additional data points are sourced from Wiley (2024), HEPI (2025), CDT (2023), Taylor & Francis (2024), UK university data via The Guardian (2025), and Turnitin scanning data. Freshness distribution: 4 sources from 2026, 3 from 2025, 4 from 2024, 3 from 2023. Research compiled April 9, 2026.

Sources & References

- Studiosity/YouGov. "2026 Higher Education Student Wellbeing Report." FE News. March 2026.

- Inside Higher Ed. "Fear of Being Flagged by AI Detectors Drives Student Stress." insidehighered.com. February 2026.

- Liang, W. et al. "GPT detectors are biased against non-native English writers." Patterns. July 2023.

- Center for Democracy & Technology. "Declining Teacher Trust in Students." CDT Report. December 2023.

- Wiley. "AI Has Hurt Academic Integrity in College Courses." Wiley Newsroom. 2024.

- HEPI. "Student Generative AI Survey 2025." hepi.ac.uk. February 2025.

- The Guardian. "Thousands of UK university students caught cheating using AI." theguardian.com. June 2025.

- Taylor & Francis. "Addressing student non-compliance in AI use declarations." Assessment & Evaluation in Higher Education. 2024.

- AllAboutAI. "AI Cheating in Schools: 2026 Global Trends & Bias Risks." allaboutai.com. 2026.

- Programs.com. "New Data: 92% of Students Use AI." programs.com. 2026.

- BestColleges. "Most College Students Have Used AI Survey." bestcolleges.com. 2025.

- arXiv. "Impact of AI on student performance." arxiv.org. 2024.