An accusation of “AI-written work” can feel like a fire alarm, especially when the only “evidence” you’re shown is a detector score from Turnitin or another tool. The next 24 hours matter because this is when you can preserve proof of authorship, clarify what you did (and did not) do, and respond in a way that protects your credibility.

This guide gives you a practical, time-boxed plan you can follow today, whether you used AI responsibly (within policy) or didn’t use it at all.

What an AI accusation usually means (and what it doesn’t)

In most schools and workplaces, an “AI use” accusation falls into one of these buckets:

- A detector flagged your text as likely AI-generated (often shown as a percentage).

- Your writing “sounds like AI” to a reviewer (generic phrasing, flat structure, unusually polished flow).

- Your process looks inconsistent (no drafts, no notes, no version history, last-minute submission).

What it does not mean is that the detector has proven who wrote the text.

AI detectors are statistical classifiers. They look for patterns associated with machine-generated text, not a hidden watermark of who typed the words. Even the best-known tools and vendors acknowledge limitations. For example:

- Turnitin’s announcement of its AI writing detection made strong performance claims but still framed results as an indicator for review, not a courtroom verdict (Turnitin press release, 2023).

- OpenAI discontinued its own AI text classifier due to low accuracy in real-world use (OpenAI update).

So your job in the next 24 hours is to shift the conversation from “the score says X” to “here is verifiable evidence of my writing process and a fair way to evaluate it.”

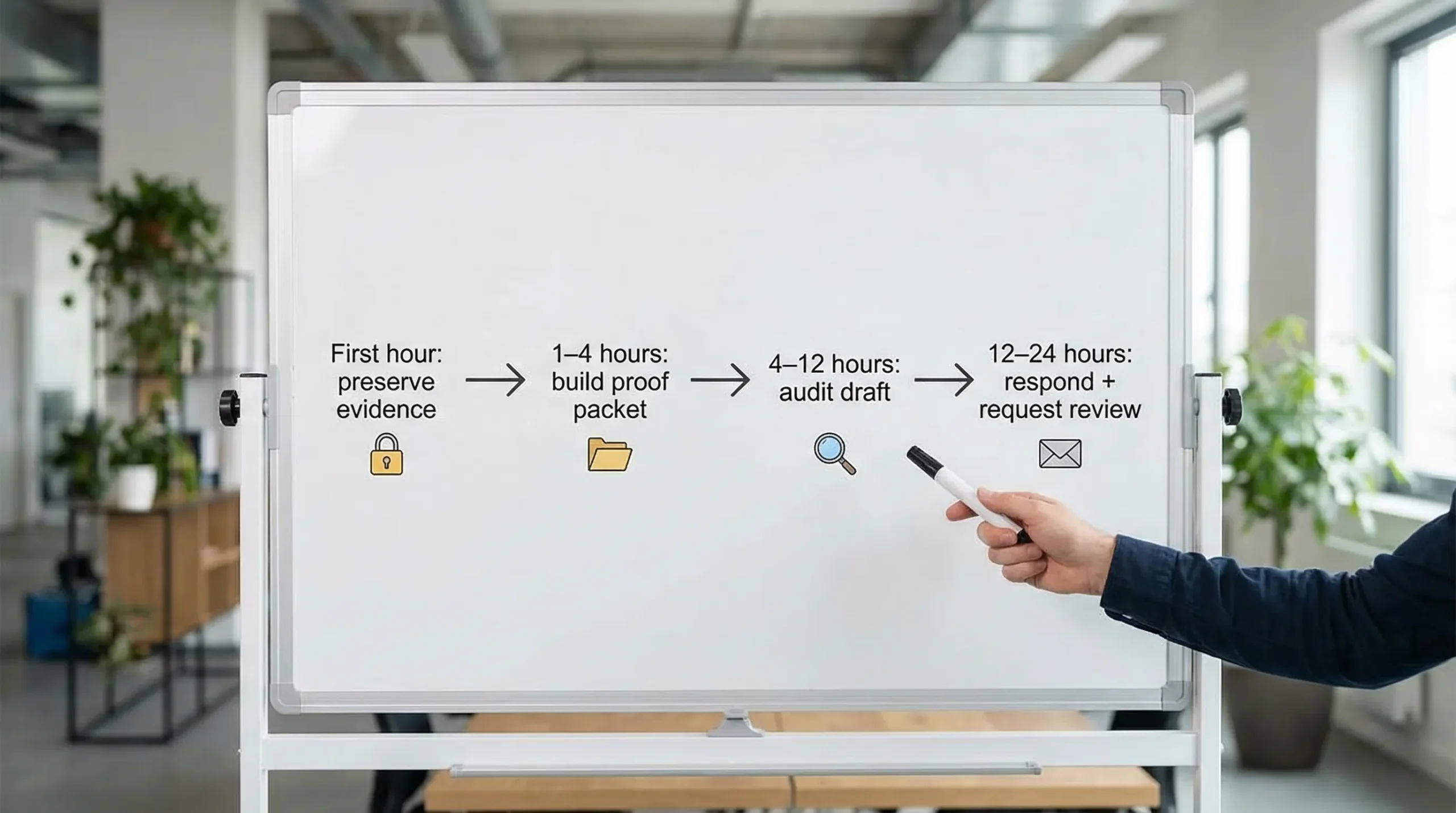

The next 24 hours: a step-by-step action plan

Use the timeline below like a checklist. The goal is to preserve evidence, audit your work, then communicate calmly and specifically.

| Time window | Your priority | What “done” looks like |
|---|---|---|
| First hour | Preserve evidence | Copies saved, version history exported, nothing overwritten |
| Hours 1 to 4 | Build a proof-of-authorship packet | Drafts, notes, sources, timestamps organized in one folder |
| Hours 4 to 12 | Audit for real issues and reduce misunderstandings | Citations checked, paraphrasing cleaned up, detector results interpreted carefully |
| Hours 12 to 24 | Respond professionally and request a fair review | Email sent, meeting requested, materials ready |

First hour: preserve evidence (do not “panic edit”)

The most common self-inflicted problem is immediately rewriting the entire document. If your instructor, editor, or manager already has the original submission, sudden major changes can look like concealment.

Instead, do this:

- Make a read-only copy of the submitted version (PDF export plus an original file export).

- Save your document history:

  - Google Docs: take screenshots of Version History and also export versions if possible.

  - Microsoft Word: save with tracked changes if you have them, and preserve file metadata.

- Download related artifacts into one folder:

  - outlines, photos of handwritten notes, research notes

  - browser notes, Zotero/Mendeley libraries, annotated PDFs

  - drafts you pasted into your document (even partial)

- Write a short timeline from memory (10 minutes max): when you started, when you drafted sections, what sources you used, and what you revised.

If you used any tools (Grammarly, translation support, dictation, or AI for brainstorming), note that now. Don’t guess later.
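The read-only copy step from the list above can be partly automated. The sketch below is one possible approach, not an official procedure: a hypothetical helper, `preserve_copy`, copies a file into an evidence folder, removes write permission from the copy, and records a SHA-256 hash plus the file’s last-modified time. The function name, folder layout, and returned fields are assumptions chosen for illustration. It refuses to overwrite an existing copy so that nothing already saved gets replaced.

```python
import hashlib
import shutil
import stat
from datetime import datetime, timezone
from pathlib import Path


def preserve_copy(source: Path, evidence_dir: Path) -> dict:
    """Copy `source` into `evidence_dir`, mark the copy read-only, return a record.

    Hypothetical helper for illustration; names and fields are assumptions.
    """
    evidence_dir.mkdir(parents=True, exist_ok=True)
    target = evidence_dir / source.name
    if target.exists():
        # Never replace evidence that has already been saved.
        raise FileExistsError(f"{target} already exists; not overwriting")

    # copy2 also copies the modification time where the platform allows it.
    shutil.copy2(source, target)
    # Drop write permission so the copy is not edited by accident.
    target.chmod(stat.S_IREAD)

    digest = hashlib.sha256(target.read_bytes()).hexdigest()
    modified = datetime.fromtimestamp(source.stat().st_mtime, tz=timezone.utc)
    return {
        "file": target.name,
        "sha256": digest,
        "last_modified_utc": modified.isoformat(timespec="seconds"),
    }
```

Calling `preserve_copy(Path("essay.docx"), Path("evidence"))` would place a read-only copy in `evidence/`; both names are placeholders. A hash and a timestamp only show that a file has not changed since you saved it, so treat them as a supplement to the version history kept by your writing tool, not a replacement for it.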

Hours 1 to 4: build a proof-of-authorship packet

Your best defense is usually not a debate about detector science. It is a clean, organized “process record” that makes authorship easy to verify.

Create a folder named something like Authorship Packet - [Assignment Name] and add:

1) Draft trail

Include anything that shows evolution:

- early outline and revised outline

- partial drafts (even messy ones)

- old exports or autosaves

- tracked changes or comments

2) Research trail

Include:

- a sources list with links (and access dates)

- PDFs you referenced (if allowed)

- notes that map a claim to a source

3) Writing fingerprints

These are small signals that are hard to fake after the fact:

- instructor feedback you implemented (with comments)

- topic-specific decisions (why you chose one framework, one dataset, one quote)

- your own prior writing samples showing a consistent voice

Here’s a simple way to organize evidence so a reviewer can scan it quickly:

| Evidence | How to capture it quickly | What it helps prove |
|---|---|---|
| Version history | Screenshots + timestamps | The text was built gradually |
| Outlines and notes | Export or photos | You planned and synthesized |
| Source annotations | Highlights + margin notes | You engaged with the material |
| Draft iterations | Separate files named by date | Authentic revision process |
| Prior writing samples | Earlier graded work | Voice consistency over time |

Do not fabricate anything. If you don’t have a draft trail, don’t invent one. You can still defend yourself using other methods (see “fair review options” below).
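If your packet contains many files, a short index can help a reviewer scan it. The sketch below assumes the packet is a single folder and uses a hypothetical function, `build_manifest`, to list every file with its size and last-modified time, oldest first, and to write that list to `manifest.csv`. The file name and column names are assumptions. It only reads what is already there and records it; it does not change any timestamps.

```python
import csv
from datetime import datetime, timezone
from pathlib import Path


def build_manifest(packet_dir: Path, manifest_name: str = "manifest.csv") -> list[dict]:
    """List files in the packet, oldest first, and save the list as a CSV.

    Hypothetical helper for illustration; column names are assumptions.
    """
    rows = []
    for path in sorted(packet_dir.rglob("*")):
        # Skip folders and the manifest itself.
        if not path.is_file() or path.name == manifest_name:
            continue
        info = path.stat()
        modified = datetime.fromtimestamp(info.st_mtime, tz=timezone.utc)
        rows.append({
            "path": path.relative_to(packet_dir).as_posix(),
            "bytes": info.st_size,
            "modified_utc": modified.isoformat(timespec="seconds"),
        })

    # ISO timestamps in the same timezone sort correctly as plain strings.
    rows.sort(key=lambda row: row["modified_utc"])

    with open(packet_dir / manifest_name, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["path", "bytes", "modified_utc"])
        writer.writeheader()
        writer.writerows(rows)
    return rows
```

File modification times can shift when files are copied or synced, so present the manifest as a table of contents for your evidence, not as proof in its own right.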

Hours 4 to 12: audit your submission for real issues (and reduce detector triggers the right way)

Even when an accusation is unfair, your document may contain issues that make it look suspicious. Fixing those issues is not “gaming the system”; it strengthens the quality of your work and demonstrates good faith.

Check for citation and paraphrasing problems first

A surprising number of “AI” disputes are actually about:

- patchwriting (too-close paraphrase)

- missing citations

- generic summaries without specific evidence

Do a quick pass:

- Ensure every non-obvious claim is sourced.

- Replace vague phrases (“studies show”, “experts believe”) with specific citations.

- Add page numbers for direct quotations if your style guide expects them.

This matters because weak sourcing can make writing look machine-generated even when it isn’t.
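The vague-phrase check in the quick pass above is easy to script. This is a minimal sketch, assuming your draft is available as plain text. The hypothetical function `find_vague_claims` reports the line number of each match. The first two phrases come from this guide; the other two are added as assumptions, and you would adjust the list to your own habits.

```python
import re

# "studies show" and "experts believe" come from the guide;
# the remaining phrases are illustrative assumptions.
VAGUE_PHRASES = [
    "studies show",
    "experts believe",
    "research suggests",
    "it is widely known",
]


def find_vague_claims(text: str, phrases=VAGUE_PHRASES) -> list[tuple[int, str]]:
    """Return (line_number, phrase) for every vague phrase found in `text`."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for phrase in phrases:
            pattern = r"\b" + re.escape(phrase) + r"\b"
            if re.search(pattern, line, flags=re.IGNORECASE):
                hits.append((number, phrase))
    return hits
```

Each hit is a prompt to add a specific citation or cut the claim. The script cannot tell whether a citation already follows the phrase, so review the matches by hand.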

Identify “AI-sounding” patterns that honest writers also accidentally produce

Detectors and humans both tend to react to the same surface patterns:

- very even sentence length across paragraphs

- repetitive transitions (Furthermore, Additionally, In conclusion)

- high-level statements with few concrete details

- overly neutral tone that avoids committing to a position

If your writing has these traits, revise for specificity and personal reasoning:

- Add one or two concrete examples that reflect your actual research path.

- Explain why you chose a source or interpretation.

- Vary sentence rhythm naturally (short emphasis sentences are fine).
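Two of the surface patterns above, even sentence length and repeated transitions, can be measured roughly. The sketch below is a simple self-check under stated assumptions, not a model of how any detector works: it splits sentences on end punctuation (which will misread abbreviations), counts words per sentence, and counts sentences that open with one of the three transitions named in this guide. The function names and report fields are hypothetical.

```python
import re
import statistics
from collections import Counter

# The three transitions named in the guide.
TRANSITIONS = ("furthermore", "additionally", "in conclusion")


def split_sentences(text: str) -> list[str]:
    """Naive split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def style_report(text: str) -> dict:
    """Summarize sentence-length spread and repeated transition openers."""
    sentences = split_sentences(text)
    lengths = [len(sentence.split()) for sentence in sentences]

    openers = Counter()
    for sentence in sentences:
        lowered = sentence.lower()
        for transition in TRANSITIONS:
            if lowered.startswith(transition):
                openers[transition] += 1

    return {
        "sentences": len(sentences),
        "mean_words": statistics.mean(lengths) if lengths else 0.0,
        # A small spread relative to the mean suggests very even sentences.
        "stdev_words": statistics.pstdev(lengths) if lengths else 0.0,
        "transition_openers": dict(openers),
    }
```

There is no threshold here that means “human” or “AI”. Use the numbers to find paragraphs worth rereading, then revise for specificity as described above.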

Use detectors as “signals”, not as the judge

If your case involves Turnitin or another detector score, it can help to run your text through a couple of widely used detectors so you understand what a reviewer might be seeing. Treat this as reconnaissance, not a target to “beat”.

If you want a fast, no-signup way to analyze how text may appear to detection systems, you can use tools and guides on Detection Drama to get an authenticity read and identify patterns that look artificial.

Important boundary: if your institution prohibits AI rewriting or “humanizer” tools, do not run your draft through a bypass tool and then submit the transformed output as original. That creates a second issue (misrepresentation) that is harder to defend than the initial accusation.

Hours 12 to 24: respond professionally and request a fair review

Your message should be calm, brief, and process-focused. You are not trying to “win an argument” in the email. You are trying to secure a fair evaluation method.

Email template you can adapt

Subject: Request for review of AI-authorship concern for [Assignment Name]

Hello [Name],

I’m writing regarding the concern that my submission for [assignment/project] was AI-generated. I take authorship and academic integrity seriously.

I did not intend to violate any policy. I can provide documentation of my writing process, including [version history/drafts/notes/source annotations], and I’m happy to meet to walk through how I developed the work.

Could we schedule a time to review the evidence and discuss an appropriate way to verify authorship (for example, a brief oral review of key sections or an in-person writing sample)?

Thank you,

[Your name]

[Course/Section or Team]

What to request in the meeting (fair verification options)

If you’re confident you wrote it, propose methods that legitimate authors can pass:

- Oral defense: explain your thesis, sources, and reasoning choices.

- Live writing sample: write a short paragraph on a related prompt under observation.

- Revision session: revise a flagged section in real time, explaining changes.

- Comparative review: compare against prior writing samples and your documented drafts.

These are stronger than arguing about the exact detector percentage.

If you did use AI: what to do (without making it worse)

Many policies do not ban all AI use. They restrict undisclosed or substitutive use (for example, generating the full submission). If you used AI for brainstorming, outlining, grammar, or clarity, your best move is usually controlled transparency.

First, check the actual policy language

Look for specifics like:

- “AI tools permitted for proofreading”

- “AI tools must be cited/disclosed”

- “No generative AI for drafting”

If the rules require disclosure and you didn’t disclose, that is a procedural mistake you can still address.

Explain your AI use precisely

Avoid vague statements like “I only used it a little.” Instead, say something like:

- “I used AI to generate an outline, then wrote the draft myself.”

- “I used AI to suggest alternative phrasing in two paragraphs, and I edited heavily.”

- “I used a grammar tool to correct sentence-level issues.”

Then offer to:

- provide your original notes and drafts

- redo a portion under supervision

- add an AI-use disclosure statement, if that resolves the issue under policy

Do not claim you didn’t use AI if you did. If later evidence contradicts you, the integrity breach becomes the story.

If you did not use AI: how to defend yourself effectively

False positives and subjective suspicion happen, especially when:

- you write in a very formal style

- English is not your first language and you avoid slang

- you produce clean structure (topic sentences, balanced paragraphs)

- you used templates, rubrics, or heavily standardized formats

Your strongest approach is to show a consistent, traceable process.

What to emphasize

- Version history that shows gradual construction (not a single paste).

- Decision points: why you chose specific sources and how they changed your argument.

- Specificity: the small details that reflect real thinking (and imperfections).

- Consistency with prior work: voice, phrasing habits, recurring strengths and weaknesses.

How to talk about detector results without sounding evasive

Good phrasing:

- “I understand detectors can be one signal. I’d like to provide process evidence and complete an oral review to verify authorship.”

Risky phrasing:

- “Turnitin is wrong, so this is unfair.”

You can acknowledge uncertainty while still advocating for a fair method.

Mistakes that commonly cost people their case

- Over-editing immediately after the accusation (looks like cleanup).

- Submitting a newly rewritten version without explaining why.

- Arguing aggressively instead of proposing verification steps.

- Focusing only on the detector’s flaws and providing no authorship evidence.

- Fabricating drafts, notes, or timestamps.

Even if you feel wrongly accused, keep your tone measured. Reviewers are more likely to help when you sound cooperative and organized.

How to prevent this in the future (a simple “proof of authorship” workflow)

You can reduce risk dramatically with lightweight habits that take minutes, not hours.

Write in a tool that preserves history

- Draft in Google Docs or another editor with version history.

- Avoid drafting elsewhere and pasting the finished text in all at once; a single paste leaves no history.

Keep a tiny “process log”

A simple document with:

- date started

- key sources

- major revision notes
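A process log can be as plain as a text file with one dated line per work session. As a minimal sketch, the hypothetical function `log_entry` below appends such a line; the file format, the separator, and the parameter names are all assumptions, and a handwritten note in the same document works just as well.

```python
from datetime import date
from pathlib import Path


def log_entry(log_path: Path, note: str, sources=(), on=None) -> str:
    """Append one dated line to the process log and return it.

    Hypothetical helper for illustration; the line format is an assumption.
    """
    day = (on or date.today()).isoformat()
    line = f"{day} | {note}"
    if sources:
        line += " | sources: " + "; ".join(sources)
    # Append mode keeps earlier entries intact.
    with open(log_path, "a", encoding="utf-8") as handle:
        handle.write(line + "\n")
    return line
```

For example, `log_entry(Path("process_log.txt"), "Drafted introduction", sources=["Smith 2020"])` would add one line stamped with today’s date; the file name and source are placeholders.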

Save your outlines and rough drafts

Messy drafts are valuable evidence. Don’t delete them.

If you use AI, disclose and limit it

- Follow the policy.

- Keep prompts and outputs if disclosure is required.

- Use AI for support tasks (clarity, brainstorming) rather than replacement authorship, unless explicitly allowed.

Do a pre-submission authenticity check (especially for high-stakes work)

Before submitting, run a quick review for:

- generic filler

- missing citations

- repetitive structure

If you want tools that analyze “AI-like” signals and help you revise toward more natural, human-style writing, you can explore the free resources on Detection Drama. Use them to improve clarity and authenticity, and always stay aligned with your institution’s rules.

A final note on strategy

In most cases, you do not need to prove that AI detectors are imperfect. You need to prove that your process is credible and that the evaluation should not rest on a single automated score.

If you follow the 24-hour plan, you’ll walk into the conversation with what reviewers rarely see: calm communication, organized evidence, and a reasonable request for verification.