GPTHuman

Undetectable AI

StealthGPT

WriteHuman

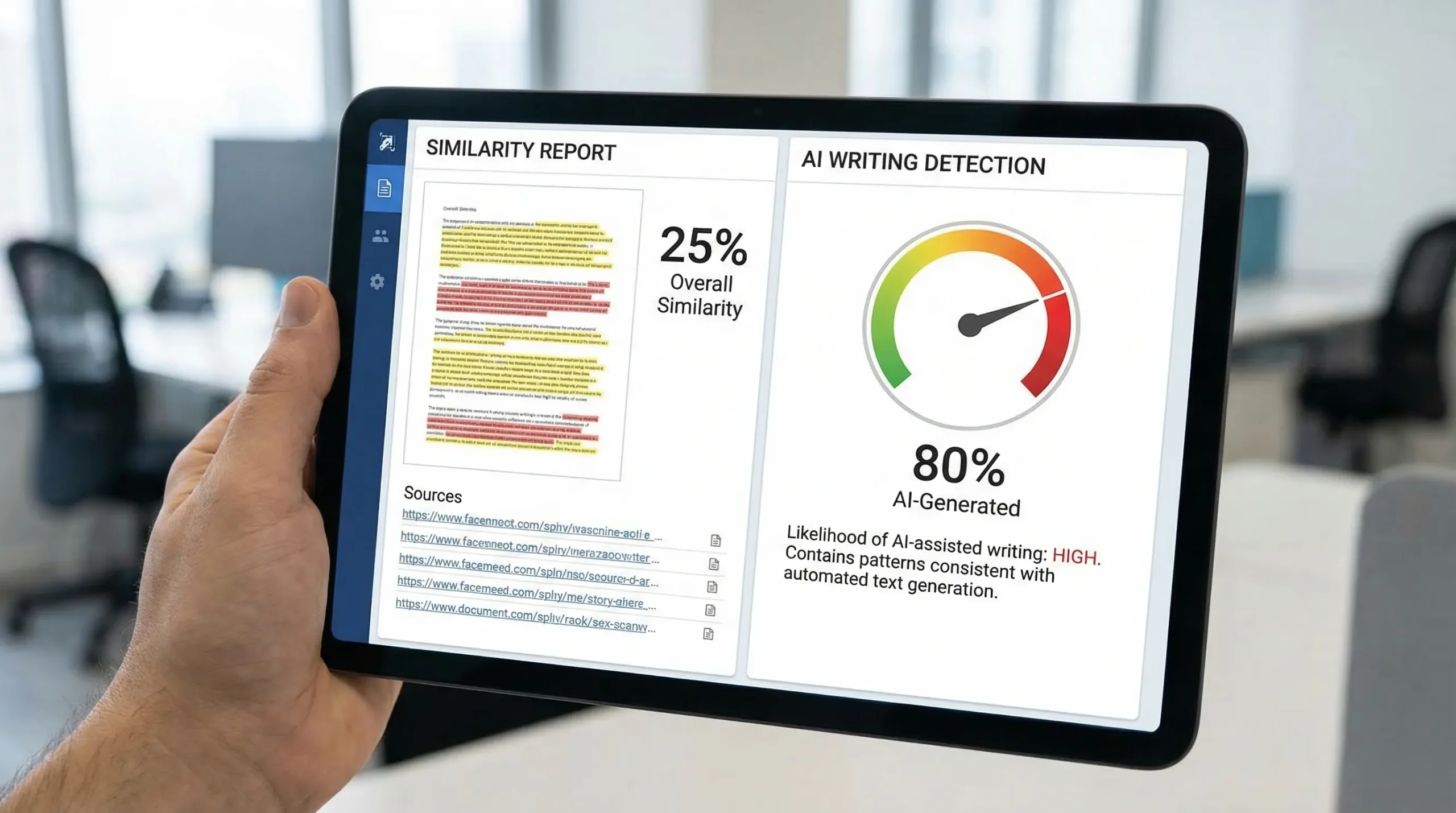

Turnitin AI % vs Similarity %: what does each one actually tell you? Seeing two big numbers in Turnitin (an AI % and a Similarity %) can feel like getting two diagnoses at once. They look comparable, they are both percentages, and both can cause anxiety for students, instructors, and even professionals submitting writing samples.

But they are not measuring the same thing at all.

This guide breaks down what Turnitin’s Similarity score actually is, what the AI writing score is trying to estimate, why you can have a high value in one and a low value in the other, and what to do next without guessing.

The simplest difference: “Matches” vs “Authorship signals”

Turnitin’s two percentages come from two different systems:

- Similarity % is a text-matching metric. It compares your document to Turnitin’s comparison databases and shows what portion of your text matches other sources.

- AI % (AI writing indicator) is an authorship-likelihood estimate. It analyzes linguistic patterns and signals that may correlate with AI-assisted writing.

A high Similarity score does not mean “AI,” and a high AI score does not mean “plagiarism.” They can overlap, but they are not interchangeable.

What the Turnitin Similarity % actually measures

Similarity is about overlap with existing text

Turnitin Similarity works like a sophisticated “find matching strings” engine across sources such as:

- Web pages (depending on your institution’s settings)

- Academic publications and journals

- Student paper repositories (depending on licensing and policy)

The output is a Similarity score, plus a report that highlights matched segments and links them to specific sources.

Similarity is not a plagiarism verdict

This is the most misunderstood part.

A Similarity score:

- Does not judge intent (whether matched text was copied or legitimately quoted and attributed)

- Does not understand context (standard phrases in a field, assignment templates, common definitions)

- Does not evaluate citation quality by itself

That is why institutions typically treat Similarity as a screening signal, then a human reviews the highlighted matches.

Why Similarity can be high even when you wrote everything yourself

Common legitimate causes include:

- Quotations (especially if you quote multiple lines)

- Bibliography / references being matched

- Assignment cover pages and templates used by many students

- Method sections in lab reports (often similar phrasing is unavoidable)

- Legal or policy language that must be exact

Many Turnitin setups allow reviewers to exclude quotes and bibliographies, and to ignore very small matches. These settings can change the percentage significantly without changing your actual writing.
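To make "what portion of your text matches other sources" and the effect of exclusion settings concrete, here is a toy sketch in Python. It is not Turnitin's algorithm, which is proprietary. It assumes a simple word n-gram (shingle) overlap, a hypothetical `min_match_words` threshold standing in for the small-match filter, and quote exclusion implemented by deleting text inside double quotes. All function names, parameters, and defaults are illustrative.

```python
import re


def words(text: str) -> list[str]:
    # Lowercase and keep runs of letters, digits, and straight apostrophes.
    return re.findall(r"[a-z0-9']+", text.lower())


def strip_quotes(text: str) -> str:
    # Assumption: "exclude quotes" means deleting text inside straight or curly double quotes.
    return re.sub(r'["“][^"”]*["”]', " ", text)


def similarity_percent(submission: str, sources: list[str], n: int = 5,
                       min_match_words: int = 8, exclude_quotes: bool = False) -> float:
    """Toy Similarity %: share of submission words covered by matched runs of
    at least min_match_words words, built from shared n-word sequences."""
    if exclude_quotes:
        submission = strip_quotes(submission)
    sub = words(submission)
    if len(sub) < n:
        return 0.0

    # Index every n-word sequence that appears in any source.
    source_ngrams = set()
    for src in sources:
        w = words(src)
        source_ngrams.update(tuple(w[i:i + n]) for i in range(len(w) - n + 1))

    # Mark each submission word that is part of a shared n-word sequence.
    covered = [False] * len(sub)
    for i in range(len(sub) - n + 1):
        if tuple(sub[i:i + n]) in source_ngrams:
            for j in range(i, i + n):
                covered[j] = True

    # Hypothetical "small match" filter: ignore matched runs shorter than min_match_words.
    matched = run = 0
    for flag in covered + [False]:  # trailing False flushes the last run
        if flag:
            run += 1
        else:
            if run >= min_match_words:
                matched += run
            run = 0
    return 100.0 * matched / len(sub)


source = "The mitochondria is the powerhouse of the cell and produces most of its energy."
paper = ('As the textbook puts it, “the mitochondria is the powerhouse of the cell '
         'and produces most of its energy” which I tested in lab.')

print(round(similarity_percent(paper, [source]), 1))                       # 58.3 (quote counted)
print(round(similarity_percent(paper, [source], exclude_quotes=True), 1))  # 0.0 (quote excluded)
```

Excluding the quotation drops this toy score from 58.3% to 0% without changing a word the student wrote. Because the sketch deletes quoted words rather than just not counting them as matches, it also shrinks the denominator; this is a simplifying assumption, not a claim about how a real Similarity Report handles it.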

What the Turnitin AI % is (and what it is not)

AI % is an indicator, not a source match

Turnitin’s AI writing indicator does not work by finding your text somewhere else online.

Instead, it tries to answer a different question:

“Does this writing contain patterns that look more like AI-generated text than typical human writing?”

That means two important things:

- It can flag original writing that “looks AI-like.”

- It can miss AI-assisted writing that has been heavily edited or is short, messy, or highly personal.

Why AI scores can be wrong (in both directions)

AI detection is probabilistic. False positives and false negatives are possible, especially when writing:

- Is very formal, generic, or “essay-shaped”

- Uses repetitive sentence structures

- Was heavily grammar-corrected

- Was translated (or written by a non-native speaker with standardized phrasing)

- Is very short (many detectors perform worse on short samples)

This is why many academic integrity policies, and careful instructors, treat the AI indicator as one input, not a stand-alone conclusion.

Turnitin AI % vs Similarity %: side-by-side comparison

| Metric in Turnitin | What it measures | What it compares against | What a high score can mean | What it does not prove |
|---|---|---|---|---|
| Similarity % | Text overlap | Turnitin databases + web sources (varies by license/settings) | You share wording with existing sources | Intentional plagiarism or AI use |
| AI % | Likelihood of AI-assisted writing | A detection model’s learned patterns | Your writing resembles AI-generated style patterns | Plagiarism, misconduct, or that you used AI |

How to interpret common score combinations

High Similarity %, low AI %

This often means “matching text,” not “machine-written text.” Typical cases:

- Lots of quotes (properly cited, but long)

- Too much copy-pasted source material even if cited (still an academic issue in many rubrics)

- Reference list or appendix getting counted

- Reused boilerplate (lab methods, policy excerpts)

What to do: inspect the Similarity Report highlights. If the matches are mostly quotes/references, ask whether the reviewer can exclude them. If the matches are in your paraphrases, revise for originality and ensure citations are correct.

Low Similarity %, high AI %

This is the combination that confuses people most. It can mean any of the following:

- Your text does not match existing sources (good), but its style resembles AI output (possible)

- You used an AI assistant and then rewrote enough to avoid matching sources (possible)

- You wrote it yourself, but it is very generic, evenly structured, and light on personal reasoning (also possible)

What to do: focus on authorship evidence and revision quality. Add genuine analysis, specific examples, and your own voice. Keep drafts and notes.

High Similarity %, high AI %

This can happen when someone:

- Generates text with AI and also copies chunks from sources

- Uses AI to paraphrase too closely to the original sources

- Includes large quoted or template sections plus AI-sounding filler

What to do: treat it like two separate problems. Fix matching text first (citations, quoting, paraphrase quality), then address style and authorship transparency.

Low Similarity %, low AI %

This is typically the “green zone,” but it still does not guarantee the writing meets the assignment rules. For example, you can have low Similarity and low AI but still have weak sources or incorrect citations.

Why your Turnitin percentages can change between uploads

If you are seeing different AI or Similarity percentages across submissions, it does not necessarily mean you did something wrong.

Common reasons include:

- Different Turnitin settings (exclude bibliography, exclude quotes, small match filters)

- Database updates (new web pages indexed, new student papers added)

- Document formatting changes (headings, tables, footnotes, citations)

- Section changes (editing intros and conclusions can swing AI indicators more than you expect)

If you’re comparing two reports, compare them under the same settings and check which sections changed.

What to do if you want to lower Similarity the right way

Similarity is the easier number to improve ethically because it is tied to visible overlaps.

The goal is not “0%.” The goal is: matches are expected, explained, and properly used.

Practical fixes that usually help:

- Replace patchwork paraphrasing with true paraphrasing (read, close the source, then explain in your own structure).

- Quote only what must be exact, and keep quotes short.

- Make sure every borrowed idea is cited, not just direct quotes.

- Separate references/appendices clearly if your institution counts them.

- Avoid copying definitions when a one-sentence explanation works.

If you are in a technical field where phrasing is standardized, ask your instructor what Similarity range is normal for your assignment type.

What to do if your AI % is high (without playing whack-a-mole)

A high AI indicator is best handled with process transparency and authentic revision, not panic rewriting.

Build authorship evidence

If your school or organization asks questions, being able to show your process is often more persuasive than trying to “optimize” a score:

- Keep outlines, notes, and source annotations

- Save draft versions (even rough ones)

- Be prepared to explain key arguments and why you chose sources

Revise for human clarity and specificity

AI-like writing tends to be smooth but generic. Human writing tends to include lived context, concrete choices, and unevenness that comes from real thinking.

Revisions that often help:

- Add specific examples, numbers, or case details that you can defend

- Replace broad claims with sourced statements

- Vary sentence length naturally (without forcing it)

- Remove “filler transitions” that sound polished but add no meaning

If you used AI assistance, align with your policy

Different institutions treat AI differently. Some allow it for brainstorming or grammar, some require disclosure, and some ban it.

If you’re unsure, check your syllabus or academic integrity policy. If allowed, consider adding a brief disclosure (where appropriate) that clarifies how you used AI (for example, “outline assistance” or “grammar suggestions”).

A quick note for job seekers and professionals

Turnitin is mainly an academic tool, but AI concerns have spilled into hiring too. Writing tests, take-home assignments, and content roles increasingly evaluate whether your work reflects your own thinking.

If you are applying for roles where writing credibility matters (marketing, client services, leadership communications), focus on producing drafts that show a clear point of view and domain understanding. Agencies that recruit for senior and business-critical roles, like Optima Search Europe (an international recruitment agency), often emphasize candidate quality and communication fit, and those are hard to fake with generic AI text.

Similarity and AI are different, but they interact in one important way

Even though Similarity and AI scores are separate, one can influence how the other is interpreted by a reader.

For example:

- A paper with high Similarity might trigger closer scrutiny of AI indicators, even if AI is low.

- A paper with high AI might prompt an instructor to check whether citations and paraphrases are authentic, even if Similarity is low.

So the best strategy is not to chase the lowest numbers but to produce work that is:

- properly sourced

- genuinely argued

- consistent with your institution’s rules

- defensible if questioned

Frequently Asked Questions

Is Turnitin Similarity the same as plagiarism? No. Similarity shows text overlap with sources. Plagiarism is a human judgment based on how and why that overlap exists (and whether it is properly cited).

Can I have 0% Similarity in Turnitin? You can, but it is not always realistic or even desirable. Properly quoted material, references, and common phrases can create matches. Many assignments naturally produce some similarity.

Does a high Turnitin AI % prove I used ChatGPT? No. The AI % is an indicator based on patterns, not proof. It can be wrong, and it does not identify a specific tool.

Why did my Similarity % change after resubmitting the same paper? Settings (exclude quotes/bibliography), database updates, or formatting changes can shift the percentage. Compare reports under the same filters.

What matters more to instructors: AI % or Similarity %? It depends on the class policy and the assignment. Many instructors treat both as signals and then evaluate evidence like drafts, citations, and your ability to explain the work.

Want a clearer read on what Turnitin is reacting to?

If you’re trying to understand why a draft is triggering AI concerns, or you want to reduce detector risk while keeping your meaning intact, Detection Drama publishes AI detection bypass guides and offers a free humanizer tool you can use instantly (no email required).

Explore resources at DetectionDrama.com to analyze your text, review detection signals, and improve readability before you submit.