If you have ever asked, “why does Turnitin flag AI when other detectors don’t?”, you are not alone. It is a common (and frustrating) outcome: a text that reads as “Human” in GPTZero, ZeroGPT, or other popular scanners shows a meaningful AI percentage inside Turnitin.

This mismatch usually does not mean Turnitin is “better” or that the other tools are “broken.” It means you are comparing systems that:

- use different models and training data

- measure different signals (and at different text lengths)

- apply different thresholds based on different risk tolerance

- behave differently on academic writing, templates, and edited AI drafts

Below is a practical, non-hype breakdown of what is actually happening, why Turnitin often stands out, and what you can do if your writing is being flagged.

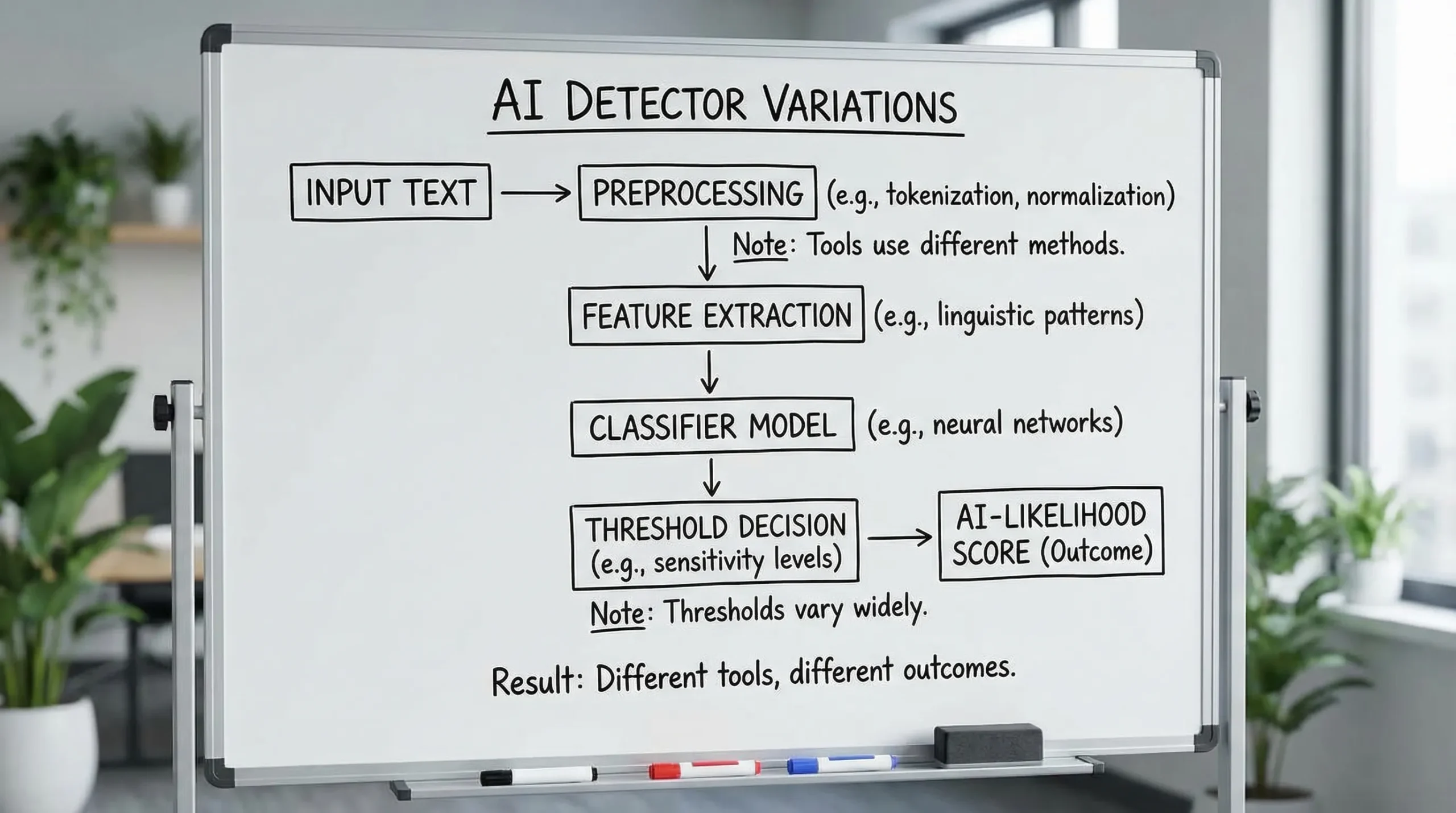

The key idea: AI detectors do not agree because they are not measuring the same thing

AI detection is not like plagiarism matching, where the same copied sentence can often be verified across tools. Most AI detectors are classifiers that estimate how “AI-like” a passage is based on statistical cues (predictability, uniformity, distribution of tokens, and other features). That output is probabilistic. It is not proof of authorship.

If you want a clear explanation of the limits here, this overview on what AI detectors can’t prove is worth reading. The short version is that detectors can generate a score, but they cannot definitively prove who wrote the text from the text alone.

So when two tools disagree, it is usually because each tool is:

- looking at different features

- weighting features differently

- using different minimum-length rules

- tuned to minimize different types of errors

Why Turnitin is more likely to flag

Turnitin operates in a high-stakes environment (education) where the consequences of missing AI-assisted writing can be significant. That reality influences how the system is tuned. Here are the most common reasons Turnitin will flag AI when other detectors don’t.

1) Turnitin is optimized for academic-style writing (where “AI-like” patterns are common)

A lot of academic writing is naturally “predictable”:

- structured introductions (context, gap, thesis)

- cautious hedging (“suggests,” “may indicate,” “it is important to note”)

- formal transitions (“moreover,” “in contrast,” “therefore”)

- balanced conclusions

Those are also patterns that appear frequently in AI-generated essays. Some consumer detectors lean heavily on surface-level “ChatGPT vibes” (overly clean flow, certain phrase patterns). Turnitin’s academic focus can make it more sensitive to the kinds of regularity and structure that exist in real student writing too, which can raise false positives.
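
“Predictable” has a concrete meaning in language modeling. One common formalization is perplexity: the exponential of the average negative log-probability a language model assigns to each token, given the tokens before it. Turnitin does not publish its features, so treat this as an illustration of the general idea rather than of its model. The sketch below assumes you already have per-token probabilities (the numbers are invented) and shows why formulaic phrasing scores as more predictable.

```python
import math


def perplexity(token_probs):
    """Perplexity from the probabilities a language model gave each token.

    token_probs: p(token_i | earlier tokens) for every token, each in (0, 1].
    Lower values mean the model found the text more predictable.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token, in nats.
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)


# Invented probabilities for illustration; not output from any real model.
formulaic = [0.6, 0.7, 0.5, 0.8, 0.6]       # e.g. "it is important to note that"
idiosyncratic = [0.2, 0.05, 0.3, 0.1, 0.4]  # unusual, specific word choices

print(f"formulaic:     {perplexity(formulaic):.2f}")
print(f"idiosyncratic: {perplexity(idiosyncratic):.2f}")
```

With these made-up inputs the formulaic list comes out at roughly 1.6 and the idiosyncratic one at roughly 6.1. Stock academic phrasing pushes the number down, which is one reason careful human writing can overlap with model output.
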

2) Different thresholds (sensitivity vs specificity)

Every detector makes a tradeoff:

- Higher sensitivity catches more AI-assisted text, but increases false positives.

- Higher specificity reduces false positives, but misses more AI-assisted text.

Turnitin’s threshold choices can feel stricter than those of tools designed for casual checks. Even when two detectors use similar underlying signals, a different cutoff can flip the label.

3) Turnitin often evaluates longer documents and more contiguous context

Many free scanners behave differently depending on how much text you paste:

- Short passages can look “human” because there is not enough signal.

- Long, uniform passages make patterns easier to detect.

Turnitin is frequently applied to full submissions (multi-page essays, reports, discussion posts). That longer context can increase the confidence of its classifier, especially if the writing has consistent rhythm and low variance across paragraphs.

4) Preprocessing differences (quotes, citations, formatting) can change the score

Detectors do not always “see” your document the same way. Examples of preprocessing differences that can swing results:

- whether in-text citations are removed or retained

- how block quotes are handled

- whether references/bibliography sections are included

- how headings, bullet points, and tables are treated

- how footnotes are interpreted

If one tool strips references and another includes them, you are not comparing the same input. In practice, Turnitin-style workflows can treat academic formatting as part of the analysis, which can shift the score in either direction.

5) Turnitin may be more robust to “light editing” that fools simpler detectors

A common pattern is:

- generate a draft with an LLM

- replace a few words

- add citations

- shuffle a few sentences

Some scanners drop their AI score quickly after surface edits. Others stay suspicious because deeper signals persist (sentence structure uniformity, low burstiness, consistent cadence, constrained vocabulary distribution). Turnitin can be harder to move with cosmetic changes alone.

6) Model drift and update cadence

AI detection changes fast:

- LLMs change

- student writing changes (because people edit AI drafts)

- detectors update their models

Two tools tested on the same day can be running very different versions of their detection approach. So a mismatch can be “real” even if nothing about your writing changed.

A quick comparison: Turnitin vs “web” AI detectors

This table is simplified, but it helps explain why disagreement is normal.

| Factor | Turnitin (typical use case) | Many online AI detectors (typical use case) |
|---|---|---|
| Primary environment | Academic integrity workflows | General-purpose checking (students, creators, SEO) |
| Document length | Often full submissions | Often pasted excerpts |
| Risk tolerance | Tends to prioritize catching AI | Often prioritizes fewer false positives |
| Formatting/citations | Common in input | Sometimes stripped or inconsistently handled |
| Adversarial edits | Assumes students may edit AI drafts | Often weaker to light paraphrasing |
| Output meaning | Indicator, not proof | Indicator, not proof |
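
The “Risk tolerance” row comes down to where a tool sets its cutoff. A minimal sketch, assuming a detector outputs a score from 0 to 1 and flags anything at or above a threshold (the scores and labels below are hypothetical, not from Turnitin or any real tool), shows the sensitivity/specificity tradeoff:

```python
def confusion_rates(scores, is_ai, threshold):
    """Return (true positive rate, false positive rate) at a given cutoff.

    scores:    detector scores in [0, 1]; higher means more "AI-like".
    is_ai:     ground truth; True if the text really was AI-generated.
    threshold: any score >= threshold is flagged as AI.
    """
    flagged = [s >= threshold for s in scores]
    true_pos = sum(f and a for f, a in zip(flagged, is_ai))
    false_pos = sum(f and not a for f, a in zip(flagged, is_ai))
    n_ai = sum(is_ai)
    n_human = len(is_ai) - n_ai
    tpr = true_pos / n_ai if n_ai else 0.0         # sensitivity
    fpr = false_pos / n_human if n_human else 0.0  # 1 - specificity
    return tpr, fpr


# Hypothetical scores for eight documents (four AI, four human).
scores = [0.92, 0.81, 0.64, 0.45, 0.58, 0.33, 0.21, 0.12]
is_ai = [True, True, True, True, False, False, False, False]

for threshold in (0.5, 0.7):
    tpr, fpr = confusion_rates(scores, is_ai, threshold)
    print(f"cutoff {threshold}: flags {tpr:.0%} of AI texts, {fpr:.0%} of human texts")
```

At 0.5 the sketch catches three of the four AI texts but also flags the human-written document that scored 0.58; at 0.7 it flags no human text but misses half the AI texts. Two tools can see the same signal and still disagree purely because of where the cutoff sits.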

The most common “false positive” triggers in Turnitin

Even if you wrote the text yourself, these patterns can push a detector toward “AI-like.”

Highly standardized writing (templates, lab reports, case notes)

If your assignment naturally forces rigid structure, the writing may become statistically uniform.

Examples:

- lab reports with repeated phrasing (“The results show…”)

- nursing or social work case notes

- business memos

- research summaries

These genres reduce stylistic variation, and low variation is often interpreted as “model-like.”
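
A quick way to see how much a template is flattening your own writing is to count how often sentences open the same way. This is a self-check sketch, not how Turnitin or any detector works internally; the function name, the three-word window, and the naive sentence splitter are all illustrative assumptions.

```python
import re
from collections import Counter


def repeated_openings(text, n_words=3, min_count=2):
    """Return sentence openings (first n_words words) used at least min_count times."""
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    openings = Counter(
        " ".join(s.lower().split()[:n_words])
        for s in sentences
        if len(s.split()) >= n_words  # skip sentences too short to compare
    )
    return {opening: n for opening, n in openings.items() if n >= min_count}


lab_report = ("The results show that growth increased. The results show a clear trend. "
              "Temperature was held constant. The results show no outliers.")
print(repeated_openings(lab_report))
```

Here “the results show” opens three of four sentences, exactly the kind of repetition a lab-report template encourages.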

Heavy paraphrasing and over-polishing

Ironically, aggressive paraphrasing can make writing look more algorithmic:

- sentences become similar length

- vocabulary becomes “thesaurus-clean”

- transitions become overly consistent

A human draft with natural roughness (some short sentences, some long, occasional idiosyncratic phrasing) can look more human than a heavily polished version.
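
Sentence-length variation (often called “burstiness”) is easy to measure yourself. This sketch uses the coefficient of variation of sentence length in words (standard deviation divided by mean) as a stand-in; that choice is an assumption, since detectors do not publish their exact formulas, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_length_variation(text):
    """Coefficient of variation of sentence lengths (std dev / mean, in words).

    Near 0: every sentence is about the same length.
    Higher: a mix of short and long sentences.
    """
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


polished = ("The method improves accuracy across tasks. "
            "The results confirm this clear pattern. "
            "The findings support further research efforts.")
rough = ("It worked. But only after we rebuilt the sampling step twice and "
         "swapped the loss function, which nobody expected. Why? Noise.")

print(f"polished: {sentence_length_variation(polished):.2f}")
print(f"rough:    {sentence_length_variation(rough):.2f}")
```

The polished sample (three six-word sentences) scores exactly 0; the rough one scores about 1.29. A higher number is not automatically “more human”; the point is only that forced uniformity shows up as a value near zero.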

Non-native English patterns (or forced “formal academic English”)

Non-native writers often rely on safe constructions and predictable phrasing. So do AI models. That overlap can increase false positives.

Overuse of safe, generic claims

Text that avoids specifics can look AI-like:

- few concrete details

- minimal personal reasoning steps

- vague evidence (“studies show…”) without clarifying which study

- conclusions that restate rather than argue

This is fixable without “bypassing” anything. Adding specificity and showing reasoning usually helps.
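
As a revision aid (not a detector), you can flag sentences that lean on vague evidence without citing anything. The phrase list and the rule that a four-digit year counts as a citation are simplifying assumptions; adjust both for your discipline and citation style.

```python
import re

# Illustrative phrase list (an assumption); extend it for your own field.
VAGUE_PHRASES = ("studies show", "research suggests", "experts agree", "it is widely known")
# Crude citation test: any four-digit year from 1900 to 2099, e.g. "(Author, 2019)".
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def unsupported_claims(text):
    """Return sentences that use a vague-evidence phrase but contain no year."""
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [
        s for s in sentences
        if any(phrase in s.lower() for phrase in VAGUE_PHRASES) and not YEAR.search(s)
    ]


draft = ("Studies show that sleep affects memory. "
         "Research suggests sleep loss impairs recall (Author, 2019). "
         "Experts agree this matters for students.")
for sentence in unsupported_claims(draft):
    print("Needs a specific source:", sentence)
```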

What to do if Turnitin flags your writing (practical, defensible steps)

If your goal is academic compliance, the safest strategy is to make your work more demonstrably yours, not just “less detectable.”

Keep authorship evidence (this matters more than any score)

If you are challenged, evidence beats arguments about detectors.

Good artifacts to keep:

- outline versions (even rough)

- notes and sources you actually read

- drafts with timestamps (Google Docs version history is useful)

- instructor feedback iterations

- screenshots of your research trail (optional)

Improve “human signal” the right way: add reasoning, specificity, and constraints

Instead of trying to “beat” a detector with random synonym swaps, revise like a strong writer:

- Add two or three concrete examples that are specific to the prompt

- Explain why a point is true, not just that it is true

- Replace generic transitions with logical ones (“Because X, Y follows…”)

- Vary sentence length naturally (do not force it, just avoid monotony)

- Use discipline-appropriate language, including limitations and tradeoffs

Re-check the same text consistently across tools

If you want to compare detectors, make the comparison fair:

- Use the same exact text

- Use a similar length (do not test 200 words in one tool and 2,000 in another)

- Decide whether references are included, then keep that consistent
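
Doing this by hand is error-prone. A minimal sketch, assuming your reference list begins on a line that reads “References,” “Bibliography,” or “Works Cited,” applies one rule consistently and returns a word count plus a short fingerprint to record next to each tool’s score. The function name is hypothetical; none of the detectors discussed here expose it.

```python
import hashlib
import re

# Headings that usually start a reference list (an assumption; adjust to your style guide).
REFERENCE_HEADING = re.compile(r"^\s*(references|bibliography|works cited)\s*$",
                               re.IGNORECASE | re.MULTILINE)


def prepare_for_comparison(text, include_references=False):
    """Normalize a document so every detector receives exactly the same input.

    Returns (cleaned text, word count, 12-character SHA-256 fingerprint).
    """
    if not include_references:
        match = REFERENCE_HEADING.search(text)
        if match:
            text = text[:match.start()]  # drop the heading and everything after it
    # Collapse runs of whitespace so copy/paste differences don't change the input.
    cleaned = " ".join(text.split())
    fingerprint = hashlib.sha256(cleaned.encode("utf-8")).hexdigest()[:12]
    return cleaned, len(cleaned.split()), fingerprint


essay = "Intro paragraph here.\n\nBody   paragraph.\n\nReferences\nSmith, J. (2020). A book."
cleaned, words, fp = prepare_for_comparison(essay)
print(words, fp)  # record these alongside each detector's result
```

If the fingerprints match, every tool saw identical input; if the word counts differ, the comparison is not fair.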

Avoid the biggest “AI draft” giveaway: uniformity across the whole document

Turnitin can react to documents that feel stylistically flat from start to finish.

A practical edit approach:

- tighten one paragraph aggressively

- let another paragraph be slightly more conversational (still academic)

- include at least one paragraph that shows your own reasoning steps

That variation is normal for humans.
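
To check whether a draft is flat at the document level, compare paragraphs rather than sentences. This rough sketch assumes paragraphs are separated by blank lines, reports each paragraph’s average sentence length, and summarizes how much those averages differ. It is a self-check under those assumptions, not a reconstruction of how Turnitin scores documents.

```python
import re
import statistics


def paragraph_profile(document):
    """Average sentence length (in words) for each paragraph in the document."""
    profile = []
    # Paragraphs are assumed to be separated by blank lines.
    for para in re.split(r"\n\s*\n", document.strip()):
        # Naive split: a sentence ends at ., ! or ? followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", para.strip()) if s]
        if sentences:
            profile.append(statistics.mean(len(s.split()) for s in sentences))
    return profile


def paragraph_spread(document):
    """Std dev of the per-paragraph averages; near 0 means a stylistically flat draft."""
    profile = paragraph_profile(document)
    return statistics.pstdev(profile) if len(profile) > 1 else 0.0


flat = "One two three four. Five six seven eight.\n\nNine ten eleven twelve. A b c d."
varied = ("Short one.\n\n"
          "This paragraph has a considerably longer sentence that keeps going for a while.")
print(paragraph_profile(flat), paragraph_spread(flat))
print(paragraph_profile(varied), paragraph_spread(varied))
```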

If you used AI assistance, follow your institution’s policy

Some schools allow AI for brainstorming or grammar, others do not.

If AI use is permitted, the best outcome is often transparency:

- disclose how you used it (brainstorming vs drafting)

- confirm you verified facts and wrote the final text

If it is not permitted, the only defensible path is to write the work yourself.

Why “beating Turnitin” is not a stable goal (even if a tool works today)

Turnitin and other systems update. Methods that lower detection scores can stop working overnight.

Also, detection is not the only risk. Many instructors evaluate:

- whether you can explain your argument in person

- whether your citations match your claims

- whether your style matches prior submissions

So even if a detector score goes down, inconsistency can raise other flags.

Where DetectionDrama fits (and how to use it responsibly)

DetectionDrama is built for people who want clearer answers and practical tools when detectors disagree. If you are dealing with a Turnitin AI flag, a useful workflow is:

- run an authenticity analysis to see which sections read as most “AI-like”

- revise those sections for specificity and reasoning (not just synonyms)

- only then consider a humanization pass for flow and naturalness

You can start with the free resources and instant-access tools at DetectionDrama (no email required). Just keep expectations realistic: no detector bypass is guaranteed long term, and policy compliance matters more than any score.

Frequently Asked Questions

Why does Turnitin flag AI when GPTZero says human? Turnitin and GPTZero use different models, preprocessing, and thresholds. Turnitin is often tuned for academic submissions and longer documents, which can amplify “AI-like” signals.

Does a Turnitin AI score prove I used ChatGPT? No. AI scores are probabilistic indicators, not proof of authorship. A high score can be caused by uniform, template-like writing, heavy paraphrasing, or other statistical patterns.

What parts of an essay trigger Turnitin AI detection most? Common triggers include long uniform paragraphs, generic claims without specific reasoning, overly polished paraphrasing, and standardized academic templates (lab reports, summaries).

Can citations and references affect AI detection? They can, depending on how a detector preprocesses the document. If one tool includes references and another strips them, you can see different scores on the “same” paper.

What is the safest way to respond to a Turnitin AI flag? Keep drafting evidence (notes, outlines, version history), revise for specificity and clear reasoning, and follow your institution’s AI policy. Evidence and clarity matter more than arguing about detector accuracy.

Try a calmer, more reliable workflow than “checking random detectors”

If you are stuck between conflicting AI detector results, use a workflow that shows you where the text looks AI-like and why, then fix those sections intentionally. DetectionDrama offers free guides and instant tools to help you analyze and rewrite toward more natural, human-like writing, especially when Turnitin is the one raising the flag.

Start here: DetectionDrama.