AI Detection Lawsuits: Every Student Case, Outcome, and What the Data Shows (2026)

KEY TAKEAWAYS

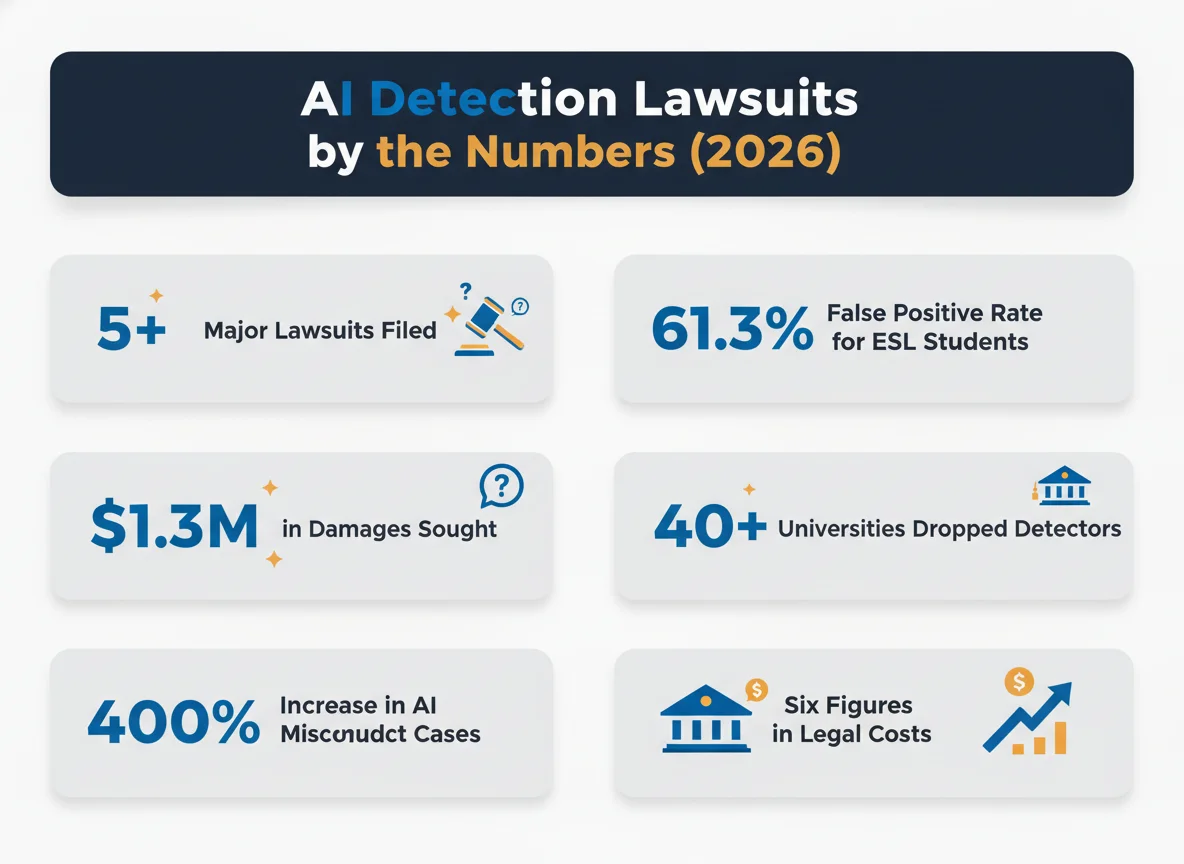

- → At least 5 federal lawsuits have been filed by students against educational institutions over AI detection accusations since 2024 (Court records)

- → Orion Newby won a landmark ruling against Adelphi University in February 2026 — a federal judge called the AI plagiarism finding "without merit" (Inside Higher Ed)

- → Weber-Wulff et al. tested 14 detection tools: none exceeded 80% accuracy (International Journal for Educational Integrity, 2023)

- → Stanford researchers found detectors falsely flagged 61.3% of non-native English speaker essays as AI-generated (Liang et al., 2023)

- → AI misconduct cases rose roughly 370% (nearly fivefold), from 1.6 to 7.5 students per 1,000 between 2022–23 and 2024–25 (Turnitin)

- → Over 40 universities including MIT, Yale, Johns Hopkins, and Berkeley have dropped AI detection tools (Multiple sources)

- → The Newby family spent six figures in legal fees to overturn a single false plagiarism charge (Inside Higher Ed, CBS News)

A new category of education litigation is taking shape in federal courts across the United States. Students accused of using AI on academic work, often on the strength of detection tools whose accuracy peer-reviewed research has called into question, are suing their institutions. These cases raise fundamental questions about due process, the reliability of AI detection technology, and whether universities can stake academic careers on tools that researchers have shown to produce high error rates, including disproportionately high false positive rates for non-native English speakers.

This article compiles every known student lawsuit filed over AI detection accusations, tracks their outcomes, and sets them against the scientific literature that plaintiffs are citing in court. It is intended as a single reference for students facing an accusation, administrators evaluating detection tools, and researchers tracking this emerging legal landscape.

1 The Complete AI Detection Lawsuit Database

At least five federal cases have been filed since September 2024. One ended in a student victory and one was dismissed without prejudice; the other three remain pending, although courts have already denied preliminary injunctions in two of them (Yale and Hingham). Common claims include due process violations, discrimination against non-native English speakers, and reliance on scientifically unreliable detection tools.

| Case | Institution | Filed | Detection Tool | Status | Damages Sought |
|---|---|---|---|---|---|
| Newby v. Adelphi University | Adelphi University | 2025 | Turnitin AI | Won | Not disclosed |
| Doe v. Yale University | Yale SOM (EMBA) | Feb 2025 | GPTZero | Pending | Unspecified economic losses |
| Yang v. Univ. of Minnesota | Univ. of Minnesota | Jan 2025 | GPTZero + faculty | Dismissed* | $1.335M combined |
| Harris v. Hingham Schools | Hingham High School | Sep 2024 | Teacher assessment | Pending | Not disclosed |
| Doe v. Univ. of Michigan | Univ. of Michigan | Early 2026 | Instructor judgment | Pending | Unspecified |

*Dismissed without prejudice — court found the university followed its procedures, but the student may refile.

The pattern across these cases is striking. Three of the five plaintiffs are non-native English speakers — a detail that aligns directly with peer-reviewed research showing AI detectors produce dramatically higher false positive rates for this population. Several plaintiffs have cited the same studies in their filings, and the question of whether detectors constitute reliable evidence is becoming central to how courts evaluate these disputes. Students who have been accused of AI use are increasingly finding that legal action is their only recourse when internal appeals fail.

2 Adelphi University: The First Student Victory Over AI Detection

In February 2026, Orion Newby became the first student to win a federal lawsuit over a false AI plagiarism accusation. A judge ruled that the plagiarism finding was "without merit." Turnitin had flagged his World Civilizations paper as fully AI-written, even though two other detectors cleared it.

| METRIC | VALUE | SOURCE |
|---|---|---|
| Detection tool used | Turnitin AI Detector | Court filing |
| Turnitin AI score | 100% AI-written | Inside Higher Ed |
| Other detectors' result | Human-written | CBS News |
| Family legal costs | Six figures | Inside Higher Ed |
| Court ruling | "Without merit" | Federal court |
| Charge removed from record | Yes | Court order |

Newby was a first-semester sophomore studying history at Adelphi University when Turnitin's AI detector flagged his paper on Christianity and Islam as entirely machine-generated. The university denied his appeal, prompting his parents to pursue legal action. Former U.S. attorney Andrew Lelling called the court's opinion "groundbreaking," saying it established that students deserve due process before AI detection evidence is used to impose academic penalties. The case has implications for how universities handle Turnitin AI false positives. Adelphi's policy provides for suspension or expulsion after two plagiarism offenses, so a single false positive can leave a student one offense away from removal.

3 The Research That Students Are Citing in Court

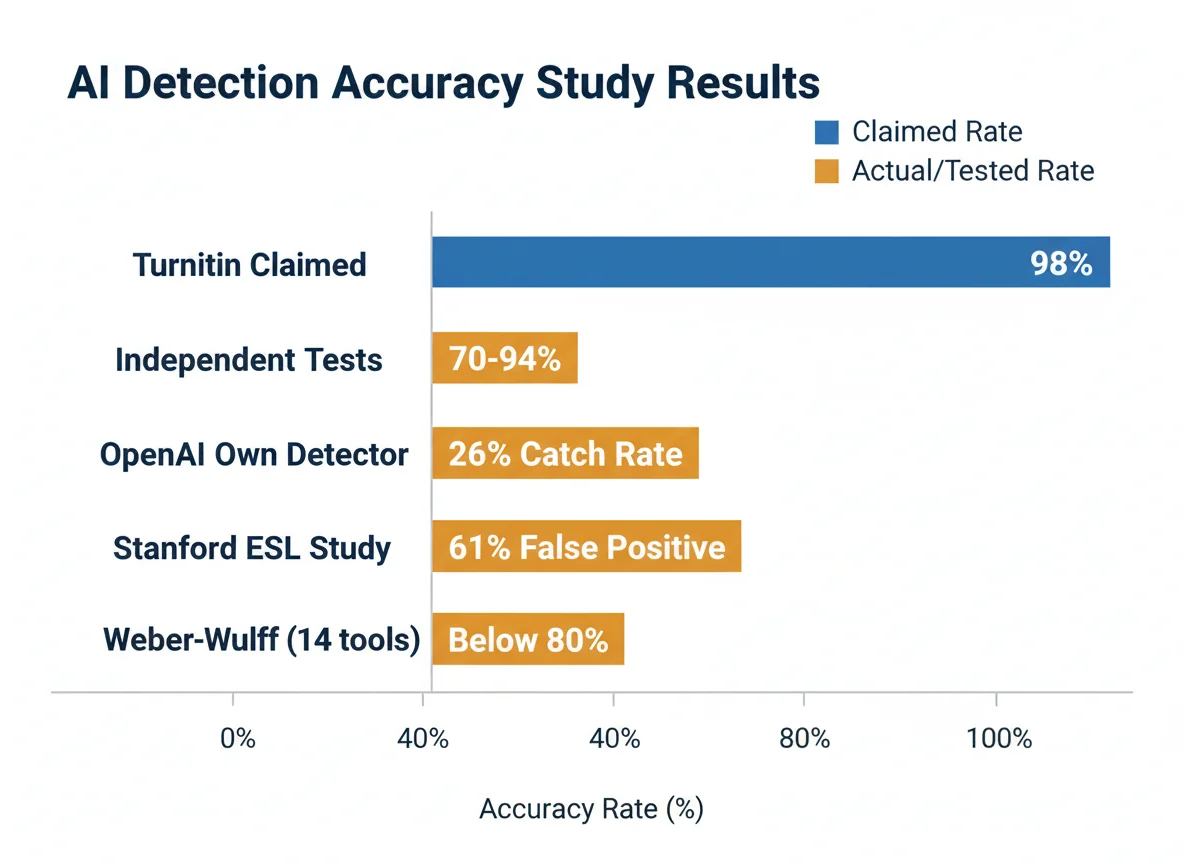

Three findings form the scientific backbone of these lawsuits: Weber-Wulff et al. (2023), which found that no tested tool exceeded 80% accuracy; the Stanford ESL bias study (Liang et al., 2023), which found a 61.3% false positive rate on essays by non-native speakers; and OpenAI's own admission that its classifier caught only 26% of AI-written text before the company shut it down.

| STUDY | KEY FINDING | YEAR |
|---|---|---|
| Weber-Wulff et al. | 14 tools tested, none >80% accurate | 2023 |
| Liang et al. (Stanford) | 61.3% false positive rate on ESL essays | 2023 |
| OpenAI Classifier | 26% catch rate, 9% false positive; shut down | 2023 |
| Turnitin self-report | 98% accuracy claim, <1% false positive claim | 2023 |
| Independent testing (2026) | Real-world accuracy 70–94% depending on tool | 2026 |
| Stanford ESL detail | 97.8% of TOEFL essays flagged by ≥1 detector | 2023 |
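Catch rates and false positive rates like those in the table do not directly say how often a flagged essay is actually AI-written, which is the question a disciplinary hearing has to answer. That share (the positive predictive value) also depends on how common AI-written work is in the first place. The minimal Python sketch below runs that base-rate calculation using the OpenAI classifier's published figures from the table (26% of AI text caught, 9% of human text mislabeled). The 1,000-essay cohort, the assumed 10% share of AI-written essays, and the helper name `flagged_breakdown` are hypothetical, chosen only for illustration.

```python
def flagged_breakdown(n_essays, prevalence, true_positive_rate, false_positive_rate):
    """Split a hypothetical cohort's detector flags into true and false flags.

    Returns (true_flags, false_flags, precision), where precision is the
    share of flagged essays that really are AI-written.
    """
    ai_written = n_essays * prevalence
    human_written = n_essays - ai_written
    true_flags = ai_written * true_positive_rate       # AI essays correctly flagged
    false_flags = human_written * false_positive_rate  # human essays wrongly flagged
    precision = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, precision


# Rates from the OpenAI classifier row of the table; the cohort size and
# 10% prevalence are hypothetical assumptions, not reported data.
tp, fp, precision = flagged_breakdown(1000, 0.10, 0.26, 0.09)
print(f"true flags: {tp:.0f}, false flags: {fp:.0f}, precision: {precision:.1%}")
```

Under these assumptions the classifier flags about 107 essays, only 26 of which are AI-written, so roughly three in four flags would land on human writers. A different assumed prevalence gives a different answer, which is one reason a single detector score is weak evidence on its own.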

The Liang study is particularly relevant to the Yale and Minnesota cases. Thierry Rignol, the Yale plaintiff, is a French national whose lawsuit specifically argues that GPTZero is biased against non-native English speakers. Haishan Yang, the Minnesota plaintiff, is a Chinese international student who made similar claims. Both cited the Stanford findings. The technical explanation centers on perplexity, a measure of how predictable a text is to a language model, which many detectors use to score writing. Non-native speakers tend to use more predictable sentence structures and simpler vocabulary, which detectors can interpret as machine-like patterns. The same mechanism helps explain why Turnitin may be detecting writing patterns rather than actual AI use. In the Rignol case, the plaintiff even ran text written by Yale President Peter Salovey through GPTZero and showed that it was flagged as AI-generated, undermining the tool's reliability as evidence.
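To make the perplexity mechanism concrete, here is a minimal Python sketch. Perplexity is the exponential of the average negative log-probability a language model assigns to each token; lower values mean more predictable text. The per-token probabilities and the cutoff of 20 are invented for illustration: commercial detectors such as GPTZero and Turnitin do not publish their scoring in this form, and `naive_flag` is a hypothetical helper, not any vendor's method.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp(mean negative log-probability per token)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)


# Hypothetical cutoff; real detectors combine many signals and do not
# expose a single published threshold like this one.
THRESHOLD = 20.0


def naive_flag(token_probs, threshold=THRESHOLD):
    """Flag text as 'AI-like' when it is more predictable than the cutoff."""
    return perplexity(token_probs) < threshold


# Invented per-token probabilities a language model might assign.
plain_predictable = [0.30, 0.25, 0.40, 0.20, 0.35, 0.30]  # simple, common phrasing
varied_idiomatic = [0.05, 0.02, 0.10, 0.01, 0.08, 0.03]   # rarer word choices

for name, probs in [("plain", plain_predictable), ("varied", varied_idiomatic)]:
    print(name, round(perplexity(probs), 1), naive_flag(probs))
```

The plainer sequence scores a perplexity of about 3.4 and is flagged, while the sequence with rarer word choices scores about 27 and is not. Nothing in the calculation knows who wrote the text; it only measures predictability, which is why simple, conventional prose from a human writer can fall on the wrong side of the line.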

4 AI Misconduct Cases Rose Nearly Fivefold in Two Years

Universities reported a roughly 370% increase (nearly fivefold) in AI-related academic misconduct cases between 2022–23 and 2024–25, with rates rising from 1.6 to 7.5 students per 1,000. UK institutions logged nearly 7,000 AI cheating cases in 2023–24 alone, roughly triple the previous year's figure.

| METRIC | VALUE | SOURCE |
|---|---|---|
| AI misconduct rate (2022–23) | 1.6 per 1,000 students | Turnitin data |
| AI misconduct rate (2024–25) | 7.5 per 1,000 students | Turnitin data |
| UK AI cheating cases (2023–24) | ~7,000 | UK university data |
| Teacher detection tool adoption | 68% | 2024–25 survey |
| AI-related % of all misconduct | 60–64% | Global institutions, 2025 |
| Universities dropping detectors | 40+ | Multiple sources |
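The headline percentage can be checked directly from the two misconduct-rate rows in the table above. This short Python snippet computes the relative increase and the fold change; the function name `percent_increase` is illustrative only.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative change from old to new, expressed as a percentage."""
    return (new - old) / old * 100


# AI misconduct rates per 1,000 students, from the table above.
rate_2022_23 = 1.6
rate_2024_25 = 7.5

pct = percent_increase(rate_2022_23, rate_2024_25)
fold = rate_2024_25 / rate_2022_23
print(f"increase: {pct:.0f}%, fold change: {fold:.1f}x")
```

The result is an increase of about 369%, a factor of roughly 4.7 over two academic years.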

The surge in misconduct cases is happening simultaneously with a divergence in institutional strategy. Some universities are doubling down on detection tools despite the research, while others are abandoning them. The list of universities that have banned AI detectors continues to grow, including institutions like the University of Waterloo, which discontinued Turnitin's AI detection after it flagged human text as "100% generated by AI." Meanwhile, MIT's official guidance states that AI detection tools should not be used as the sole basis for academic misconduct charges. This institutional split creates a patchwork where students at one university face expulsion for the same work that would go unquestioned at another — a situation the lawsuits are increasingly forcing courts to address.

5 The Financial Cost of Fighting a False AI Accusation

Students and families face substantial financial burdens when challenging AI detection accusations. The Newby family spent six figures in legal fees. Haishan Yang is seeking $1.335 million in combined damages. University spending on the detection tools themselves runs $2,768 to $110,400 per year per institution.

| COST CATEGORY | AMOUNT | SOURCE |
|---|---|---|
| Newby family legal fees | Six figures ($100K+) | Inside Higher Ed |
| Yang federal lawsuit damages | $575,000 | Court filing |
| Yang defamation lawsuit damages | $760,000 | Court filing |
| University AI detection spend (range) | $2,768–$110,400/yr | GradPilot |
| CSU system Turnitin AI add-on | $163,000 extra (2025) | CalMatters |
| California institutions total | $15M+ on Turnitin | CalMatters/Markup |

The financial asymmetry is stark. Universities spend anywhere from a few thousand to more than $100,000 a year on detection tools, while students who challenge a false result may spend six figures defending themselves in court. Yang's case illustrates the compounding costs: after being expelled from his PhD program, he lost his student visa and may be unable to re-enter the United States. The stakes extend far beyond grades; the anxiety and stress of an AI detection accusation can have lasting effects on students' mental health and career trajectories. For international students in particular, a false AI accusation threatens not just a grade but their legal right to remain in the country.

6 What Courts Have Decided So Far

Courts are producing mixed results. The Adelphi ruling favored the student, finding the AI plagiarism charge "without merit." But judges have deferred to institutional procedures in the Minnesota, Yale, and Hingham cases, suggesting that institutions with documented processes may withstand legal challenges even when the underlying detection technology is flawed.

| CASE | COURT ACTION | KEY LEGAL REASONING |
|---|---|---|
| Newby v. Adelphi | Ruled for student | Plagiarism finding "without merit" |
| Doe v. Yale | Injunction denied (May 2025) | Court deferred to internal process |
| Yang v. U of Minnesota | Dismissed without prejudice | University followed its procedures |
| Harris v. Hingham | Injunction denied (Nov 2024) | School "reasonably concluded" violation |
| Doe v. U of Michigan | Filed early 2026 | Claims disability discrimination, due process |

The early legal pattern suggests two tracks. Where a university failed to follow its own procedures or relied on an unreliable tool as the sole evidence (Adelphi), the court sided with the student. Where institutions documented a formal process with notice, hearings, and appellate review (Minnesota), courts have been more deferential, even when the detection methodology itself was questionable. For students, this makes it critical to address AI scores proactively and to gather evidence of authorship that can clear a false flag. The Michigan case introduces a new dimension: disability discrimination. "Jane Doe" argues that her generalized anxiety disorder and obsessive-compulsive disorder produce a more formal writing style that is mistaken for AI-generated text. If she succeeds, the case could establish that AI detection has a disparate impact on students with disabilities, a claim plaintiffs may support with evidence that ordinary writing habits can trigger AI flags.

Methodology

This article compiles data from federal court filings, peer-reviewed research, and investigative journalism. All lawsuit details are sourced from published court documents and verified reporting from outlets including Inside Higher Ed, Yale Daily News, MPR News, Poets&Quants, and CBS News.

- Sources consulted: 25+ across court filings, academic studies, and news reporting

- Data range: September 2024 – April 2026

- Last verified: April 14, 2026

- Update schedule: Updated as new cases are filed or existing cases are resolved

- Limitations: Some lawsuits are filed under pseudonyms; settlement terms may not be public. This database covers known federal cases and may not include state-level or unreported disputes.

Frequently Asked Questions

Can you sue a university for a false AI detection accusation?

Yes. Multiple students have filed federal lawsuits against universities over AI detection accusations, citing due process violations and discrimination. The Adelphi University case (Newby, 2026) resulted in a federal judge ruling the plagiarism finding "without merit." Common legal claims include violation of the 14th Amendment's Due Process Clause (which applies to public institutions), breach of contract, and discrimination under Title VI. Consult an education attorney for case-specific advice.

How much does it cost to fight an AI detection accusation in court?

Legal costs are substantial. The Newby family reported spending six figures in legal fees to fight their son's false AI plagiarism charge at Adelphi University. Haishan Yang is seeking over $1.3 million in combined damages across two lawsuits against the University of Minnesota. Before pursuing litigation, students should exhaust internal appeals and document their authorship, for example with drafts and version history.

Are AI detectors accurate enough for academic discipline?

Research suggests they are not reliable enough to serve as sole evidence. Weber-Wulff et al. (2023) tested 14 detection tools and none exceeded 80% accuracy. The Stanford study by Liang et al. (2023) found that detectors falsely flagged 61.3% of essays by non-native English speakers. OpenAI shut down its own classifier after it caught only 26% of AI text while producing a 9% false positive rate.

What legal arguments do students use in AI detection lawsuits?

Common claims include due process violations (inadequate notice, denial of evidence access, biased hearings), discrimination against non-native English speakers under Title VI, breach of contract (university failing to follow its own policies), and defamation. The Yale case specifically argued that GPTZero use violated the university's own policies against unreliable detection tools.

Which universities have been sued over AI detection?

As of April 2026, federal lawsuits have been filed against Adelphi University (resolved in student's favor), Yale University (pending), University of Michigan (pending), and the University of Minnesota (dismissed without prejudice). A separate case was filed against the Hingham, Massachusetts school district. More cases are expected as AI misconduct charges continue rising.

Has any student won an AI detection lawsuit?

Yes. In February 2026, Orion Newby won his case against Adelphi University when a federal judge ruled the AI plagiarism finding was "without merit." The charge was removed from his record. Former U.S. attorney Andrew Lelling called it a "groundbreaking" decision for student due process rights. Students can learn from his approach of using document version history as proof of original authorship.

Sources & References

- Inside Higher Ed. "Adelphi Student Wins AI Plagiarism Lawsuit." insidehighered.com. Accessed April 14, 2026.

- CBS News. "Orion Newby sues over AI plagiarism accusation and wins." cbsnews.com. Accessed April 14, 2026.

- Yale Daily News. "SOM student sues Yale, alleges wrongful suspension over AI use." yaledailynews.com. Accessed April 14, 2026.

- Poets&Quants. "Judge Denies Injunction In Yale Student's AI-Linked Suspension." poetsandquants.com. Accessed April 14, 2026.

- MPR News. "'A death penalty': Ph.D. student says U of M expelled him over unfair AI allegation." mprnews.org. Accessed April 14, 2026.

- Plagiarism Today. "Student Sues University of Michigan Over AI Allegations." plagiarismtoday.com. Accessed April 14, 2026.

- Weber-Wulff, D., et al. "Testing of detection tools for AI-generated text." International Journal for Educational Integrity, 2023. springer.com.

- Liang, W., et al. "GPT detectors are biased against non-native English writers." Patterns, 2023. pmc.ncbi.nlm.nih.gov.

- CalMatters. "Turnitin helps colleges detect AI writing — but its cost is more than just a price tag." calmatters.org. Accessed April 14, 2026.

- Crowell & Moring. "Ivy League Lawsuit Centers on Alleged Impermissible Use of AI in Academia." crowell.com. Accessed April 14, 2026.

- Fisher Phillips. "Court Backs School in AI Cheating Case." fisherphillips.com. Accessed April 14, 2026.

- GradPilot. "What Universities Spend on AI Detection." gradpilot.com. Accessed April 14, 2026.

Last updated: April 14, 2026