The AI Humanizer Industry: How a $2.2 Billion Arms Race Emerged (2026)

1. How Big Is the AI Humanizer Market?

Understanding the AI humanizer industry requires stepping back to view the larger markets it inhabits. AI humanizer tools exist primarily to evade detection systems, so the humanizer market is shaped by the size and growth trajectory of the detection market.

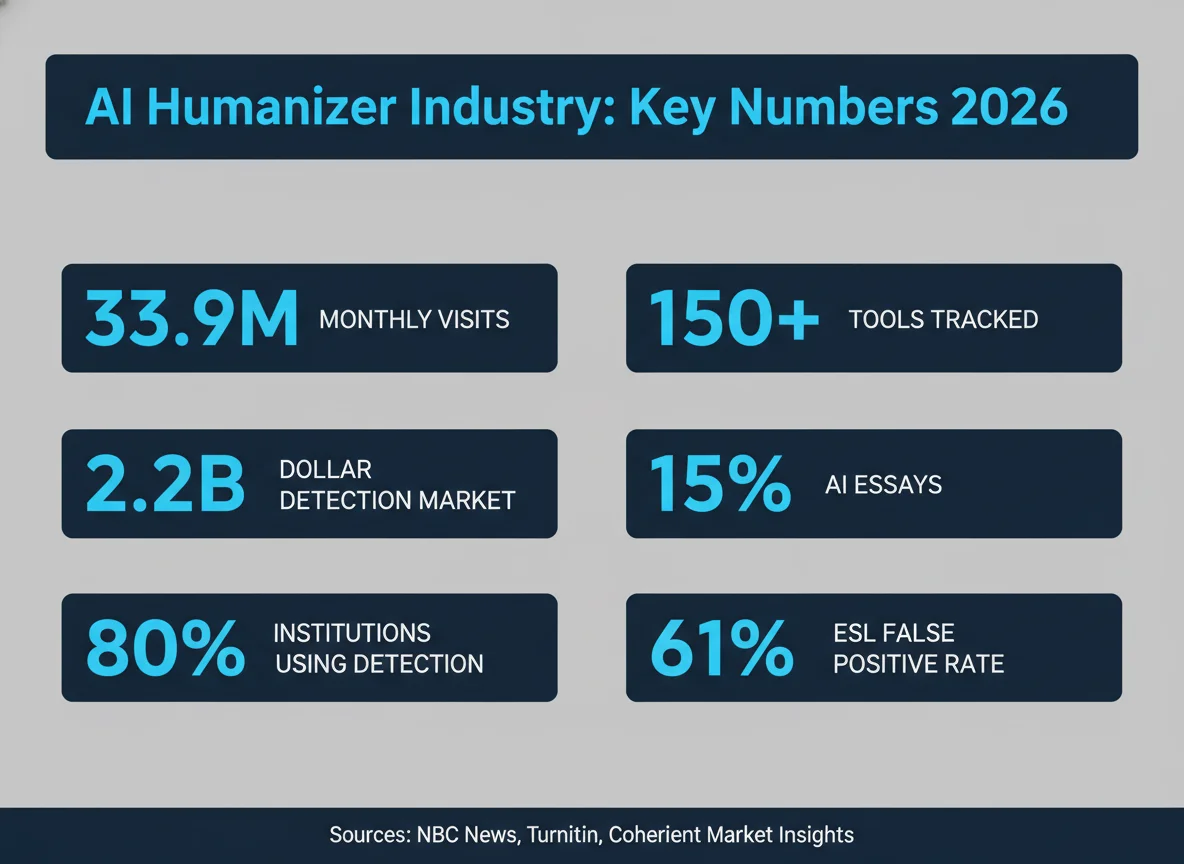

According to Coherent Market Insights data cross-verified by NovaOne Advisor and MarketsandMarkets, the AI content detection software market reached $2.20 billion in 2026. The market is accelerating rather than slowing: projections put it at $8.56 billion by 2033, a 21.6% compound annual growth rate. This growth is driven by widespread institutional adoption of detection tools across education, publishing, and enterprise sectors.

The upstream AI writing tool market—which humanizers directly address—follows a similar expansion trajectory. The AI writing tool market was valued at $392 million in 2022 and is projected to reach $1.4 billion by 2030, a 17.2% CAGR. Every new user in the writing tool market represents a potential customer for humanization services.
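The growth rates quoted above can be sanity-checked from their endpoints. The following is a minimal sketch using the standard compound annual growth rate formula; the function name `cagr` is ours, and the year spans (2026 to 2033, 2022 to 2030) are assumed from the dates given in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.20 means 20% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the article, in billions of USD.
detection = cagr(2.20, 8.56, 2033 - 2026)
writing_tools = cagr(0.392, 1.4, 2030 - 2022)

print(f"Detection software: {detection:.1%} per year")
print(f"AI writing tools:   {writing_tools:.1%} per year")
```

Under these assumptions the writing-tool figure matches the quoted 17.2%, while the detection figure comes out near 21.4%, slightly below the quoted 21.6%. A gap of that size could come from rounding or from a different base year in the underlying report.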

| Market Segment | Size or Share (Year) | Projection | CAGR |
|---|---|---|---|
| AI Detection Software | $2.20B (2026) | $8.56B (2033) | 21.6% |
| AI Writing Tools | $392M (2022) | $1.4B (2030) | 17.2% |
| Academic Integrity (Detection) | 36.6% of market (2026) | Core growth driver | n/a |
| North American Detection Market | 43.4% of global (2026) | Largest single region | n/a |

The academic integrity segment accounts for 36.6% of the AI content detection market in 2026, making universities the primary adoption driver. North America accounts for 43.4% of the global AI content detection market, which makes it the center of both detection tool development and humanizer innovation.

The AI content detection market’s valuation reflects the urgency institutions feel around AI-generated content. With over 80% of four-year US institutions now active on Turnitin’s AI detection module, institutional adoption goes a long way toward explaining the market’s multi-billion-dollar size.

2. 150+ Humanizer Tools and Counting

The sheer proliferation of humanizer tools underscores the market’s explosive growth. According to NBC News reporting on data from Turnitin, the detection vendor has catalogued over 150 AI humanizer tools, with pricing tiers ranging from free to $50 per month. This fragmentation reflects both the low barrier to entry for tool development and the persistent demand from users seeking to bypass detection.

Traffic data paints a striking picture: in October 2025, just 43 of the tracked humanizer tools received a combined 33.9 million website visits in a single month. This data, sourced from NBC News citing Joseph Thibault (Cursive founder), demonstrates that humanizer tools have achieved mainstream awareness and adoption at a scale comparable to many commercial SaaS platforms.

Pricing analysis from TheHumanizeAI.pro and multiple sources reveals that most humanizer tools cluster in the $8–$15 monthly range, with premium options like QuillBot Premium at $19.95/month. This pricing strategy makes humanizer subscriptions competitive with coffee subscriptions, lowering perceived risk for casual users while generating recurring revenue for tool operators.

To understand the competitive landscape, we’ve reviewed the major vendors in depth. Tools like WriteHuman, Undetectable AI, QuillBot, and HIX Bypass represent the mid-tier players with significant user bases. StealthGPT and BypassGPT target users seeking stealth-focused positioning, while TwainGPT and Phrasly focus on specific use cases like content marketing and academic writing.

The low-price entry point has been crucial to rapid adoption. Most users first try free or freemium versions before converting to paid tiers. This funnel approach explains how 43 tools managed to accumulate 33.9 million visits in a single month—they’ve effectively democratized the humanizer experience.

3. Do AI Humanizers Actually Work?

The central question facing institutions and educators is straightforward: Do humanizer tools actually work? The answer is more nuanced than vendors’ marketing suggests.

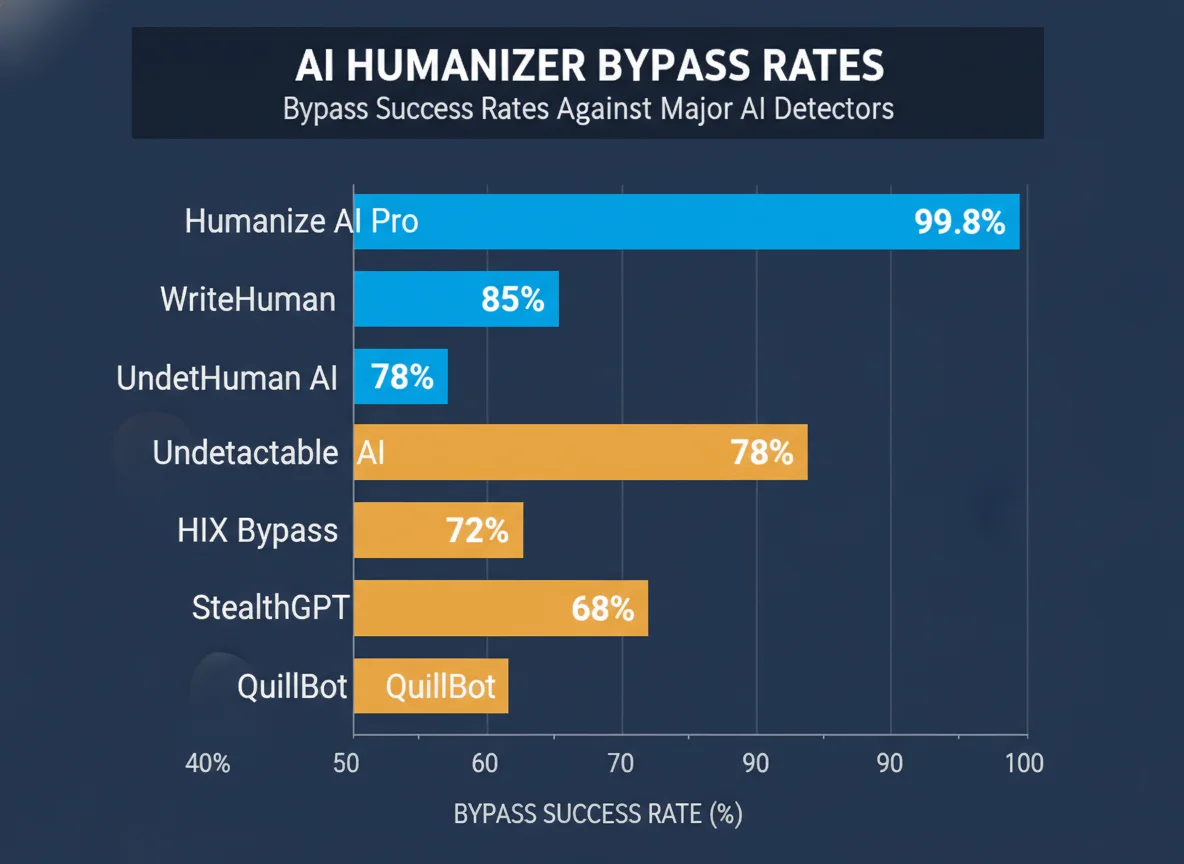

According to TheHumanizeAI.pro independent comparison (note: this is a vendor blog with inherent bias), bypass rates across major tools ranged from a low of 42% (QuillBot) to a high of 99.8% (Humanize AI Pro). Critically, only 3 tools exceeded 75% bypass success rates, meaning most humanizers fail to reliably evade detection on the first pass.

The more alarming finding comes from real-world testing by TextShift.blog: no major AI detector consistently identified AI text after three passes through a quality humanizer. Specifically, GPTZero’s detection rate fell to approximately 18% on humanized content, a dramatic decline from its typical 95%+ accuracy on raw AI output. This suggests an asymmetry in the arms race: detecting raw AI output is hard, but detecting that same text after humanization is much harder.

A 2026 study from aidetectors.io added another data point: Claude 3.5 Sonnet outputs were the hardest to detect, with 24% of outputs evading all five detectors tested. By comparison, ChatGPT content evaded only 4% across the same test suite. This finding suggests that humanizer effectiveness depends heavily on which AI model generated the original content—Claude may require less post-processing to evade detection than ChatGPT.

The practical implication for institutions is sobering. Detection tools marketed as “bulletproof” rarely are, and even well-tested detectors struggle with humanized content. This is why normal writing habits can trigger AI flags while humanized AI content often slips through. Understanding this asymmetry is crucial for educators defending students accused of AI use, and it is why teachers need to understand what Turnitin AI percentages actually represent.

When GPTZero—one of the industry’s most widely deployed detectors—was tested on humanized AI content, its accuracy plummeted from 95%+ to 18%. This finding suggests that institutions relying solely on a single detector are exposed to significant false-negative risk. The arms race favors the humanizers on round two.

4. The Detection Side: Who’s Buying and How Much?

The institutional adoption of AI detection tools has been swift and near-universal in the US higher education sector. According to GradPilot’s investigation, cross-verified by Turnitin’s official institutional count, adoption rates have surged dramatically in just two years:

In 2023, only 38% of four-year US institutions had activated Turnitin’s AI detection module. By 2024, that figure had jumped to 68%. By fall 2025, over 80% of four-year institutions had activated AI detection: a gain of at least 42 percentage points, or roughly a 110% relative increase, in just two years. This rapid rollout reflects institutional panic in response to increased AI assignment submissions and media coverage of the humanizer threat.
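The adoption figures above can be summarized in two ways that are easy to confuse: a change in percentage points and a relative change. The short sketch below shows both, treating the "over 80%" figure as exactly 80% for illustration; the helper name `adoption_change` is ours.

```python
def adoption_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change as a fraction)."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct
    return point_change, relative_change


# 2023 and fall 2025 adoption rates quoted in the article (80% used as a floor).
points, relative = adoption_change(38.0, 80.0)
print(f"{points:.0f} percentage points, {relative:.1%} relative increase")
```

The distinction matters when comparing rollouts: the same 42-point gain would be a much smaller relative change for a technology that started at 50% adoption.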

Turnitin’s scale is staggering. The company serves 16,000+ institutions globally, processes more than 200 million papers annually, and reaches approximately 71 million students across all geographies. With this scale, Turnitin functions as a de facto industry standard, making its pricing and detection capabilities critical infrastructure for global higher education.

However, institutional spending on Turnitin reveals stark inequality. California State University, a massive multi-campus system, spent $1.1 million on Turnitin in 2025 alone, with cumulative spending of more than $6 million since 2019. Yet per-student pricing varies widely by institution, from $1.79 per student at CUNY to $6.50 per student at UC Irvine. The higher price is roughly 3.6 times the lower one, or about 263% more. This discrepancy suggests that Turnitin’s negotiating power varies based on system size and available alternatives.

Understanding these cost structures is critical for stakeholders. When students are flagged for AI on Turnitin, or when institutions weigh how AI detection differs from similarity checking, the financial stakes become clear. Institutions have invested heavily in these systems and are unlikely to abandon them despite criticism.

| Metric | 2023 | 2024 | 2025 | Source |
|---|---|---|---|---|
| 4-Year Institution Adoption | 38% | 68% | 80%+ | GradPilot / Turnitin |
| CSU Annual Spend | n/a | n/a | $1.1M | GradPilot |
| CSU Cumulative (2019–2025) | n/a | n/a | $6M+ | GradPilot |
| Per-Student Min Price | n/a | n/a | $1.79 | GradPilot (CUNY) |
| Per-Student Max Price | n/a | n/a | $6.50 | GradPilot (UC Irvine) |
| Academic Integrity Market Share | n/a | n/a | 36.6% | Coherent Market Insights |

The academic integrity segment comprises 36.6% of the broader AI content detection market, confirming that educational use cases dominate vendor strategy and pricing. Turnitin’s near-monopoly in this segment means that anti-humanizer capabilities become a critical selling point for institutional renewals.

The speed with which institutions activated AI detection—from 38% to 80% in just two years—represents one of the fastest technology rollouts in higher education history. This urgency suggests institutional leadership viewed AI assignment submissions as an existential threat requiring immediate response, regardless of detection accuracy debates.

5. The Collateral Damage: False Positives and Student Backlash

The rapid adoption of AI detection has created a parallel crisis: false positives disproportionately targeting vulnerable student populations. The most damning evidence comes from a Stanford study showing that detectors flag 61% of essays by non-native English speakers as AI-generated when the essays are completely human-written. This finding exposes a fundamental algorithmic bias: detection systems trained primarily on native English speaker writing patterns fail catastrophically on legitimate ESL work.

The implications are severe. The ESL bias in AI detection means that international students and students from non-English-speaking backgrounds face disproportionate false-positive accusations. Combined with the reality that common writing tools like Grammarly can trigger AI flags, many students find themselves accused despite legitimate authorship.

Student backlash has been significant and organized. At University at Buffalo, 1,500+ students signed a petition calling for the removal of AI detection software entirely. Their complaint: innocent students were being flagged for AI use, with limited appeal mechanisms. This student activism reflects broader frustration with detection tool accuracy and institutional over-reliance on imperfect systems.

In response, 12 elite universities have since disabled Turnitin’s AI detection feature, according to GradPilot’s investigation. These institutions—likely responding to student pressure, faculty concerns, and recognition of false-positive problems—have essentially ceded this battleground. They’ve concluded that the reputational and student satisfaction costs of AI detection exceed the benefits of catching cheaters.

The alternative is equally troubling: 15% of essay submissions now contain >80% AI-generated content as of late 2025, up from just 3% in April 2023 when Turnitin first launched AI detection. This five-fold increase suggests that students have adapted to the detection threat through humanization and other bypass techniques, while institutions struggle to distinguish legitimate AI use from cheating.

One notable case study: universities that banned AI detectors have had to develop alternative academic integrity frameworks, often relying on process evidence, version history from Google Docs or Microsoft Word as proof of authorship, and instructor judgment rather than algorithmic flags. This shift marks a fundamental retreat from automated enforcement toward human-in-the-loop oversight.

The Stanford finding that detectors flag 61% of legitimate ESL essays as AI-generated represents a systemic failure of current detection technology. This bias disproportionately harms non-native English speakers and students from underrepresented backgrounds—the exact populations universities claim to support. The false-positive crisis has become one of the strongest arguments against relying on AI detection as a standalone enforcement mechanism.
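To see why a high false-positive rate undermines standalone enforcement, it helps to ask how often a flag is actually correct. The sketch below applies Bayes’ rule to figures quoted in this article, but it combines numbers from separate sources that were never measured together, so treat it as an illustration only. The assumptions are: 15% of submissions are heavily AI-written (Turnitin’s late-2025 figure, not specific to ESL students), detectors catch 95% of raw AI text (the GPTZero figure, used here as a true-positive rate), and 61% of human-written ESL essays are falsely flagged (the Stanford figure).

```python
def positive_predictive_value(prevalence: float,
                              true_positive_rate: float,
                              false_positive_rate: float) -> float:
    """Probability that a flagged essay is actually AI-written (Bayes' rule)."""
    flagged_ai = prevalence * true_positive_rate
    flagged_human = (1 - prevalence) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no essays would be flagged with these inputs")
    return flagged_ai / total_flagged


# Illustrative inputs only: drawn from different studies quoted in the article.
ppv = positive_predictive_value(
    prevalence=0.15,           # assumed share of heavily AI-written essays
    true_positive_rate=0.95,   # assumed detection rate on raw AI text
    false_positive_rate=0.61,  # Stanford false-positive rate on ESL essays
)
print(f"Share of flagged ESL essays that are actually AI-written: {ppv:.0%}")
```

Under these combined assumptions, only about one in five flagged essays from non-native speakers would be AI-written. The exact number is not meaningful, but the direction is: when the false-positive rate is this high, most flags in that population would land on human-written work.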

6. What Happens Next? The 2026-2030 Outlook

Projecting forward from 2026 is necessarily speculative, but the trajectory is clear: the detection-versus-humanization arms race will intensify, driving significant spending in both directions. The AI content detection market is projected to reach $8.56 billion by 2033—a 3.9x expansion from the current $2.2 billion market. This growth will inevitably attract new detection vendors and incentivize existing players to develop more sophisticated humanizer-detection capabilities.

However, the market is already showing signs of bifurcation. Institutions are diverging into two camps: those doubling down on technological enforcement, and those retreating to instructor-led academic integrity frameworks. The 12 universities that disabled AI detection represent the second camp—an implicit admission that detection technology’s false-positive rate and reputational costs exceed its cheating-deterrence value.

Platform switching is another trend to watch. In August 2023, the Utah Education Network shifted approximately 800,000 students from Turnitin to Copyleaks as a primary detection platform. This migration, reported by GradPilot, signals that institutions are diversifying detection vendors and reducing dependency on a single player. A Copyleaks-dominant ecosystem might behave differently than a Turnitin-dominated one, particularly around pricing, detection algorithm design, and vendor openness to appeals.

For students and institutions seeking clarity on this evolving landscape, critical resources include understanding StealthWriter and other humanizer alternatives, recognizing why Turnitin flags AI when other detectors don’t, and preparing for scenarios where institutions must respond to AI use accusations with robust authorship defenses.

The long-term equilibrium is uncertain. Will detectors eventually win through superior model training? Will humanizers maintain their edge by staying one step ahead of detection improvements? Or will institutions ultimately decide that the detection-humanization arms race is unwinnable and shift to process-based academic integrity frameworks? The answers will shape higher education’s relationship with AI for the next decade.

Interactive: AI Detection Cost Calculator

Estimate Your Institution’s Annual AI Detection Spending

Note: This calculator uses actual per-student pricing from institutional investigations by GradPilot. Actual costs may vary based on institutional negotiating power, contract terms, and volume discounts. Multi-year commitments often provide better per-student rates.
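For readers without access to the interactive version, the same kind of estimate can be approximated in a few lines. This is a rough sketch and not the page’s original calculator: it simply multiplies enrollment by the lowest and highest per-student prices reported above, and the enrollment of 20,000 is a hypothetical input.

```python
# Per-student annual prices reported in the article (GradPilot figures).
LOW_PRICE_PER_STUDENT = 1.79   # CUNY
HIGH_PRICE_PER_STUDENT = 6.50  # UC Irvine


def estimate_annual_spend(students: int) -> tuple[float, float]:
    """Return a (low, high) annual cost range in USD for a given enrollment."""
    if students < 0:
        raise ValueError("students must be non-negative")
    return (students * LOW_PRICE_PER_STUDENT,
            students * HIGH_PRICE_PER_STUDENT)


low, high = estimate_annual_spend(20_000)  # hypothetical enrollment
print(f"Estimated annual spend: ${low:,.0f} to ${high:,.0f}")
```

The width of the resulting range reflects the pricing spread discussed earlier; an actual contract may fall outside it depending on negotiated terms.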