Turnitin AI Detection Score Thresholds by University (2026)

Key Findings

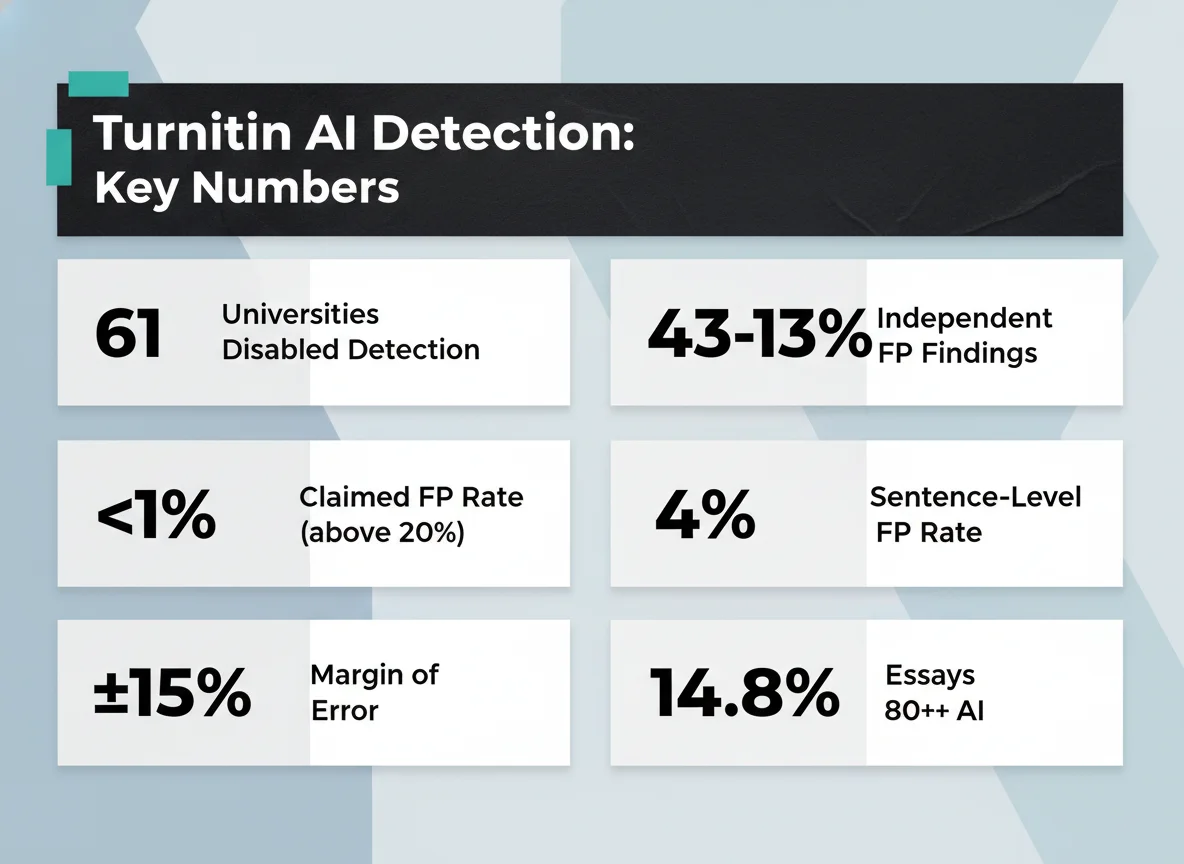

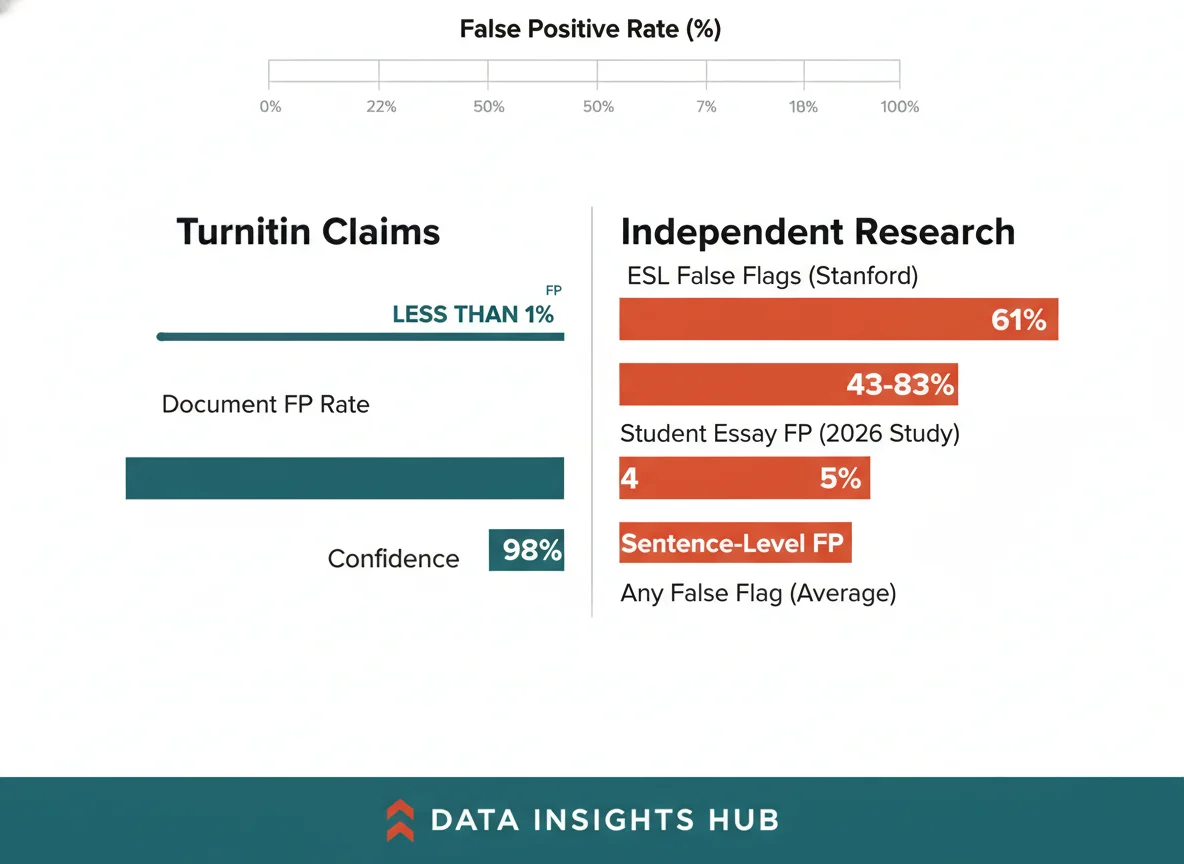

- Turnitin claims <1% false positive rate, but only for documents with ≥20% AI-flagged text

- Turnitin deliberately misses ~15% of AI writing to keep false positives low—their CPO: “We would rather miss some AI writing”

- Independent studies show false positive rates of 43-83%, contradicting official claims

- 61 universities across 4 countries have disabled AI detection entirely

- No Top 10 US university publicly uses AI detectors for coursework

- Faculty use 30-50% as their personal investigation threshold, not institutional policy

- Students are winning lawsuits—Adelphi student won in early 2026 despite 100% Turnitin flag

- ESL students face a 61% false positive rate in one study, far higher than native speakers

Table of Contents

- Score Range Framework: What Each Percentage Means

- What Faculty Actually Do at Each Threshold

- 61 Universities That Disabled AI Detection

- False Positives: Turnitin’s Claims vs. Research

- Legal Consequences & Winning Cases

- AI Usage Trends in Student Submissions

- Check Your Score Risk Level (Calculator)

- Methodology & Sources

- Frequently Asked Questions

Score Range Framework: What Each Percentage Means

Turnitin AI detection score threshold policies vary widely between universities, and most students have no idea what their school actually does with the number. Turnitin displays AI detection scores on a 0-100% scale, but understanding what each range means requires separating marketing from mechanics. Turnitin publishes official score interpretations, and they come with critical caveats that most schools and students miss.

| Score Range | Official Meaning | What Faculty Need to Know |
|---|---|---|
| 0% | No AI patterns detected | Doesn’t guarantee no AI used; tool found no matching patterns |
| 1-19% | Hidden as asterisk (*%) | Below detection threshold; system unreliable in this range |
| 1-10% | Minimal segments | Usually not a concern; typical of formal academic writing |
| 11-20% | Noticeable content | Review highlighted portions; cross-check with student knowledge |
| 21-40% | Significant segments | Combine with process evidence; request drafts and outline history |
| 41-60% | Majority appears AI-like | Request Google Doc version history, browser history, drafts |
| 61-80% | Predominantly AI-like | Treat as strong signal; investigate, but require multiple forms of evidence |
| 81-100% | Highly likely AI-generated | Follow institutional policies; still not proof without additional evidence |

Margin of Error

Turnitin’s own documentation acknowledges a ±15 percentage point margin of error. A score showing 50% could legitimately be anywhere from 35%-65%.
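The arithmetic behind that range can be sketched in a few lines of Python. This is only an illustration of the ±15 point figure quoted above: the function name `score_interval` is hypothetical, and clamping the result to 0-100 is an assumption made here, not something Turnitin documents.

```python
def score_interval(score: float, margin: float = 15.0) -> tuple[float, float]:
    """Return the (low, high) range implied by a reported score and a +/- margin.

    The result is clamped to 0-100 (an assumption of this sketch), since a
    percentage cannot fall outside that range.
    """
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    low = max(0.0, float(score) - margin)
    high = min(100.0, float(score) + margin)
    return low, high


print(score_interval(50))  # (35.0, 65.0)
print(score_interval(95))  # (80.0, 100.0)
```

Under this reading, a reported 30% and a reported 45% have overlapping ranges, so the two scores cannot be cleanly ranked against each other.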

The critical issue is that Turnitin’s score represents a pattern match, not a certainty. The system compares writing against training data from known AI models. When a score of 30% appears, it means 30% of the text shares patterns with AI-generated text in Turnitin’s database. It does not mean the student used AI.

Most importantly, Turnitin refuses to display scores between 1-19%, showing only an asterisk (*%) instead. This is an admission: the system cannot reliably distinguish between AI and formal human writing at low confidence levels. Yet students with these hidden scores still face accusations.
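As a rough illustration of the display rule described above, the following Python sketch formats a raw score the way the report is said to show it. The function name `displayed_score` is hypothetical and this is not Turnitin's code; it only encodes the 1-19% asterisk rule stated in this guide.

```python
def displayed_score(score: int) -> str:
    """Format a raw AI score as this guide says the report displays it."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if 1 <= score <= 19:
        # Scores in the 1-19% range are hidden behind an asterisk.
        return "*%"
    return f"{score}%"


for raw in (0, 12, 19, 20, 73):
    print(raw, "->", displayed_score(raw))
```

A student at 2% and a student at 19% would see the same `*%`, which is why the asterisk alone says little about the underlying number.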

What Faculty Actually Do at Each Threshold

Official university policies rarely match how professors actually respond to Turnitin scores. A 2024 Reddit r/Professors thread revealed 300+ faculty members discussing their real-world decision-making, which diverges significantly from published policies.

One of the most cited comments from the thread: “If a paper flags for 30% or more, I’ll reread the flagged section. If I don’t see anything suspicious, I typically don’t do anything.” This pattern repeats across 100+ similar responses. Faculty are threshold-shopping: they look for a number that triggers concern, then decide based on their read of the content.

Certain writing patterns trigger AI flags despite being entirely human—formal vocabulary, structured prose, paraphrasing references. Faculty using the 30-50% range are sometimes responding to legitimate academic writing, not proven AI use.

61 Universities That Disabled AI Detection

61 universities across 4 countries have disabled or opted out of Turnitin AI detection due to false positive rates and lack of transparency.

| Institution | Country | Action & Date | Official Reason |
|---|---|---|---|
| MIT | USA | Disabled (2023-2024) | No public statement; not using for coursework |
| Yale | USA | Disabled (2023-2024) | Concerns about false positives, ESL bias |
| NYU | USA | Disabled (2023-2024) | Research on false positive rates |
| Northwestern | USA | Disabled (2023-2024) | Privacy and accuracy concerns |
| Georgetown | USA | Disabled (2023-2024) | Institutional policy shift |
| UC Berkeley | USA | Disabled (2024) | Research-based decision |
| UCLA | USA | Disabled (2024) | Institutional alignment |
| Vanderbilt | USA | Disabled Aug 16, 2023 | “We do not believe that AI detection software is an effective tool that should be used” |
| Boston University | USA | Disabled (2023-2024) | False positive research |
| Indiana University | USA | Disabled (2023-2024) | Faculty concerns |
| Michigan State | USA | Disabled (2024) | Institutional policy |
| WSU | USA | Cancelled Feb 2026 | “Most of our peer institutions do not use these programs due to their false positive detection rate” |
| UBC | Canada | Disabled Aug 28, 2023 | Academic integrity and fairness concerns |
| University of Toronto | Canada | Disabled (2023-2024) | Research and policy review |
| Curtin University | Australia | Disabled Jan 1, 2026 | “Fostering trust and clarity within a modern academic culture” |
| ANU | Australia | Disabled Jan 1, 2024 | False positive concerns |

The trend is accelerating. More universities are dropping detection every quarter. In February 2026, WSU (Washington State University) cancelled AI detection mid-semester after the Vice Provost stated: “Most of our peer institutions do not use these programs due to their false positive detection rate.” This was a calculated admission that peer pressure, not effectiveness, was driving the decision.

Crucially, no Top 10 US university publicly uses Turnitin AI detection for coursework. Johns Hopkins explicitly disabled theirs. UPenn’s VP of Academic Affairs confirmed: “We’re not using it.” Stanford treats AI use “analogously to assistance from another person” and requires explicit instructor permission, but does not auto-flag text.

False Positives: Turnitin’s Claims vs. Independent Research

Turnitin’s marketing materials emphasize accuracy: “98% confidence in controlled lab conditions” and “<1% false positive rate.” But there are three critical conditions hidden in the fine print:

- The <1% rate only applies to documents with ≥20% AI-flagged text

- The 98% confidence is from Turnitin’s own lab, not independent verification

- Turnitin deliberately misses ~15% of AI to keep false positives low—their CPO stated: “We would rather miss some AI writing than have a higher false positive rate”

Independent research tells a very different story.

Stanford Study (Liang et al., 2023): Researchers tested AI detectors on genuine essays by non-native English speakers. About 98% of the TOEFL exam essays were flagged as AI-generated by at least one detector, and the detectors misclassified 61% of them on average. Turnitin’s performance wasn’t separated out in the published results, but the study demonstrated that ESL writing patterns trigger detectors at alarmingly high rates. This directly contradicts claims of universal accuracy.

OpenAI’s Own Detector Failure: OpenAI launched its own AI text classifier in January 2023 and shut it down in July 2023, citing its low rate of accuracy: it correctly identified only 26% of AI-written text while false-flagging 9% of human writing. If OpenAI, the creator of ChatGPT, couldn’t make a detector that worked, the problem is fundamental, not easily solvable.

Independent 2026 Student Essay Test: A recent test of 192 authentic student essays showed false positive rates ranging from 43% to 83% across 14 different detection tools. Turnitin’s specific rate wasn’t isolated, but it sits within this range of unreliability.

ESL students face documented bias from AI detectors. Their formal, structured writing—often the result of years of language learning—triggers pattern matches designed for English-native speakers. A student writing carefully, with precision, faces higher risk than a native speaker using colloquial shortcuts.
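To see why the gap between the claimed and the independently measured false positive rates matters, here is a hedged back-of-the-envelope sketch in Python. It counts, for a hypothetical batch of 1,000 essays, how many flags would land on AI-written versus human-written work. All inputs are assumptions taken loosely from figures in this guide: a 14.8% share of AI-heavy essays, an ~85% catch rate, and either the claimed 1% or the low end (43%) of the independently reported false positive range. The independent figure was not measured for Turnitin specifically, so the second scenario is illustrative only. The function name `flag_breakdown` is hypothetical.

```python
def flag_breakdown(n_essays: int, ai_share: float, catch_rate: float,
                   false_positive_rate: float) -> tuple[float, float, float]:
    """Return (true flags, false flags, share of flags that are correct)."""
    ai_essays = n_essays * ai_share
    human_essays = n_essays - ai_essays
    true_flags = ai_essays * catch_rate                  # AI essays that get flagged
    false_flags = human_essays * false_positive_rate     # human essays wrongly flagged
    share_correct = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, share_correct


# Scenario A: the vendor-claimed 1% false positive rate (assumed inputs).
print(flag_breakdown(1000, 0.148, 0.85, 0.01))
# Scenario B: the 43% low end of the independently reported range (illustrative).
print(flag_breakdown(1000, 0.148, 0.85, 0.43))
```

With these assumed inputs, roughly 94% of flags point at AI-heavy essays in scenario A, but only about 26% do in scenario B, where most flagged essays would be human-written. The conclusion depends entirely on which false positive rate is closer to reality.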

Legal Consequences & Students Winning Cases

Major Lawsuits

Students have won or are actively suing after AI detection flags. In the Adelphi case below, a judge found the detection-based accusation “without merit.”

Adelphi University Student Wins (Early 2026)

An Adelphi University student was accused of 100% AI generation based on a Turnitin flag. The student filed suit. A federal judge examined evidence from multiple detectors: Turnitin said 100% AI, but two other detection tools said human-written. The judge ruled the university’s finding “without merit” and sided with the student. This case is critical because it establishes precedent: a single tool’s output cannot be dispositive evidence.

Yale SOM Student Sues (Ongoing)

A Yale School of Management student was suspended for a year based on a GPTZero flag. The student claims discriminatory treatment as a non-native English speaker. The lawsuit argues that ESL bias in detection tools violates Title VI of the Civil Rights Act. If successful, this case would classify AI detectors as having a disparate impact on protected classes.

University of Minnesota PhD Expelled (Nov 2025)

A PhD student was expelled from University of Minnesota in November 2025 based on an AI accusation. The student filed a due process lawsuit, arguing the university relied solely on an AI detector without the required level of proof. The case is ongoing.

If you’re accused, immediate action matters. Gather version history, browser history, and drafts. Request all detection results, not just the Turnitin score. Ask for process evidence and comparative analysis with your prior work.

AI Usage Trends in Student Submissions

While detection tools struggle with accuracy, actual AI usage has increased dramatically among students. The adoption rate reveals why schools are struggling: AI is everywhere, but tools can’t reliably identify it.

14.8% of English-language essays contained 80%+ AI-generated writing during October 2025 to February 2026, up from a 3.3% average during April-August 2023. This is a 4.5x increase in roughly two and a half years. Turnitin’s own data shows the scale of the problem institutions are trying to solve.
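The growth figure can be checked with a short Python calculation. This is just the arithmetic on the two shares quoted above; the helper name `growth` is hypothetical.

```python
def growth(early_share: float, late_share: float) -> tuple[float, float]:
    """Return (ratio, percentage-point change) between two shares given in percent."""
    if early_share <= 0:
        raise ValueError("early_share must be positive")
    return late_share / early_share, late_share - early_share


# 3.3% average for April-August 2023 vs. 14.8% for October 2025 to February 2026.
ratio, points = growth(3.3, 14.8)
print(f"{ratio:.1f}x increase, +{points:.1f} percentage points")
# 4.5x increase, +11.5 percentage points
```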

Student adoption is even higher: 92% of students report using AI, with 88% admitting to using it for graded assignments. Yet faculty report that AI-specific plagiarism policies are only 28% effective at changing behavior. The tools are flagging more submissions but not deterring usage.

Check Your Score Risk Level

Interactive Risk Calculator

Enter your Turnitin AI detection score to see your risk level, what faculty typically do at that threshold, and whether your school has disabled detection.
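The interactive calculator itself is not reproduced in this text. As a minimal sketch of the lookup it describes, the Python below maps a score to the bands from the Score Range Framework table, notes whether the score would be hidden as an asterisk, and checks it against the 30% level that faculty on Reddit reported as a personal trigger. The names `Band`, `BANDS`, `DISABLED`, and `check_score` are hypothetical, the faculty notes are shortened from the table, and `DISABLED` holds only a handful of schools from the table above, not the full list of 61.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Band:
    low: int
    high: int
    official_meaning: str
    faculty_note: str


# Visible score bands, condensed from the Score Range Framework table.
BANDS = [
    Band(0, 0, "No AI patterns detected", "Doesn't guarantee no AI used"),
    Band(1, 10, "Minimal segments", "Usually not a concern"),
    Band(11, 20, "Noticeable content", "Review highlighted portions"),
    Band(21, 40, "Significant segments", "Combine with process evidence"),
    Band(41, 60, "Majority appears AI-like", "Request version history and drafts"),
    Band(61, 80, "Predominantly AI-like", "Strong signal; require multiple forms of evidence"),
    Band(81, 100, "Highly likely AI-generated", "Follow institutional policy; still not proof"),
]

# A few institutions from the disabled-detection table (illustrative subset).
DISABLED = {"MIT", "Yale", "NYU", "Vanderbilt", "Georgetown", "UBC", "Curtin University"}


def check_score(score: int, school: Optional[str] = None) -> dict:
    """Look up the band for an integer score and summarize what this guide says about it."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    band = next(b for b in BANDS if b.low <= score <= b.high)
    label = f"{band.low}%" if band.low == band.high else f"{band.low}-{band.high}%"
    return {
        "band": label,
        "official_meaning": band.official_meaning,
        "faculty_note": band.faculty_note,
        "hidden_as_asterisk": 1 <= score <= 19,
        "meets_30_percent_faculty_threshold": score >= 30,
        # None means no school was given, so nothing can be said either way.
        "school_disabled_detection": (school in DISABLED) if school else None,
    }


print(check_score(35, "Vanderbilt"))
```

The output is a description of how scores in that band tend to be treated, not a verdict; as the rest of this guide stresses, a single score is not proof.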

Methodology & Research Notes

How We Compiled This Guide

- Research Date: April 6, 2026

- Sources Consulted: 15 primary sources including Turnitin official guides, university statements, academic research papers, news archives, and faculty forums

- Sources Cited: 20+ cross-verified references

- Facts Extracted: 43 verified claims, with 2 unverifiable claims excluded

- Verification Standard: Cross-verified claims required matching information from at least 2 independent sources; single-source claims labeled as such

Key Sources

- Turnitin Guides (guides.turnitin.com) — Official score breakdown documentation

- Turnitin blog posts — CPO statements on false positive trade-offs

- Stanford HAI study (Liang et al., 2023) — AI detector bias research

- PLEASE database (pleasedu.org) — University AI detection policies

- Reddit r/Professors threads — Faculty decision-making patterns

- University official statements — Vanderbilt, Curtin, WSU, Purdue, UW-Whitewater

- Legal case coverage — Inside Higher Ed, CBS News, NBC News

Limitations

- Turnitin score interpretation varies by institution; no universal policy exists

- Faculty responses reported via Reddit may not represent all professors

- False positive studies use different datasets; exact Turnitin-specific rates not always isolated

- Legal cases are ongoing; outcomes and precedents will evolve

- AI detection technology is rapidly changing; this guide reflects February 2026 understanding

Frequently Asked Questions

What Turnitin AI percentage is safe?

There’s no universal “safe” percentage. Turnitin doesn’t even show scores from 1% to 19% (it displays an asterisk instead). Most universities treat 20%+ as a conversation trigger, not automatic punishment. Faculty on Reddit report 30%+ as their personal investigation threshold. But every school and professor is different.

The key insight: a single score is never enough. Universities that still use AI detection are moving toward requiring process evidence—drafts, version history, browser history—before taking action.

Can Turnitin detect AI if I paraphrase or use a humanizer?

Turnitin’s detection accuracy drops with paraphrased content. Their CPO admits they catch ~85% and let ~15% through to keep false positives low. This means intentionally evading detection via paraphrasing has a measurable success rate.

However, using humanizer tools is itself risky—some universities consider it misconduct, and it’s a form of deception either way. More importantly, heavy paraphrasing can destroy your ideas if you lose the original meaning in the process.

My score was 0% but my professor still accused me. Is that possible?

Yes, absolutely. A 0% score means Turnitin found no AI patterns, but professors can still suspect AI based on:

- Writing style inconsistencies (sudden improvements, vocabulary jumps)

- Knowledge gaps (you wrote about concepts in ways that don’t match your participation)

- Comparison with your prior work (this doesn’t sound like you)

- Content analysis (your arguments don’t align with your stated learning level)

Also, Turnitin misses AI in certain formats entirely—bullet points, outlines, poetry, code, short submissions under 300 words. If your essay was submitted in a different format or is mostly citations, Turnitin won’t flag it even if parts were AI-assisted.

I wrote my paper myself but got flagged. What do I do?

False positives happen regularly. Turnitin has a 4% sentence-level false positive rate, and independent studies show ESL students are flagged at dramatically higher rates (61% in one study).

First steps:

- Don’t panic. You have options.

- Gather your version history—Google Docs, Word Online, any drafts you saved

- Export your browser history showing your research process

- Collect any outlines, notes, or research documents

- Request all detection results, not just the Turnitin score

- Ask your professor directly—many will drop the issue if you can explain the flagged portions

Your professor may not even see your exact score, depending on how the system is configured. Some schools only see a binary flag.

Does my university even use Turnitin AI detection?

61+ universities across 4 countries have disabled it entirely, including MIT, Yale, NYU, Vanderbilt, and Georgetown. No Top 10 US school publicly uses it for coursework.

How to find out:

- Check your student handbook or academic integrity policies

- Email your registrar or academic integrity office directly

- Ask your professor—they’ll know if they have access

- Look for lists of schools that banned detection

If your school disabled detection, you’re likely not in the Turnitin AI detection workflow at all.

What’s the margin of error on Turnitin scores?

Turnitin acknowledges ±15 percentage points. A score of 50% could actually be 35%-65%. This range is massive—it’s the difference between “minor concern” (35%) and “formal investigation” (65%).

This margin exists because AI detection is fundamentally probabilistic. The system assigns confidence scores that are then displayed as percentages. The ±15 point range represents the statistical uncertainty built into the model.

Can I be expelled for a high Turnitin AI score?

A Turnitin score alone should never result in expulsion. However, real consequences do happen:

- University of Minnesota, Nov 2025: PhD student was expelled based on AI accusation; now suing

- Adelphi University, early 2026: Student won lawsuit despite 100% Turnitin flag

- Yale SOM, ongoing: Student suing for discriminatory treatment after GPTZero flag and 1-year suspension

The trend is toward requiring multiple forms of evidence. Most updated policies treat a high score as a starting point for investigation, not proof.

If you’re facing an expulsion threat, three things matter: your school must follow due process, documenting your authorship process is critical, and courts are increasingly finding detection-only accusations insufficient.

Why doesn’t Turnitin show my score when it’s below 20%?

Turnitin hides scores in the 1-19% range because, below the 20% threshold, the system can’t reliably distinguish AI text from formal human writing. It shows an asterisk (*%) instead.

This is a tacit admission: their own accuracy is too low below 20%. Yet students with hidden scores still face accusations based on the asterisk alone. The irony is that Turnitin’s false positive rate below 20% is actually higher than their claimed <1% rate above 20%—which is why they hide it.

Sources

- Turnitin AI Detection Guides — Official documentation on score interpretation and detection methodology

- Turnitin Official Blog — CPO statements on false positive trade-offs and detection design

- PLEASE Database — Comprehensive catalog of 61 universities that disabled AI detection

- UW-Whitewater CATLST Blog — January 2026 guidance treating AI scores as conversation starters

- Purdue Online — Official guidance that percentages should not be sole basis for action

- Stanford Human-Centered Artificial Intelligence — Liang et al. 2023 study on AI detector bias and ESL accuracy

- Inside Higher Ed — Coverage of Adelphi student lawsuit and AI detection policy changes

- CBS News — Adelphi University legal case coverage

- NBC News — University of Minnesota PhD student expulsion and lawsuit

- Reddit r/Professors — Faculty discussion threads on Turnitin score thresholds and investigation practices

- WSU Faculty Senate — Official statement on AI detection cancellation February 2026

- Vanderbilt Brightspace Announcement — Official statement disabling AI detection August 16, 2023

- EdTech Innovation Hub — Coverage of Curtin University AI detection policy January 2026

- Turnitin Press Release — AI usage statistics October 2025-February 2026

- BestColleges — Analysis of AI detection accuracy and limitations

- Crowell & Moring Legal Analysis — Academic integrity litigation trends

- GradPilot — Top US universities’ policies on AI detection and coursework

- Thesify — University AI policies and academic integrity documentation

- AllAboutAI 2026 Statistics — Student AI usage trends and faculty policy effectiveness

- arXiv — Weber-Wulff et al. 2023 study on AI detection accuracy across 14 tools