AI in College Admissions Essays: 2026 Statistics, Detection Data, and Application Impact

Roughly 1 in 3 high school seniors used AI to help write their college admissions essays in 2023-24

Source: foundry10, Navigating College Applications with AI, 2024

More than three years after ChatGPT became publicly available, generative AI has quietly become a fixture of the college application. Roughly a third of high school seniors now reach for a chatbot at some point while crafting their personal statements. Of those who do, about half use it to brainstorm and one in five uses it to draft. Yet more than two-thirds of the colleges receiving those essays still have no formal policy telling applicants what is, or isn’t, allowed.

This report compiles the verified statistics on AI use in college admissions essays as of May 2026, drawing on the foundry10 white paper, the Kaplan 2025 admissions officer survey, a 2026 Cornell/Carnegie Mellon study of tens of thousands of real essays, and a BestColleges survey of 1,000 current undergraduate and graduate students. The picture that emerges is messy: high adoption, low policy clarity, deep equity concerns, and detection tools that are still wildly unreliable on the kind of writing that actually appears in admissions files. For context on how the broader detection landscape evolved, see our 2026 AI Detection Industry Report.

Key takeaways

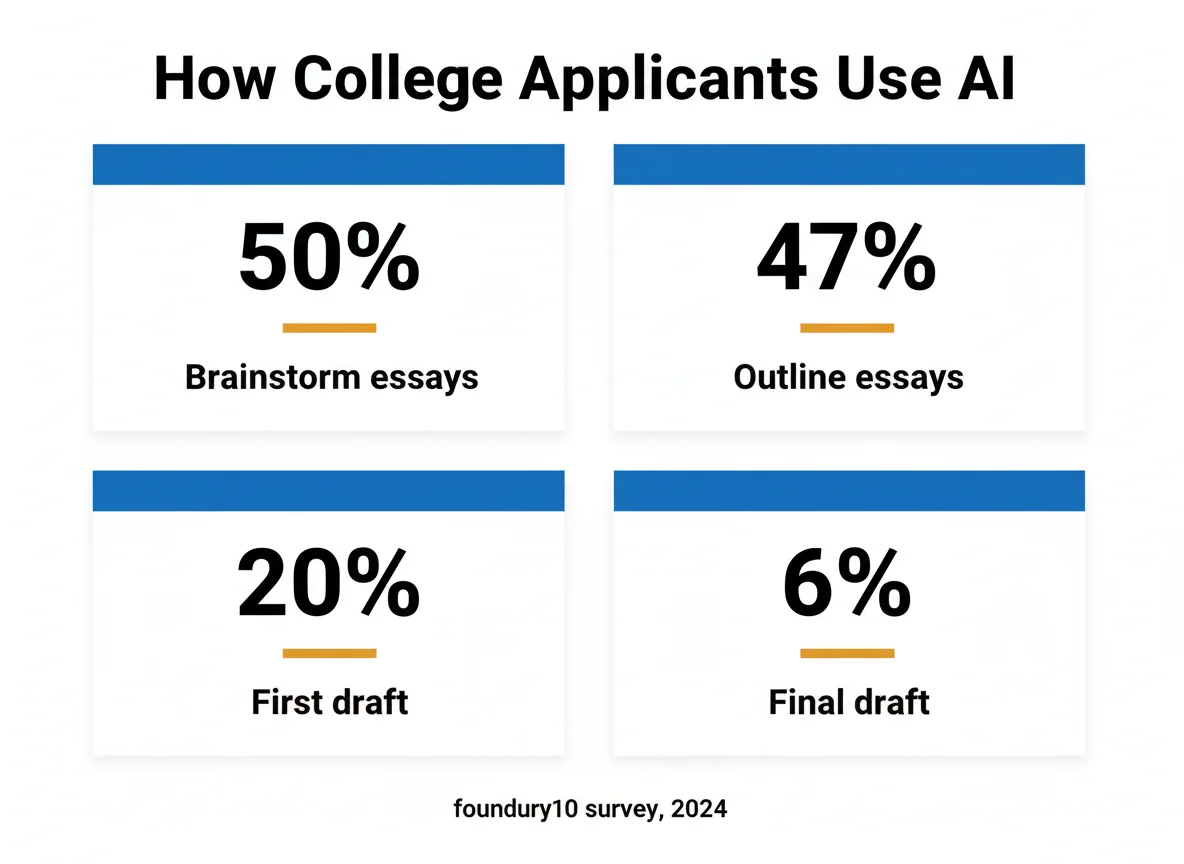

- 50% of applicants who used AI used it to brainstorm their admissions essay (foundry10)

- 20% used it to draft a first version of the essay (foundry10)

- 6% relied on it for the final draft they submitted (foundry10)

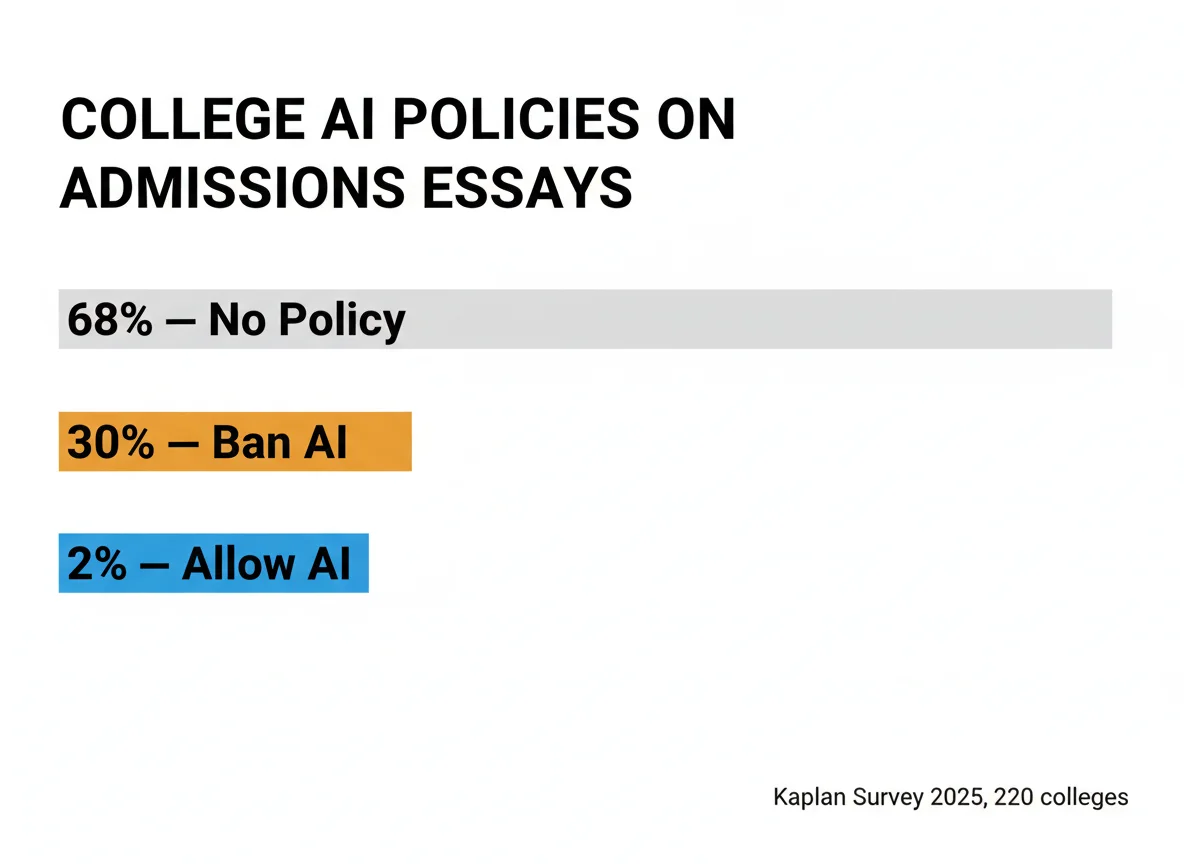

- 68% of colleges still have no formal AI policy on essays (Kaplan, 2025)

- 30% of colleges officially ban AI in admissions essays (Kaplan, 2025)

- 2% of colleges explicitly permit AI essay drafting (Kaplan, 2025)

- 50% of admissions officers view AI essay use unfavorably (Kaplan, 2025)

- 61% of TOEFL essays by non-native English speakers were misclassified as AI in a Stanford study

What’s in this report

- How many students actually use AI on their essays

- What they use it for (and what they don’t)

- College policies: the 68% policy void

- Admissions officers’ attitudes

- Detection accuracy and false positives

- The equity problem: who gets penalized

- Frequently asked questions

How Many Students Use AI to Write Their Admissions Essays

Adoption Data

foundry10’s Navigating College Applications with AI remains the most rigorous public dataset on student adoption. Among graduating seniors who applied to college in 2023-24, roughly 33% reported using a generative AI tool — most often ChatGPT — at some stage of their essay work. Sixty-five percent said they used AI rarely or not at all for school in general, but nearly a third of all surveyed students still reached for it specifically when working on college applications. That gap is telling: students who avoid AI for homework still treat the college essay as worth the help.

| Group or finding | Share | Source |
|---|---|---|
| All 2023-24 applicants who used AI on their essay | ~33% | foundry10 (2024) |
| Current college students who say they would have used AI | 47% | BestColleges (Oct 2023, n=1,000) |
| First-generation college students (would have used AI) | 58% | BestColleges (2023) |
| Non-first-generation students (would have used AI) | 33% | BestColleges (2023) |
| Applicants who avoided AI for fear of a cheating accusation | 39% | foundry10 (2024) |

The 39% who avoided AI specifically because they worried it would count as cheating is the single most underreported finding in the foundry10 dataset. It helps explain why the numbers in our AI detection anxiety statistics spike sharply during application season: applicants are holding back on tools they might otherwise use, not because they did anything wrong, but because they fear being misread.

What Applicants Actually Use AI For

Use Cases

The drop-off from brainstorming (50%) to drafting (20%) to relying on AI for the final draft (6%) is the most important statistic in this entire report. It tells us that the dominant pattern is not students cheating with ChatGPT. It is students using it the way they’d use a thoughtful older sibling: someone to talk through ideas with, get an outline from, and clean up grammar at the end. Whether colleges consider that cheating is a separate, unresolved question that the 68% policy void in the next section makes worse.
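To make the size of that drop-off concrete, the short sketch below expresses each stage as a fraction of the brainstorming share. The stage percentages are the foundry10 figures quoted in this report. The function names are illustrative, and the second helper rests on an assumption the report does not confirm: that the stage percentages describe the roughly 33% of applicants who used AI at all, rather than all applicants.

```python
# Stage shares as quoted in this report (foundry10).
STAGE_SHARES = {"brainstorm": 0.50, "first draft": 0.20, "final draft": 0.06}


def relative_to_first(shares):
    """Express every stage as a fraction of the first stage listed."""
    first = next(iter(shares.values()))
    return {stage: share / first for stage, share in shares.items()}


def share_of_all_applicants(shares, any_use_rate):
    """Rescale stage shares to the whole applicant pool.

    ASSUMPTION: the stage shares are measured among AI users only,
    and `any_use_rate` is the fraction of all applicants who used AI.
    """
    return {stage: share * any_use_rate for stage, share in shares.items()}


if __name__ == "__main__":
    for stage, ratio in relative_to_first(STAGE_SHARES).items():
        print(f"{stage}: {ratio:.0%} of the brainstorm share")
    for stage, share in share_of_all_applicants(STAGE_SHARES, 0.33).items():
        print(f"{stage}: about {share:.1%} of all applicants (under the assumption above)")
```

On these figures, the first-draft share is 40% of the brainstorming share and the final-draft share is 12% of it. That ratio holds whichever base the percentages were measured against.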

Teachers, notably, mirror this same pattern in their own application-related work. About 31% of teachers reported using generative AI to help craft letters of recommendation in the same foundry10 cycle. The norms students are absorbing aren’t just emerging in dorm rooms — they’re being modeled by the adults writing the supporting materials. This dovetails with our analysis of professors using ChatGPT statistics, which shows even higher AI adoption rates among instructors than among students.

The 68% Policy Void: What Colleges Actually Say (or Don’t)

Policy Data

This is the dataset that makes the entire applicant experience confusing. Kaplan polled 220 admissions offices at U.S. News & World Report-ranked colleges between July and August 2025: 68% reported no formal policy on AI in essays, 30% ban it, and 2% explicitly permit it. The result is a policy landscape where the modal college simply hasn’t decided yet. For applicants this means there is no single rule to follow. The answer depends on which schools an individual student is applying to, and most of those schools haven’t published guidance.
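As a rough sense of scale, the Kaplan percentages can be turned back into approximate counts of responding colleges. This is a back-of-the-envelope sketch: the percentages are the rounded figures quoted in this report, so the counts are estimates and not numbers Kaplan published, and the names `KAPLAN_SHARES` and `approximate_counts` are illustrative.

```python
# Kaplan 2025 figures as quoted in this report (rounded percentages).
KAPLAN_RESPONDENTS = 220
KAPLAN_SHARES = {
    "no formal policy": 0.68,
    "ban AI in essays": 0.30,
    "explicitly permit AI drafting": 0.02,
}


def approximate_counts(shares, respondents):
    """Convert rounded shares into approximate whole-number counts."""
    return {label: round(share * respondents) for label, share in shares.items()}


if __name__ == "__main__":
    # Sanity check: the three policy categories should cover every respondent.
    assert abs(sum(KAPLAN_SHARES.values()) - 1.0) < 1e-9
    for label, count in approximate_counts(KAPLAN_SHARES, KAPLAN_RESPONDENTS).items():
        print(f"{label}: roughly {count} of {KAPLAN_RESPONDENTS} colleges")
```

Read this way, about 150 responding colleges had no formal policy, about 66 banned AI in essays, and only about 4 explicitly permitted AI drafting.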

When colleges do publish policies, the line is usually drawn between “ideation” (allowed) and “generation” (not). Brainstorming, outlining, and line-edit grammar are widely permitted. AI-generated paragraphs in the final submission are not. The Common App’s 2024 disclosure language reflects this consensus by asking applicants to certify the essay represents their own work. Schools that explicitly banned external AI assistance during essay drafting are a small but growing cluster — a different pattern from the universities we documented in our piece on universities that banned AI detectors after high false-positive rates began producing wrongful accusations.

What Admissions Officers Actually Think

Officer Attitudes

The Kaplan attitude breakdown is the single best signal of how flagged or stylistically anomalous essays get read inside admissions offices. Half the room is already predisposed against AI-assisted writing. Only a small minority sees it positively, and most of those treat it as benign brainstorming, not generated prose. Combined with the policy void above, this means that an applicant whose essay reads AI-generated faces a coin flip: half the readers will treat it as a strike against authenticity, even where no formal rule has been violated.

Separately, an Inside Higher Ed survey from September 2023 found that ~80% of colleges expected to use AI somewhere in admissions during the 2024 cycle, with about 60% of officers reporting AI was being used to review personal essays. That doesn’t mean every essay runs through a detector — far from it — but it confirms that the technology is now on both sides of the application. For schools investing in detection software, see our breakdown of AI detection tool spending at universities in 2026.

Detection Accuracy: Why Flagged ≠ Cheated

Detection Data

This is the section every applicant should read. The detector tools that schools and counselors casually invoke are not calibrated for short, stylized personal essays written by 17-year-olds — they’re trained primarily on academic prose. Two well-documented failure modes appear repeatedly in published research: false positives on highly polished writing and systemic bias against non-native English speakers. For a complete breakdown of how Turnitin’s own thresholds work, see our piece on the Turnitin AI detection score threshold.

| Finding | Approximate rate | Source |
|---|---|---|
| Turnitin false-positive rate (per sentence) | ~4% | Turnitin (vendor-reported) |
| Mislabeling rate on TOEFL essays (non-native English) | 61% | Stanford / Liang et al., 2023 |
| Common detector false-flag rate on highly edited human prose | 9–15% | Multiple independent benchmarks |
| Applicants who avoided AI for fear of a cheating accusation | 39% | foundry10 (2024) |

Turnitin itself has acknowledged that essays under 300 words produce less reliable scores, and most Common App supplements fall in that danger zone. Combined with the documented ESL bias in AI detection, this means applicants who write in English as a second language, or whose essays are heavily edited by counselors, face an elevated false-positive risk. If you’ve already been flagged, our checklist on defending against Turnitin AI false positives walks through the evidence to assemble. And if the flag came after submission, the first 24 hours matter: see what to do in the next 24 hours after an AI accusation.
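A per-sentence false-positive rate sounds small until it is compounded over a whole essay. The sketch below is a simplified illustration and not a description of how Turnitin scores documents. It takes the vendor-reported ~4% per-sentence rate from the table above and assumes, purely for illustration, that each sentence is flagged independently of the others. The function name and the sentence counts are hypothetical.

```python
def prob_at_least_one_flag(per_sentence_rate: float, sentences: int) -> float:
    """Chance that at least one human-written sentence is falsely flagged.

    ASSUMPTION: sentences are flagged independently of one another.
    Real detectors may not behave this way.
    """
    if not 0.0 <= per_sentence_rate <= 1.0:
        raise ValueError("per_sentence_rate must be between 0 and 1")
    if sentences < 0:
        raise ValueError("sentences must be non-negative")
    return 1.0 - (1.0 - per_sentence_rate) ** sentences


if __name__ == "__main__":
    # ~4% is the vendor-reported per-sentence figure cited in this report.
    for n in (10, 20, 30):
        p = prob_at_least_one_flag(0.04, n)
        print(f"{n} sentences: {p:.0%} chance of at least one false flag")
```

Under the independence assumption, a 10-sentence response already carries roughly a one-in-three chance of containing at least one falsely flagged sentence. A flagged sentence is not the same as a flagged essay, so this is best read as an intuition for why small per-sentence error rates still matter, not as a prediction.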

The Equity Problem: Who Gets Penalized

Equity Findings

This is the finding that has begun reshaping how admissions researchers talk about AI. The Cornell/Carnegie Mellon paper (lead author Jinsook Lee, Cornell) analyzed tens of thousands of essays submitted to an unnamed selective institution over four years, beginning before ChatGPT’s late-2022 release. Two patterns emerged. First, fee-waived applicants — the proxy for lower-income status — increased their AI use faster than any other group. Second, AI-assisted writing was correlated with lower predicted admission probabilities, and that penalty fell hardest on the same lower-income applicants.

The paper’s authors also documented a striking linguistic homogenization trend: essays at the selective college converged toward similar phrasings, openings, and rhetorical structures after AI tools went mainstream. The convergence was greatest among rejected applicants and among lower-income applicants. Read alongside the broader landscape of AI cheating consequences statistics, the picture is clear: AI penalties land unevenly, and the equity story is the part of the admissions-AI debate moving fastest in 2026.

Methodology & Limitations

How we built this report

All statistics in this report are sourced from named, publicly available studies, surveys, or vendor-published documentation. Each finding is tagged with one of four verification levels: verified (single named source with primary data), cross-verified (two or more independent sources report the same finding), single-source (only one published source available), and commonly-claimed (vendor-reported figures we surface for context but cannot independently audit).

The foundry10 white paper sampled high school seniors who applied during the 2023-24 cycle. The Kaplan 2025 survey polled 220 admissions offices at U.S. News & World Report-ranked colleges between July and August 2025. The BestColleges survey polled 1,000 current undergraduate and graduate students in October 2023. The Cornell/Carnegie Mellon study (May 2026) analyzed tens of thousands of essays at one unnamed selective institution across four admissions cycles. None of these datasets represent the full U.S. applicant population, and findings should be read as directional rather than census-grade.
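One way to see what "directional" means in practice is to attach a rough sampling margin of error to the headline percentages. The sketch below uses the standard normal-approximation formula for a proportion at 95% confidence. It assumes simple random sampling, which none of the cited surveys claims, so the results are a floor on the uncertainty and not the surveys' own published margins. The function name is illustrative.

```python
import math


def margin_of_error(proportion: float, sample_size: int, z: float = 1.96) -> float:
    """Normal-approximation margin of error for a sample proportion.

    ASSUMPTION: simple random sampling. z=1.96 corresponds to 95% confidence.
    """
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1.0 - proportion) / sample_size)


if __name__ == "__main__":
    # Figures as quoted in this report.
    surveys = [
        ("BestColleges: would have used AI", 0.47, 1000),
        ("Kaplan: no formal AI policy", 0.68, 220),
    ]
    for label, p, n in surveys:
        moe = margin_of_error(p, n)
        print(f"{label}: {p:.0%} ± {moe * 100:.1f} points (n={n})")
```

On these assumptions the 47% BestColleges figure carries a margin of roughly ±3 percentage points and the 68% Kaplan figure roughly ±6, which is why small differences between surveys should not be over-read.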

Frequently Asked Questions

How many students use AI to write their college admissions essays?

Do colleges check admissions essays for AI?

Will my essay be flagged if I used ChatGPT to brainstorm?

Are AI detectors accurate on admissions essays?

Do students who use AI in their essays get admitted at lower rates?

What is the safest way to use AI on a college essay?

Sources & references

- foundry10. Navigating College Applications with AI white paper. foundry10.org/resources/navigating-college-applications-with-ai

- Inside Higher Ed (Johanna Alonso). “Low-Income Students More Likely to Submit AI-Generated Admissions Essays.” May 8, 2026. insidehighered.com/news/admissions

- Kaplan. “Colleges Increasingly Clarify Rules on GenAI Use in Admissions Essays.” 2025 survey of 220 admissions offices. kaplan.com

- Lee, J., Alvero, AJ et al. Cornell/Carnegie Mellon working paper, May 2026. Coverage at Inside Higher Ed

- BestColleges. “Half of College Students Would Have Used AI on Admissions Essay.” October 2023 (n=1,000). bestcolleges.com/research

- Liang, W. et al. “GPT detectors are biased against non-native English writers.” Stanford HAI, 2023. hai.stanford.edu

- Pangram Labs. “Do College Admissions Offices Check for AI?” 2025. pangram.com/blog

- Hechinger Report. “Students who want to try AI in college admission essays should use it for brainstorming.” 2024. hechingerreport.org