Professors Using ChatGPT: 2026 Statistics, Spend Data, and the Hypocrisy Debate

Key takeaways

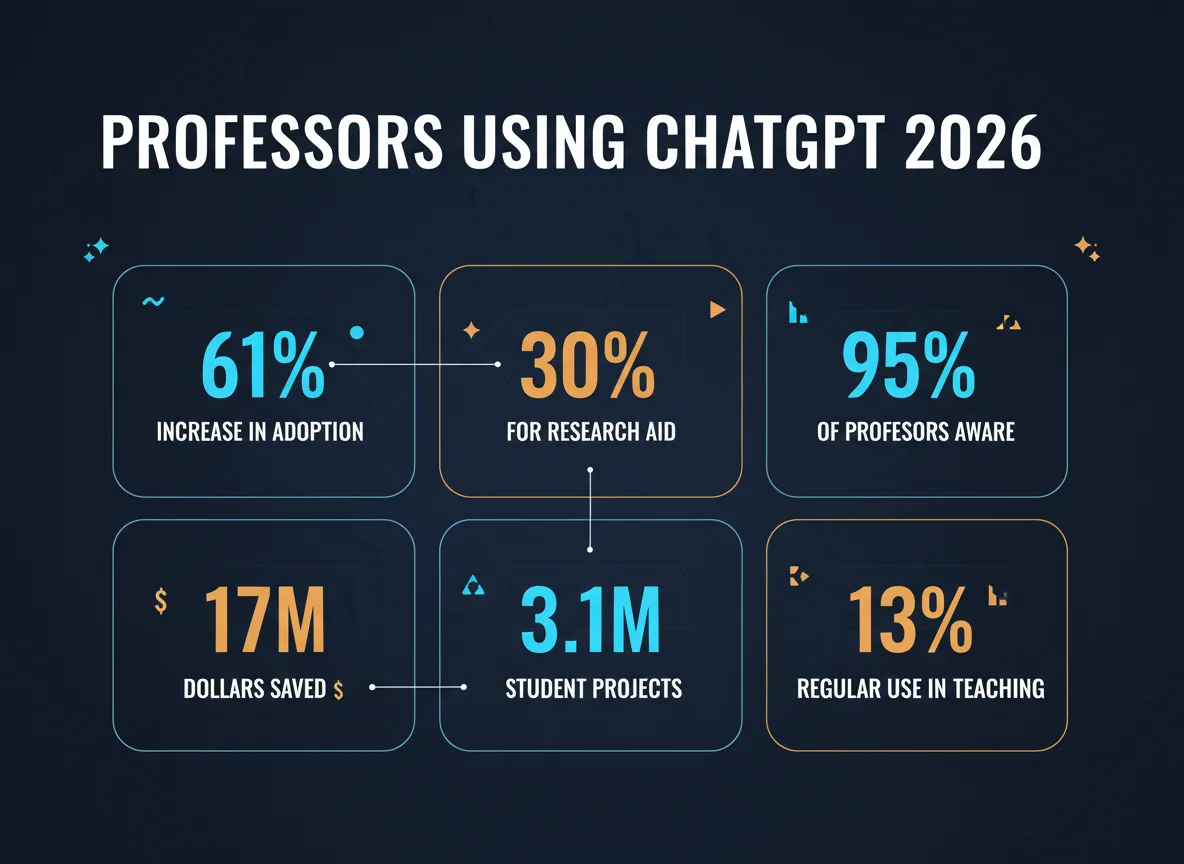

- 61% of faculty have used AI in their teaching (Digital Education Council 2025)

- 30% of college instructors use AI daily or weekly, up from 4% in spring 2023 (Tyton Partners / NPR)

- 40% of higher-ed administrators use AI daily or weekly, a faster adoption rate than instructors

- $17M: size of Cal State’s January 2025 OpenAI contract for unlimited ChatGPT Edu across 22 campuses

- $3.1M: USC’s annual ChatGPT Edu subscription, signed during a ~$250M budget deficit

- 52% of Cal State faculty say AI has had a negative effect on their teaching (94K-respondent system survey)

- 95% of faculty fear GenAI will increase student overreliance on AI (Elon University / AAC&U)

- $8,000: tuition refund demanded by a Northeastern senior after catching her professor using ChatGPT

- Only 3% of AI-using teachers admit to using AI on high-stakes grading

What’s in this report

- Faculty adoption: how many professors actually use ChatGPT

- From experiment to habit: daily and weekly usage

- Institutional spending: $17M, $3.1M, $1.5M deals

- What professors actually do with ChatGPT

- The hypocrisy problem: students demanding tuition refunds

- Faculty perception: 95% fear, 52% see harm

- Calculator: your school’s potential ChatGPT spend

- Methodology & sources

- FAQ

How many professors are actually using ChatGPT in 2026?

| Survey | Population | Headline metric | Year |
|---|---|---|---|
| Digital Education Council Global AI Faculty Survey | ~1,800 faculty (multi-country) | 61% have used AI in teaching | 2025 |
| Tyton Partners higher-ed survey (via NPR) | 1,800+ US higher-ed staff | 30% of instructors use AI daily/weekly (vs 4% in 2023) | Oct 2025 |
| Elon University / AAC&U faculty AI survey | 1,057 faculty (US) | 95% fear student overreliance on AI | Jan 2026 |
| California State University systemwide survey | 94,000+ students/faculty/staff | 52% of faculty say AI has had a negative effect on teaching | 2026 |
| Walton/Impact Research awareness study | National K-12/HE sample | 82% of college professors aware of ChatGPT (vs 55% K-12) | 2024-25 |

The headline is the gap between adoption (61%) and depth (only 12% of those who try AI use it more than minimally). Awareness has outrun integration by roughly a generation of faculty turnover.

The 61% number sounds like saturation, but it papers over enormous variance. Tenure-track faculty in STEM departments are far more likely to use AI weekly than humanities adjuncts, and institutional access varies wildly: an instructor at a school with a seven-figure AI contract has different incentives than one paying $20/month out of pocket. The Cal State system’s $17M deal alone covers more than 460,000 students, while many regional comprehensives still have no institutional AI subscription at all. That spending gap is reshaping what “faculty AI use” even means in 2026.

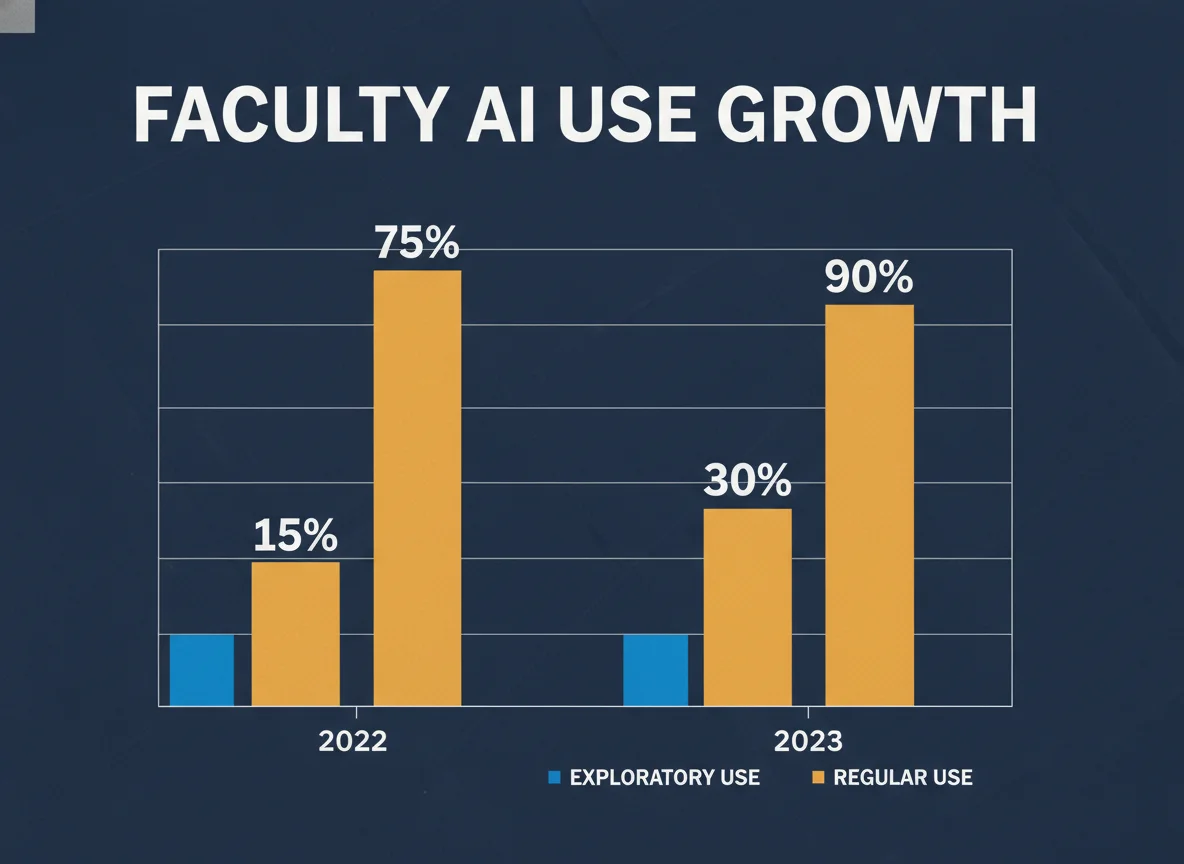

From experiment to habit: how often professors actually use AI

The jump in daily or weekly use from 4% to 30% of instructors in two and a half years, roughly seven-fold, matters because it lands during the same period in which universities are tightening student AI policies. Detection Drama’s 2026 industry report tracked detection-tool spending growing in lockstep, and our analysis of academic-misconduct outcomes showed an asymmetry that students notice immediately: faculty AI use is professionally normalized, while student AI use can still trigger an academic-integrity referral.
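As a quick check on the "seven-fold" figure, the sketch below recomputes the change from the two Tyton Partners / NPR data points quoted in this report (4% in spring 2023, 30% in October 2025). The function names are illustrative, not taken from any survey methodology.

```python
def fold_change(before_pct: float, after_pct: float) -> float:
    """Ratio of the later share to the earlier share."""
    if before_pct <= 0:
        raise ValueError("before_pct must be positive")
    return after_pct / before_pct


def point_change(before_pct: float, after_pct: float) -> float:
    """Difference between the two shares, in percentage points."""
    return after_pct - before_pct


# Share of instructors using AI daily or weekly, as quoted in this report.
spring_2023 = 4.0
october_2025 = 30.0

print(f"Fold change: {fold_change(spring_2023, october_2025):.1f}x")
print(f"Point change: {point_change(spring_2023, october_2025):.0f} percentage points")
```

The two measures answer different questions: the ratio (7.5x) shows how fast a small base grew, while the 26-point difference shows how much of the instructor population actually moved.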

How much universities are paying to put ChatGPT in faculty hands

| Institution | Vendor | Contract value | Coverage | Year |
|---|---|---|---|---|
| California State University (22 campuses) | OpenAI ChatGPT Edu | $17M | ~460,000 students + ~63,000 faculty/staff | Jan 2025 |
| University of Southern California (USC) | OpenAI ChatGPT Edu | $3.1M / year | Faculty + staff + students, all schools | Jan 2026 launch |
| University of South Carolina | OpenAI training contract | $1.5M | Responsible-use training for faculty/students | Jun 2025 |
| Arizona State University (early adopter) | OpenAI partnership | Undisclosed | Faculty + research + classroom pilots | 2024 |

Cal State’s 2025 deal — signed during a budget squeeze and announced at a Board of Trustees meeting before broad faculty consultation — remains the single largest publicly disclosed university-OpenAI contract in the United States.

Two structural points stand out in the spend data. First, USC’s $3.1M went through during a fiscal year with a reported ~$250M deficit, and student journalists at the Daily Trojan have pressed the provost on whether the university consulted faculty before committing. Second, Cal State’s deal effectively gave 460,000 students and their professors equal access to the same tier of ChatGPT Edu — which makes any subsequent classroom rule that bans student AI use harder to defend. The same problem is visible in our Detection Drama analysis of universities that banned AI detectors while leaving institutional AI access intact for staff.

What professors actually use ChatGPT for

| Use case | % of AI-using teachers | Risk profile |
|---|---|---|
| Lesson planning & slide prep | ~35% | Low: visible to students; quality issues are public |
| Drafting recommendation letters | significant share (NPR/Tyton) | Medium: ethical concerns, FERPA-adjacent |
| Writing student emails / feedback | significant share | Medium: students can detect tone shift |
| Grading low-stakes assignments | 13% | Medium: bias documented |
| Grading high-stakes assignments | 3% | High: legal/equity risk; banned at many schools |
| Teaching with AI (in-class activity) | 29% | Low: explicit, transparent |
| Running AI to test what students might generate | 43% | Low: defensive use |

3%: the fraction of AI-using teachers who admit to using ChatGPT on high-stakes grading. Critics argue the real number is higher because most accountability frameworks rely on self-report.

The most volatile category is “drafting recommendation letters” and other student-facing correspondence. When students discover that their letter of recommendation was AI-generated, the perceived breach of trust is high — and the work product is exactly what tuition is supposed to fund. Detection Drama’s research into the AI humanizer industry consistently surfaces faculty among the heaviest users of humanizer tools, often to camouflage routine administrative writing rather than research output. That same pattern shows up across our coverage of AI-detection anxiety, where students describe a double standard they feel powerless to challenge.

The hypocrisy problem: when students catch professors using ChatGPT

| Case | Trigger | Outcome |
|---|---|---|
| Northeastern University (Ella Stapleton, 2025) | Visible ChatGPT prompt text inside lecture notes; AI-generated images with extraneous body parts; misspellings | Formal complaint, $8,000 tuition refund demand, national press; professor apologized |
| Texas A&M (2023, foundational case) | Instructor ran final papers through ChatGPT and asked the bot if it had written them | Class temporarily failed; rescinded after public backlash; case cited in subsequent AI-detection lawsuits |
| Multiple Reddit r/Professors threads (2025-26) | Faculty publicly admit using ChatGPT for letters of rec, syllabi, exam questions while enforcing student AI bans | Posts cross 500-1,000 upvotes; widely shared by student advocacy outlets |

$8,000: the exact tuition refund Stapleton demanded for one course. The case is now used by student-advocacy groups as a template for similar complaints, particularly at institutions with strict AI-misconduct policies.

What makes the hypocrisy framing stick is that it intersects with three policies that already disadvantage students: Turnitin AI score thresholds applied without context, documented ESL bias in detector outputs, and the legal exposure faculty have been quietly insulated from. When a professor’s ChatGPT use produces a poorly drafted recommendation, no integrity board will be convened. When a student turns the same tool on a paper, the consequences can end a degree.

How faculty themselves feel about ChatGPT

| Sentiment | % of faculty | Source |
|---|---|---|
| GenAI will increase student overreliance on AI | 95% | Elon / AAC&U Jan 2026 |
| GenAI will diminish student critical thinking | 90% | Elon / AAC&U |
| GenAI will significantly change the work of teaching | 86% | Elon / AAC&U |
| AI has had a negative effect on my teaching | 52% | Cal State 2026 (94K+ total respondents, including students and staff) |
| Insufficiently trained to use AI effectively | ~75% | Inside Higher Ed / Digital Education Council |
| Skeptical AI is benefiting education overall | 59% | Cal State 2026 |

The takeaway is dissonant: faculty are using AI more than ever, paying real institutional money for access, and simultaneously reporting that they think the tool is harming their teaching. That’s the kind of contradiction that historically resolves through institutional policy — and the policies are still being written. Detection Drama’s tracking of AI detection in K-12 schools shows the same pattern at the secondary level, with administrators adopting AI faster than teachers and student-impact data lagging by 12-18 months.

Calculator: estimate your university’s potential ChatGPT spend

Per-seat ChatGPT Edu pricing has not been published, but Cal State’s $17M / ~520K seats works out to ~$33/seat/year. USC’s $3.1M / ~70K seats works out to ~$44/seat. Use $35-50 per seat as a rough planning band.
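The arithmetic above can be reproduced with a short sketch. It assumes the approximate seat counts quoted in this report (~520K for Cal State, ~70K for USC) and the $35-50 planning band. The `Contract` class, the `estimate_spend` helper, and the 20,000-seat example school are hypothetical, introduced only for illustration. The code divides contract value by seats and makes no adjustment for contract length.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    """A publicly reported deal; seat counts are this report's approximations."""
    name: str
    value_usd: float
    seats: int

    @property
    def per_seat(self) -> float:
        # Contract value divided by covered seats, not adjusted for contract term.
        return self.value_usd / self.seats


CAL_STATE = Contract("Cal State", 17_000_000, 520_000)
USC = Contract("USC", 3_100_000, 70_000)

# Rough planning band from the text above, in USD per seat.
LOW_PER_SEAT = 35.0
HIGH_PER_SEAT = 50.0


def estimate_spend(seats: int,
                   low: float = LOW_PER_SEAT,
                   high: float = HIGH_PER_SEAT) -> tuple[float, float]:
    """Return a (low, high) spend estimate for a given number of seats."""
    if seats < 0:
        raise ValueError("seats must be non-negative")
    return seats * low, seats * high


for contract in (CAL_STATE, USC):
    print(f"{contract.name}: ~${contract.per_seat:.0f} per seat")

# Hypothetical school with 20,000 combined student, faculty, and staff seats.
low, high = estimate_spend(20_000)
print(f"Estimated spend: ${low:,.0f} to ${high:,.0f}")
```

For the hypothetical 20,000-seat school, the band yields an estimate between $700,000 and $1,000,000. Actual pricing may differ, since neither contract's per-seat rate has been published.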


Frequently asked questions

What percentage of professors use ChatGPT or other AI tools?

How are professors actually using ChatGPT?

Is it hypocritical for professors to use ChatGPT while banning students from doing the same?

How much do universities spend on giving professors ChatGPT access?

Are professors allowed to use AI to grade student work?

Do students know when their professor is using ChatGPT?

Sources & references

- Digital Education Council, Global AI Faculty Survey, 2025.

- Tyton Partners, higher-education staff survey (n=1,800+), reported by NPR: Research, curriculum and grading: New data sheds light on how professors are using AI, Oct 2, 2025.

- Elon University / AAC&U, The AI Challenge: How College Faculty Members Assess the Present and Future, Jan 21, 2026 (n=1,057, fielded Oct 29-Nov 26, 2025).

- California State University Systemwide AI Survey, 2026 (n=94,000+), via CalMatters and Inside Higher Ed.

- CalMatters, Cal State struck a deal with OpenAI. Some students and faculty refuse to use it, May 2026.

- LAist, Inside Cal State’s big $17 million bet on ChatGPT for all.

- Daily Trojan, Professors redesigned courses after ChatGPT’s launch. USC endorsed it., April 2026.

- Morning Trojan, USC’s ChatGPT EDU subscription cost $3.1 million.

- SC Daily Gazette, USC signs $1.5M contract with OpenAI, June 2025.

- New York Times (May 2025) and Newsweek follow-up coverage of the Ella Stapleton / Northeastern University ChatGPT case.

- NBC News and The Hill, coverage of the 2023 Texas A&M ChatGPT-grading incident.

- Reddit r/college, r/Professors, r/GradSchool — primary thread mining (Detection Drama, May 2026).