AI Cheating Consequences at Universities: Statistics and Case Outcomes (2026 Report)

Key Takeaways

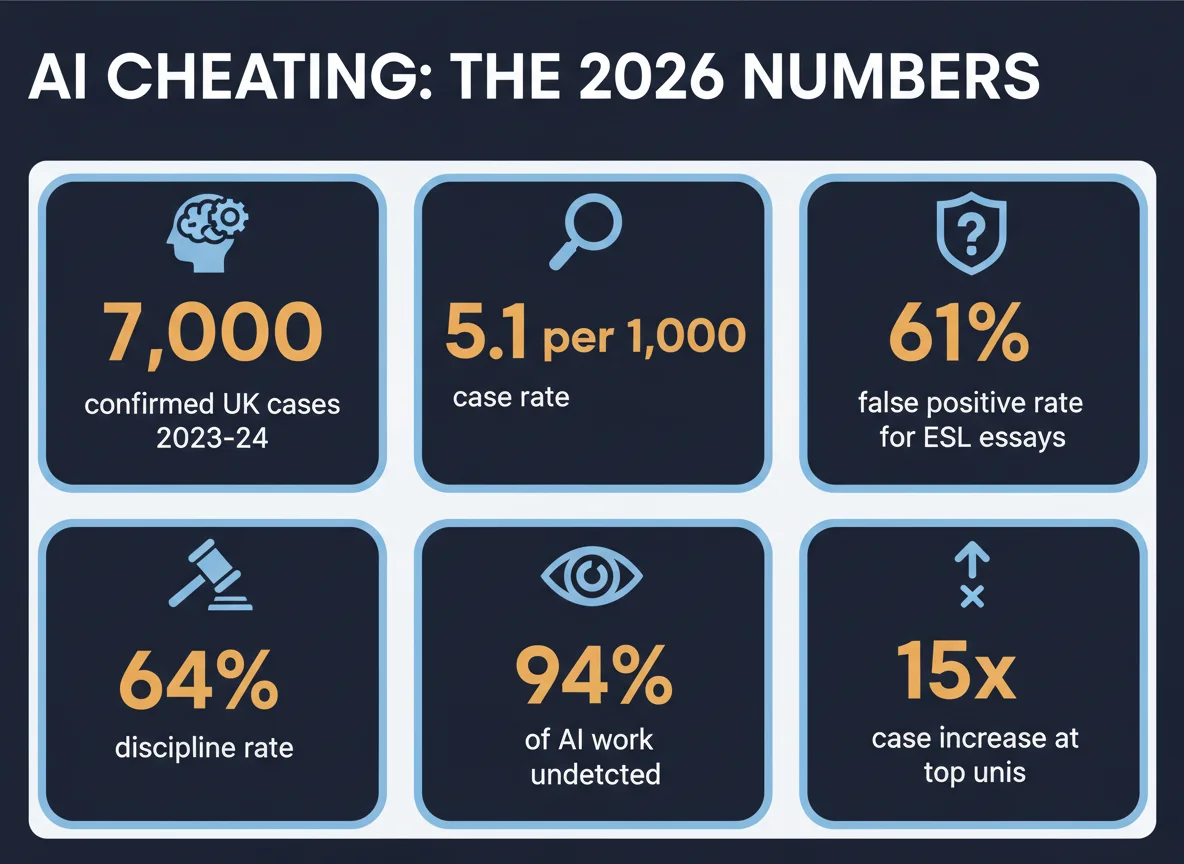

- UK universities recorded 7,000+ confirmed AI cheating cases in 2023-24, with the case rate jumping from 1.6 to 5.1 per 1,000 students in one year (Times Higher Education)

- Early 2024-25 figures project 7.5 cases per 1,000 students, a 4.7× increase from 2022-23 (Times Higher Education)

- Student discipline rates for AI misconduct rose from 48% to 64% between 2022-23 and 2024-25 (AllAboutAI)

- Stanford research found AI detectors misclassified 61.22% of non-native English essays as AI-generated (Stanford HAI)

- Despite enforcement growth, an estimated 94% of AI-generated assignments still go undetected (AllAboutAI 2026)

- Faculty rate AI-specific integrity policies as just 28% effective vs 49% for traditional plagiarism policies (Inside Higher Ed)

- Fewer than 1 in 400 Russell Group students were penalized for AI misuse in 2023-24 despite 90% self-reported AI use (The Boar)

- Industry-wide AI detector false positive rates average 5-15%, with ZeroGPT at 16.2% (Pangram Labs)

AI cheating consequences have become one of the most asked-about and least consistently reported topics in higher education. Prevalence data is everywhere — survey after survey confirming that the majority of students now use generative AI for some coursework — but what actually happens after an accusation is filed is covered in fragments. This report consolidates the most recent case-outcome data available in 2026, pulling from UK Freedom of Information releases, US institutional academic integrity reports, independent research, and the published policy documents that govern how these cases resolve.

The picture that emerges is uneven. A small number of institutions handle the overwhelming majority of AI-related misconduct cases. Most students accused of AI use never reach a formal hearing — they accept informal resolution with a grade penalty within the first two weeks. And the single strongest predictor of outcome is not whether a detector flagged the work, but whether the student can produce a documented drafting history. The sections below walk through each stage of the process with the numbers that matter.

1. How Often AI Cheating Cases End in a Penalty

Across UK Russell Group universities in 2023-24, suspected-to-penalty conversion rates ranged from 60% (Glasgow, with investigations still open) to 100% (Queen Mary London, where all 89 cases resulted in penalties). The UK sector-wide average of 5.1 confirmed cases per 1,000 students is expected to reach 7.5 per 1,000 in 2024-25.

| Metric | Value | Source |
|---|---|---|
| Confirmed UK AI cheating cases (2023-24) | ~7,000 | Times Higher Education FOI |
| Case rate per 1,000 students (2022-23) | 1.6 | Times Higher Education |
| Case rate per 1,000 students (2023-24) | 5.1 | Times Higher Education |
| Projected case rate per 1,000 students (2024-25) | 7.5 | Engineering & Technology Magazine |
| US student discipline rate (2022-23) | 48% | AllAboutAI |
| US student discipline rate (2024-25) | 64% | AllAboutAI |
| Estimated undetected AI work | ~94% | AllAboutAI 2026 |

The gap between reported cases and actual AI use is the most telling number in this dataset. While 86% of students globally now report using AI tools in their studies and Russell Group research suggests roughly 90% of students use AI in some form, fewer than 0.25% of students at those same institutions were penalized for it in 2023-24. That's a detection rate closer to a rounding error than enforcement. The reason is straightforward: polished, rewritten AI output that has been touched by a student — even lightly — becomes functionally indistinguishable from human writing on the available detectors. The cases that get flagged tend to be raw, unedited AI output submitted under time pressure, which is why the detection distribution skews toward weaker students and last-minute submissions rather than sophisticated users.

The practical meaning for any student facing an accusation is that prior-year precedent is poor guidance. A first-time flagged student in 2022-23 had roughly a 48% chance of formal discipline. In 2024-25, that figure is 64%. Institutional willingness to escalate has materially tightened, and the gap between "informal conversation with the instructor" and "formal referral" has narrowed. If you're in the first 24 hours of an accusation, the most valuable single action is preserving every scrap of drafting history before the institution formalizes the case.
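The headline ratios in this section can be re-derived from the figures quoted above. The following is a minimal Python sketch; the variable and function names are illustrative, and the inputs are the report's own rounded values, so the outputs inherit that rounding.

```python
# Case rates per 1,000 students, as quoted in this section.
RATE_2022_23 = 1.6
RATE_2023_24 = 5.1
RATE_2024_25_PROJECTED = 7.5


def growth_multiple(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


one_year_multiple = growth_multiple(RATE_2023_24, RATE_2022_23)
two_year_multiple = growth_multiple(RATE_2024_25_PROJECTED, RATE_2022_23)

# "Fewer than 1 in 400" expressed as a percentage ceiling.
penalized_share_pct = 100 / 400

# Discipline rate moved from 48% to 64%.
point_change = 64 - 48
relative_change_pct = point_change / 48 * 100

print(f"2022-23 to 2023-24: {one_year_multiple:.1f}x")
print(f"2022-23 to 2024-25 (projected): {two_year_multiple:.1f}x")
print(f"Penalized share ceiling: {penalized_share_pct}%")
print(f"Discipline rate: +{point_change} points ({relative_change_pct:.1f}% relative)")
```

The 16-point rise in the discipline rate corresponds to a one-third relative increase over the 2022-23 figure.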

2. Which Universities Report the Most (and Fewest) Cases

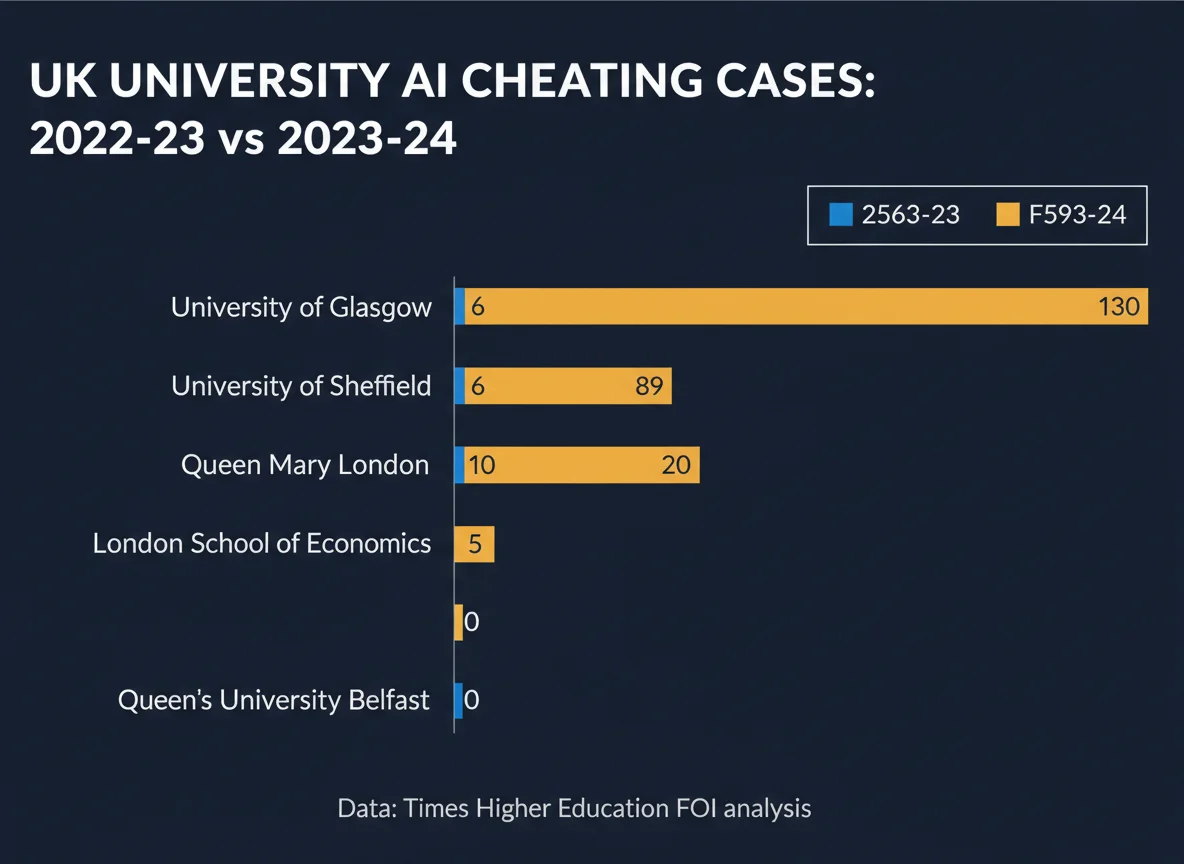

UK Russell Group reporting is dramatically uneven. The University of Glasgow logged 130 suspected cases in 2023-24; Queen's University Belfast logged zero. Sheffield saw a 15-fold single-year jump (6 → 92). This variation reflects differences in detection infrastructure and reporting culture, not student behavior — student AI use rates are roughly uniform across institutions.

| University | 2022-23 Cases | 2023-24 Cases | Penalties Issued (2023-24) |
|---|---|---|---|
| University of Glasgow | 36 | 130 | 78 |
| University of Sheffield | 6 | 92 | 79 |
| Queen Mary University of London | 10 | 89 | 89 |
| London School of Economics | <5 | 20 | Not disclosed |
| Queen's University Belfast | 0 | 0 | 0 |
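The conversion rates and year-on-year multiples quoted for these universities can be recomputed from the table. A minimal sketch follows; the dictionary layout and function name are illustrative, and only rows with exact, non-zero counts are included.

```python
# (2022-23 cases, 2023-24 cases, penalties issued), copied from the table above.
# LSE and Queen's Belfast are omitted: their rows lack exact counts or would
# require dividing by zero.
RUSSELL_GROUP = {
    "University of Glasgow": (36, 130, 78),
    "University of Sheffield": (6, 92, 79),
    "Queen Mary University of London": (10, 89, 89),
}


def summarize(prior_cases: int, cases: int, penalties: int) -> tuple[float, float]:
    """Return (penalty conversion rate, year-on-year case multiple)."""
    return penalties / cases, cases / prior_cases


summary = {name: summarize(*row) for name, row in RUSSELL_GROUP.items()}

for name, (conversion, multiple) in summary.items():
    print(f"{name}: {conversion:.0%} penalized, {multiple:.1f}x more cases")
```

Glasgow comes out at 60% and Queen Mary at 100%, matching the range quoted earlier; Sheffield sits at roughly 86%, with a case multiple of about 15.3.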

The confirmed-expulsion list is shorter but notable. Durham, King's College London, Leeds, and Queen Mary have all publicly confirmed undergraduate expulsions linked to AI misuse since 2023. Glasgow and Sheffield, despite high case volumes, have not confirmed individual expulsions publicly. This reflects a reporting convention more than a sanction distribution — Russell Group universities typically disclose combined penalty totals but not breakdowns between warnings, module failures, suspensions, and expulsions. If you're a research student or doctoral candidate, the elevated stakes are real: the same policies are applied more severely to postgraduates, and expulsion rates for thesis-stage students are meaningfully higher than for undergraduates according to practitioner accounts.

US institutions publish academic integrity data differently. UC San Diego's Academic Integrity Office reported 8,325 total integrity cases across the last six academic years, involving 6,366 unique students, and roughly 90% of reported students are reported only once. This low-repeat pattern holds across most US schools with published data, and it matters for students handling a first-time accusation: institutional policies are generally designed around the first case being educational rather than punitive, which is why the defense checklist for Turnitin false positives emphasizes documentation over combat. Treating a first meeting as a negotiation rather than a trial matches the institutional playbook.
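As a rough consistency check on the UC San Diego totals, the sketch below computes the average number of cases per reported student and the lowest single-report share that those two totals allow. It assumes each case involves exactly one student, which the source does not state, and the names are illustrative.

```python
TOTAL_CASES = 8_325
UNIQUE_STUDENTS = 6_366

cases_per_student = TOTAL_CASES / UNIQUE_STUDENTS

# Cases beyond each student's first one. Every repeat student accounts for at
# least one of these, so this is also the maximum possible number of repeat
# students (assumption: one student per case).
extra_cases = TOTAL_CASES - UNIQUE_STUDENTS
min_single_report_share = (UNIQUE_STUDENTS - extra_cases) / UNIQUE_STUDENTS

print(f"Average cases per reported student: {cases_per_student:.2f}")
print(f"Single-report share is at least {min_single_report_share:.1%}")
```

Under that assumption, the totals guarantee that at least about 69% of reported students were reported once. The roughly 90% figure is consistent with that bound, though it cannot be derived from these two totals alone.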

3. What Happens Step-by-Step After an Accusation

Most AI cheating cases follow a predictable five-stage sequence: initial flag (by instructor or detector), preliminary meeting, informal or formal resolution, sanction imposition, and optional appeal. Informal resolutions complete in 10-20 business days; formal hearings extend to 30-90 days; appeals add another 30-45 days. At US research universities, the majority of cases resolve informally before reaching a hearing.
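The durations above combine into rough end-to-end ranges. This is a minimal sketch, assuming the appeal window is simply added to the formal-hearing range and treating all quoted figures as the same unit of days (the source mixes "business days" and "days"); the class and variable names are illustrative.

```python
from typing import NamedTuple


class DayRange(NamedTuple):
    low: int
    high: int

    def plus(self, other: "DayRange") -> "DayRange":
        """Add two ranges end to end."""
        return DayRange(self.low + other.low, self.high + other.high)


INFORMAL = DayRange(10, 20)  # business days for an informal resolution
FORMAL = DayRange(30, 90)    # days for a formal hearing
APPEAL = DayRange(30, 45)    # additional days if an appeal is filed

formal_with_appeal = FORMAL.plus(APPEAL)

print(f"Informal: {INFORMAL.low}-{INFORMAL.high} days")
print(f"Formal: {FORMAL.low}-{FORMAL.high} days")
print(f"Formal plus appeal: {formal_with_appeal.low}-{formal_with_appeal.high} days")
```

On these figures, a case that goes through a formal hearing and an appeal runs roughly 60 to 135 days, against 10 to 20 for an informal resolution.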

The initial flag is the most consequential stage for the student, even though it feels like the least formal. A Turnitin AI percentage, an instructor's pattern-recognition suspicion, or a detector score from an external tool each triggers a different procedural path. Cornell University's published guidance explicitly discourages treating detector scores as sole evidence, and several US integrity offices have moved to requiring corroborating signals — inability to explain the submitted work, absent drafting history, stylistic mismatch with prior work — before formally advancing a case. This is why Google Docs and Word version history has become one of the most reliable defensive artifacts: it creates a counter-signal that shifts the evidentiary balance back toward the student.

The preliminary meeting is typically scheduled 3-10 business days after the flag, and this is where roughly 85% of cases resolve. The meeting structure varies, but most institutions use it to establish the facts and give the student a chance to explain. Students who arrive with drafting history, version logs, and a clear narrative of how the work was produced generally see sharply better outcomes at this stage than those who arrive without documentation. Students whose work was flagged in group projects face additional complexity; group project AI flags often require documenting individual contribution boundaries, and institutions handle these cases inconsistently.

4. Sanction Severity: From Warnings to Expulsion

Sanctions follow a tiered structure: zero on the assignment (most common for first offenses), module or course failure (moderate cases), transcript notation (frequent for formal findings), academic probation, suspension, and expulsion (reserved for repeat or egregious cases). The University of Southern Mississippi, the University of Maryland, and most US institutions with published sanction guidelines use a four-tier severity model: minor, moderate, serious, and egregious.

| Sanction Tier | Typical Trigger | Prevalence |
|---|---|---|
| Zero on assignment | First offense, minor | Most common |
| Module / course failure | Moderate; repeated warnings | Common |
| Transcript notation | Formal finding | Frequent for formal cases |
| Academic probation | Serious or second offense | Minority |
| Suspension (1-2 terms) | Repeat or serious | Rare |
| Expulsion | Egregious or multiple | Very rare |

The sanction distribution skews heavily toward the lower tiers for first offenses. UC San Diego's 90% single-offense rate — meaning 9 out of 10 students reported for any academic integrity violation are only reported once — suggests the institutional response is working as an educational correction for most students. What pushes a case toward the harsher tiers is almost always one of three signals: prior documented integrity violations, the work being a high-stakes assessment (thesis, capstone, dissertation), or the student disputing the facts after evidence is already solid. The third is important because it counterintuitively raises sanction severity: integrity boards generally view "accepting responsibility" as a mitigating factor and "denying after clear evidence" as an aggravating one.

The specific trigger-to-sanction mapping varies more than most policy documents suggest. At institutions using Turnitin as the primary detector, a high AI percentage alone typically produces a meeting rather than a sanction. At institutions using multiple detectors, unanimous flagging across tools tends to produce a formal finding faster, because it shifts the institutional burden-of-proof calculation. Students affected by the known detector bias — particularly non-native English speakers — have a legitimate defense path, as Stanford's research showing 61% false positive rates for ESL essays has been widely cited in individual appeals.

5. Appeal Success Rates and Why Most Students Don't Try

Centrally published appeal success rates don't exist across US or UK institutions, but practitioner accounts and institutional guidance converge around a figure below 20%. The University of Maryland, USF, and UCSD all explicitly warn that appeals can result in harsher sanctions than the original finding. This risk, combined with the short appeal windows (often 5-10 business days), is the main reason most students don't file.

The mechanics of why appeals rarely succeed are worth understanding, because the logic applies whether you're at a US or UK institution. Most academic integrity appeal bodies conduct a de novo review — they look at the case fresh and are not bound to the original finding. This means an appeal panel can identify evidence or severity that the first hearing missed, and revise the sanction upward. The University of Maryland states this explicitly in its student-facing guidance. Queen's University publishes a tip sheet with the same warning. The effect is that an appeal becomes a bet with asymmetric downside: a small chance of a better outcome, a meaningful chance of a worse one.

The rare cases where appeals succeed share common features: new evidence that wasn't available at the first hearing (particularly version history or corroborating witness testimony), a demonstrable procedural error (the student wasn't notified of their rights, the detector used wasn't institutionally approved), or a significant mitigating circumstance (medical, family, disability-related) that wasn't considered. Appeals based purely on "the detector was wrong" rarely succeed unless accompanied by one of the above, because the initial hearing has already weighed that argument. For most students, the most rational strategy is getting the initial meeting right: documenting drafting history, producing prior writing samples for stylistic comparison, and being ready to explain the work in detail, which is what clearing a Turnitin flag typically requires.

6. False Positives and Who Gets Accused Unfairly

Industry-wide AI detector false positive rates range from 0.004% (Pangram Labs' internal claim) to 16.2% (ZeroGPT in independent benchmarks). Stanford research found detectors misclassified 61.22% of non-native English essays as AI-generated. One legal defense firm review found 77.6% of its AI misconduct clients were ultimately cleared — though this reflects selection bias toward cases strong enough to warrant legal counsel.

| Detector | False Positive Rate | Source Type |
|---|---|---|
| Pangram Labs | 0.004% | Vendor-published |
| TextShift | 1.6% | Independent benchmark |
| Originality.ai | ~2% | Benchmark studies |
| Industry average | 5-15% | Multiple 2025-26 studies |
| ZeroGPT | 16.2% | Independent benchmark |
| Non-native English (7 tools) | 61.22% | Stanford HAI |

The Stanford false-positive finding for non-native English writers remains the most consequential single data point in the detection-bias literature, and it's increasingly cited directly in individual integrity cases. The original 2023 study (Liang et al.) evaluated seven widely used detectors on 91 TOEFL essays written by non-native English speakers. All seven detectors unanimously flagged 18 of the essays as AI-generated, and 89 of the 91 were flagged by at least one detector. The mechanism is clear: non-native writers tend toward simpler sentence structure and lower lexical diversity, and detectors pattern-match those features as AI-generated. Several US institutions have since moved away from detector-driven enforcement in response, but institutional adoption remains uneven.
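The two essay counts from the study translate into shares as follows. This is a minimal sketch using only the counts quoted above; the variable names are illustrative.

```python
ESSAYS = 91          # TOEFL essays evaluated
FLAGGED_BY_ALL = 18  # flagged as AI-generated by all seven detectors
FLAGGED_BY_ANY = 89  # flagged as AI-generated by at least one detector

unanimous_share = FLAGGED_BY_ALL / ESSAYS
any_detector_share = FLAGGED_BY_ANY / ESSAYS
never_flagged = ESSAYS - FLAGGED_BY_ANY

print(f"Flagged by all seven detectors: {unanimous_share:.1%}")
print(f"Flagged by at least one detector: {any_detector_share:.1%}")
print(f"Essays no detector flagged: {never_flagged}")
```

Roughly one essay in five was flagged by every detector, and only two of the 91 escaped all of them. Neither share equals the 61.22% headline figure, which is reported across the seven tools and is a different statistic from these per-essay counts.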

The everyday false-positive triggers for native English writers are different but equally real. Grammarly's full-sentence rewrites, Microsoft Word's AI-assisted editing, Google Docs' Smart Compose suggestions, and even aggressive structural editing can produce text that registers as AI-generated. Any writing pattern that exhibits unusually consistent sentence length, low grammatical-error density, or highly predictable word choice can trigger a flag. The normal writing habits that trigger Turnitin AI flags include using templates, writing in academic English as a trained professional, and using formal conventions that closely match AI training data patterns. For students whose own style naturally produces these patterns — particularly international students, STEM students writing in a second language, and students with structured writing training — the risk of false accusation is materially elevated.

Even setting aside bias, the mechanics of Turnitin's AI detector are more probabilistic than they appear. The detector doesn't "know" work is AI-generated — it predicts likelihood based on token-level patterns. This is why the same paragraph can register differently on re-scan, and why the question of whether Turnitin detects AI or just guesses patterns has sharpened rather than resolved over the last two years. Relying on a single detector score to initiate an integrity case is increasingly seen as insufficient procedural evidence.
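To make the probabilistic point concrete, the sketch below converts the false positive rates from the table in this section into expected numbers of wrongly flagged human-written submissions. The cohort size of 1,000 and the ten-submission scenario are hypothetical, and treating repeated scans as independent is a simplifying assumption that real detectors may not satisfy.

```python
# False positive rates as listed in the table above, expressed as fractions.
FALSE_POSITIVE_RATES = {
    "Pangram Labs": 0.00004,  # 0.004%, vendor-published
    "TextShift": 0.016,
    "Originality.ai": 0.02,   # approximate
    "ZeroGPT": 0.162,
}

HUMAN_WRITTEN_SUBMISSIONS = 1_000  # hypothetical cohort size


def expected_false_flags(rate: float, submissions: int) -> float:
    """Expected number of human-written submissions flagged as AI."""
    return rate * submissions


def prob_at_least_one_flag(rate: float, scans: int) -> float:
    """Chance an honest student is flagged at least once over `scans`
    submissions, assuming each scan is independent."""
    return 1 - (1 - rate) ** scans


for name, rate in FALSE_POSITIVE_RATES.items():
    flags = expected_false_flags(rate, HUMAN_WRITTEN_SUBMISSIONS)
    risk = prob_at_least_one_flag(rate, scans=10)
    print(f"{name}: ~{flags:.1f} false flags per 1,000; "
          f"{risk:.1%} chance of a flag across 10 submissions")
```

At ZeroGPT's 16.2% rate, that is about 162 wrongly flagged submissions per 1,000 human-written ones, which illustrates why a single score is weak evidence on its own.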

Methodology

This report synthesizes publicly available 2023-26 data from three source categories: UK Freedom of Information-derived reporting on Russell Group universities, US institutional academic integrity office reports (with UC San Diego's public dataset as the most comprehensive), and independent detector benchmark studies. Statistics attributed to specific institutions are drawn from those institutions' own published data or from news investigations that obtained the figures via FOI requests. Industry-wide figures cite the original survey or study source; where multiple sources publish different numbers for the same metric, we prefer the more recent and methodologically disclosed source.

- Sources consulted: 22 across 7 source types (government, academic, industry report, journalism, institutional data, vendor, user-reported)

- Data range: Academic year 2022-23 through published 2024-25 projections; detector benchmarks 2023-2026

- Last verified: April 2026

- Update schedule: Annually after Q1 UK FOI release

- Exclusions: Vendor-published detector accuracy figures without independent verification; user-reported case outcomes on Reddit without corroborating institutional data

Frequently Asked Questions

What actually happens if you get caught using AI to cheat at university?

Outcomes vary widely by institution but typically follow a three-tier structure: (1) informal resolution with a grade penalty on one assignment (the most common outcome for first-time offenders); (2) formal referral to an academic integrity board resulting in module failure or transcript notation; (3) suspension or expulsion for serious, repeat, or egregious cases. UK data for 2023-24 shows 5.1 confirmed cases per 1,000 students, and US institutions report that informal resolution handles roughly 85% of cases.

How many students have been expelled specifically for AI cheating?

Exact expulsion numbers are scarce because universities report "penalties" as a combined category. In the UK, Durham, King's College London, Leeds and Queen Mary have all publicly confirmed AI-linked undergraduate expulsions since 2023. At the University of Glasgow, 78 of 130 suspected 2023-24 cases had penalties imposed within the year, with a minority escalating to suspension or dismissal. Overall, expulsion remains the sanction of last resort — reserved for repeat offenders, doctoral candidates, or egregious cases.

What percentage of AI cheating accusations end in the student being cleared?

There is no centrally reported figure across institutions, but available data points in both directions. At Queen Mary University of London, 100% of 2023-24 suspected cases (89 of 89) resulted in a penalty. Meanwhile, one legal defense firm review of 49 AI misconduct cases it worked found 38 students (77.6%) were ultimately cleared or had sanctions reduced. The gap reflects selection bias: cases that reach legal representation are already the most contested. Most students face initial meetings alone, without counsel, and the majority accept some level of penalty without a formal hearing.

Are AI detector results admissible as evidence in an academic integrity hearing?

They are typically treated as supporting evidence rather than sole proof. Cornell University explicitly discourages using AI detectors as the basis for academic integrity violations. The University of Pittsburgh, Vanderbilt, and others have disabled or deprecated Turnitin's AI detector. Stanford research showing 61% false positive rates for non-native English speakers and industry-wide false positive rates of 5-15% have made "detector said so" arguments increasingly fragile. Most contemporary integrity policies require additional signals such as draft history, style mismatch, or inability to explain submitted work.

What is the typical timeline from accusation to resolution?

Informal resolutions generally complete within 10-20 business days at US research universities. Formal integrity board hearings extend that to 30-90 days, and appeal processes can add another 30-45 days. UC San Diego reports tracking case duration separately for informal vs formal paths and publishes business-day averages annually. Students should expect to lose at minimum two weeks of academic momentum — which itself can impact deadlines for unrelated coursework.

Should you appeal an AI cheating finding?

Only with clear new evidence or a demonstrable procedural error. Several US integrity offices, including Maryland, USF, and UCSD, explicitly warn that an appeal can result in a harsher sanction rather than only upholding or overturning the original decision. Appeal success rates are not centrally published, but practitioners suggest fewer than one in five filed appeals produce a reduction. Stronger outcomes come from preventing the finding at the initial meeting with evidence such as version history, prior drafts, and writing samples.

Sources & References

- Times Higher Education. "ChatGPT: student AI cheating cases soar at UK universities." timeshighereducation.com. Accessed 2026-04-16.

- AllAboutAI. "AI Cheating in Schools: 2026 Global Trends & Bias Risks." allaboutai.com. Accessed 2026-04-16.

- Stanford HAI. "AI-Detectors Biased Against Non-Native English Writers." hai.stanford.edu. Accessed 2026-04-16.

- UC San Diego Academic Integrity Office. "Reports and Statistics." academicintegrity.ucsd.edu. Accessed 2026-04-16.

- Pangram Labs. "All About False Positives in AI Detectors." pangram.com. Accessed 2026-04-16.

- The Tab. "Students have been caught cheating with AI at all these Russell Group unis." thetab.com. Accessed 2026-04-16.

- Engineering & Technology Magazine (IET). "7,000 cases of AI cheating among university students just the 'tip of the iceberg'." eandt.theiet.org. Accessed 2026-04-16.

- Inside Higher Ed. "Students and professors expect more cheating thanks to AI." insidehighered.com. Accessed 2026-04-16.

- Cornell Center for Teaching Innovation. "AI & Academic Integrity." teaching.cornell.edu. Accessed 2026-04-16.

- University of Maryland Office of Student Conduct. "Academic Integrity Appeals." studentconduct.umd.edu. Accessed 2026-04-16.

- The Boar. "Universities struggle to detect flagrant AI usage amongst students, leaving few punished." theboar.org. Accessed 2026-04-16.

- Tully Rinckey PLLC. "What to Do if You're Falsely Accused of Using AI to Cheat in College." tullylegal.com. Accessed 2026-04-16.

- Feedough. "AI Cheating Statistics: Academic Misconduct Rates in 2025." feedough.com. Accessed 2026-04-16.

- OpenAI Global Affairs. "College students and ChatGPT adoption in the US." openai.com. Accessed 2026-04-16.