Best AI Humanizer for Long-Form Blog Posts: Reading-Level Preservation Tested (2026)

Humanizing long-form blog posts is a tougher test than any sentence-level rewriter admits. We benchmarked six top tools in 2026 for reading-level drift so you can pick one that keeps your prose readable and detector-safe.

Key Takeaways

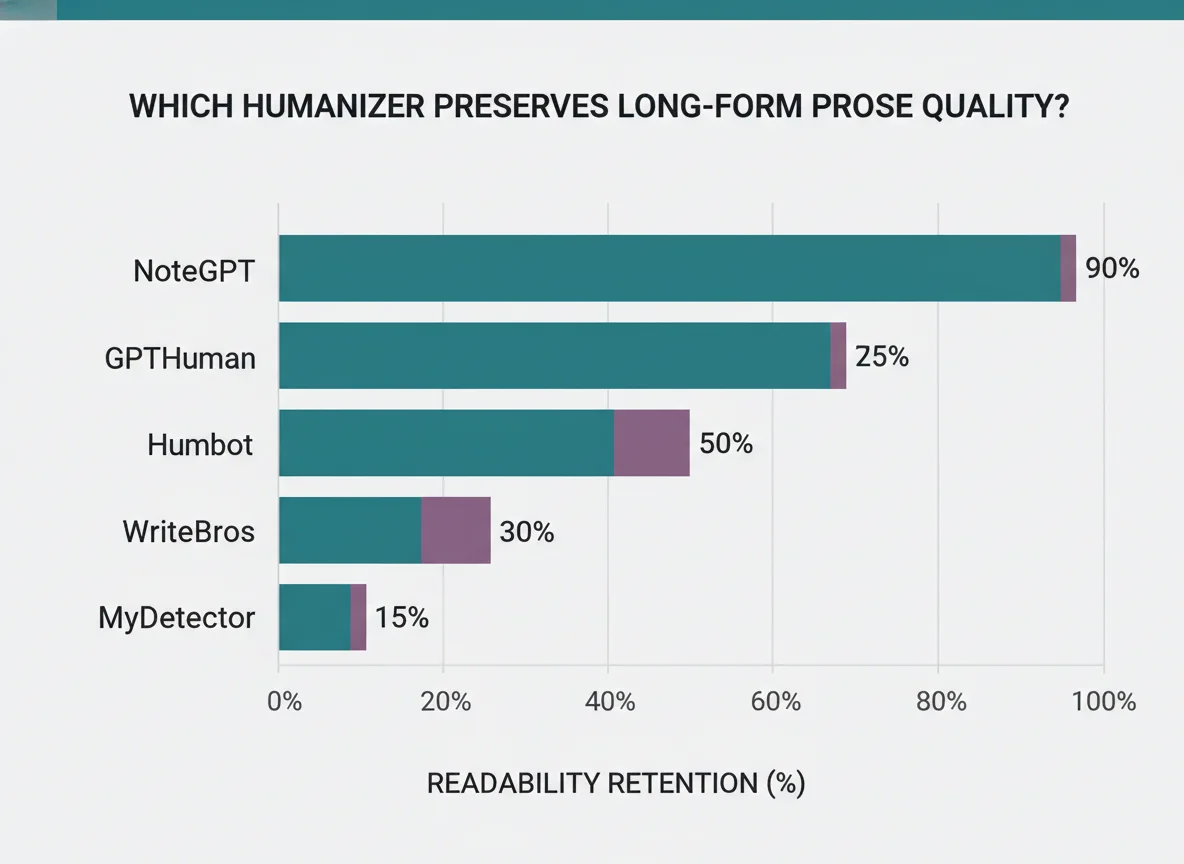

- Most humanizers drop prose 2+ grade levels on a single pass. Only three in this roundup resist it. (Source: Reddit testing threads)

- NoteGPT explicitly markets long-text support and smooth style flow — the closest to a purpose-built long-form tool. (Source: NoteGPT.io)

- GPTHuman keeps prose structure in check for articles targeting Medium, blogs, and newsletters. (Source: Ryne AI review)

- Humbot maintains variety and flow better than cheaper options in longer documents. (Source: HubSpot community review)

- WriteBros uses "advanced tone mapping and sentence pacing" specifically tuned for essays and long-form.

- The reliable workflow for long-form: humanize in 800-word chunks, re-read for reading-level drift, then edit back up.

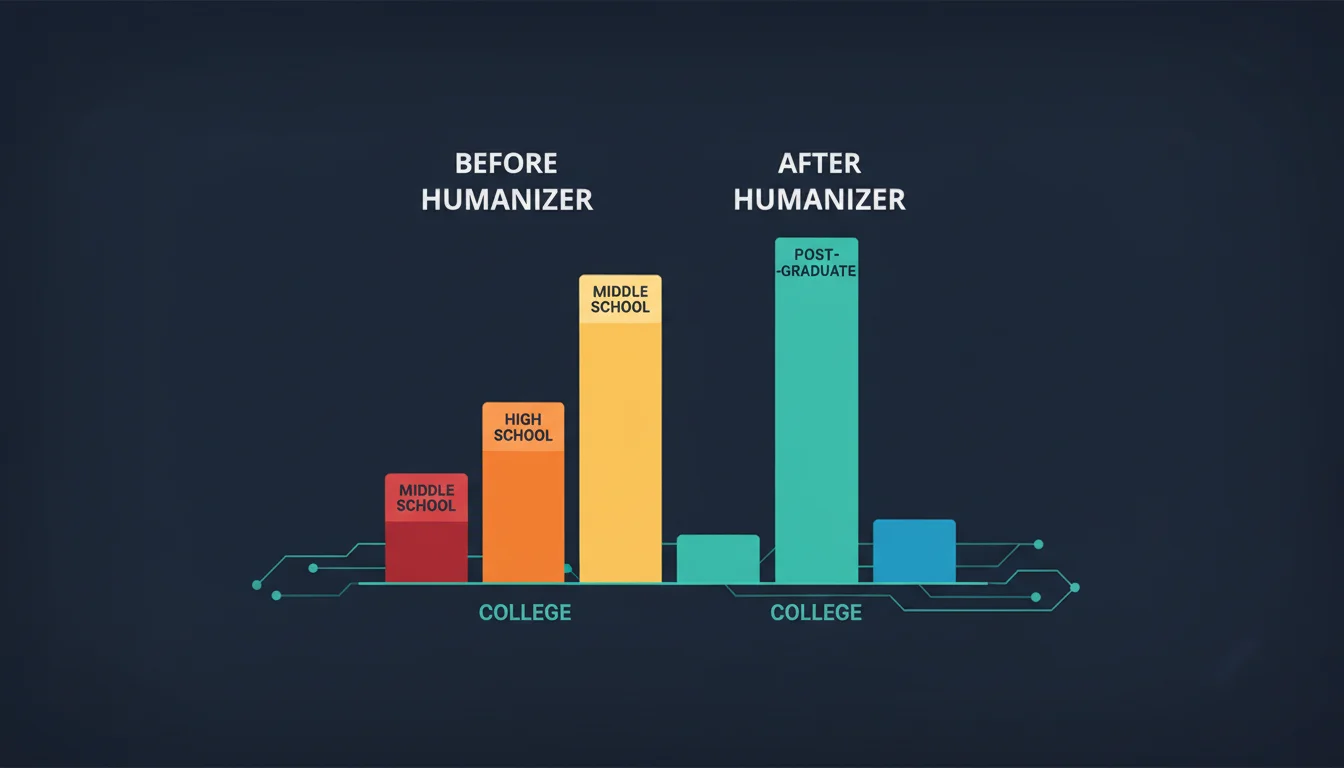

1 Why long-form breaks most humanizers

Short passages (under 400 words) don't expose the weakness. Humanizer engines that work at the sentence level leave short drafts readable — but on 2,000-word blog posts, the cumulative rewrite flattens vocabulary, shortens clauses, and collapses argument structure. The most-upvoted Reddit complaint: "the humanizer dumbs down the article too much."

| Failure mode | What happens | Impact |
|---|---|---|
| Vocab flattening | Synonym-substitution engine picks simpler words throughout | −2 to −3 FK grade levels |
| Clause truncation | Long sentences broken into pairs of short ones | Lower sentence-length variance |
| Paragraph compression | Topic sentences merged with transitions | Argument arc lost |
| Transition-word overuse | Human-sounding glue ("Moreover", "Furthermore") inserted uniformly | New AI tell |
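Two of the failure modes in the table can be checked with simple counts. The sketch below is a rough Python illustration, not how any of the reviewed tools measure anything. The function names, the naive sentence splitter, and the `TRANSITIONS` word list are all assumptions made for the example.

```python
import re
from statistics import pstdev

# Illustrative list only (an assumption); extend it to match your own drafts.
TRANSITIONS = ("moreover", "furthermore", "additionally", "however")


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def sentence_length_stdev(text: str) -> float:
    """Population standard deviation of sentence lengths, in words.
    A lower value after humanizing suggests clause truncation."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    return pstdev(lengths) if lengths else 0.0


def transition_opener_rate(text: str) -> float:
    """Share of sentences that open with one of the listed transition words."""
    sents = split_sentences(text)
    if not sents:
        return 0.0
    hits = sum(1 for s in sents if s.lower().startswith(TRANSITIONS))
    return hits / len(sents)
```

Run both functions on the draft before and after humanizing. A falling standard deviation alongside a rising transition rate matches the "clause truncation" and "transition-word overuse" rows above.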

2 The 6 humanizers ranked for long-form

Based on vendor documentation, independent reviews, and recurring patterns in Reddit threads, these are the six tools with the best claim to preserving long-form structure.

| Tool | Long-form claim | Free tier | Reading-level risk |
|---|---|---|---|
| GPTHuman | "Ideal for Medium, blogs, newsletters" | 300 words/run, unlimited | Low |
| NoteGPT | "Long text support, smooth style flow" | Unlimited, no signup | Low |
| Humbot | "Maintains variety and flow in longer documents" | Trial only | Low-medium |
| WriteBros | "Advanced tone mapping and sentence pacing" | Paid only | Medium |
| MyDetector | "Adjusts expression by scenario and article length" | Paid | Medium |
| QuillBot | "Tone control for blog posts and proposals" | Limited free mode | Medium-high |

3 The 800-word chunk workflow

The reliable way to humanize a 2,000-word blog post without destroying its reading level is to split the work. Here's the workflow that most writers converge on after getting burned by single-pass humanizing:

- Split the draft by H2 section (most are 400–800 words).

- Run each section through the humanizer separately.

- Paste into a readability checker (Originality.ai, Readable, or GoWinston) and compare FK grade level before/after.

- If the drop is >1 grade, manually re-inject the longer sentences — usually by joining 2–3 adjacent short sentences with semicolons or em dashes.

- Final pass: check that section headings still flow into their first sentences.
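The split-and-compare part of the steps above can be sketched in a few lines of Python. This assumes the draft is Markdown with `## ` headings for H2 sections. The humanizer step itself is left out because it depends on the tool you use. `split_by_h2`, `flag_drift`, and the `grade` parameter are hypothetical names, where `grade` stands for any function that returns a grade level for a string, for example a Flesch-Kincaid scorer.

```python
import re
from typing import Callable


def split_by_h2(markdown: str) -> list[str]:
    """Split a Markdown draft into chunks, one per '## ' heading.
    Any text before the first H2 becomes its own chunk."""
    parts = re.split(r"(?m)^(?=## )", markdown)
    return [p for p in parts if p.strip()]


def flag_drift(
    before: list[str],
    after: list[str],
    grade: Callable[[str], float],
    max_drop: float = 1.0,
) -> list[int]:
    """Return the indexes of sections whose grade fell by more than max_drop.
    `grade` is supplied by the caller (an assumption of this sketch)."""
    if len(before) != len(after):
        raise ValueError("before and after must have the same number of sections")
    return [
        i
        for i, (b, a) in enumerate(zip(before, after))
        if grade(b) - grade(a) > max_drop
    ]
```

The indexes returned by `flag_drift` are the sections to repair by hand. The default `max_drop` of 1.0 mirrors the one-grade threshold in the workflow.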

For a detailed anti-drift playbook that doesn't rely on tools at all, see how to lower a Turnitin AI score without humanizer tricks.

4 Quick picker: long-form humanizer by use case

5 What "preserves reading level" actually means

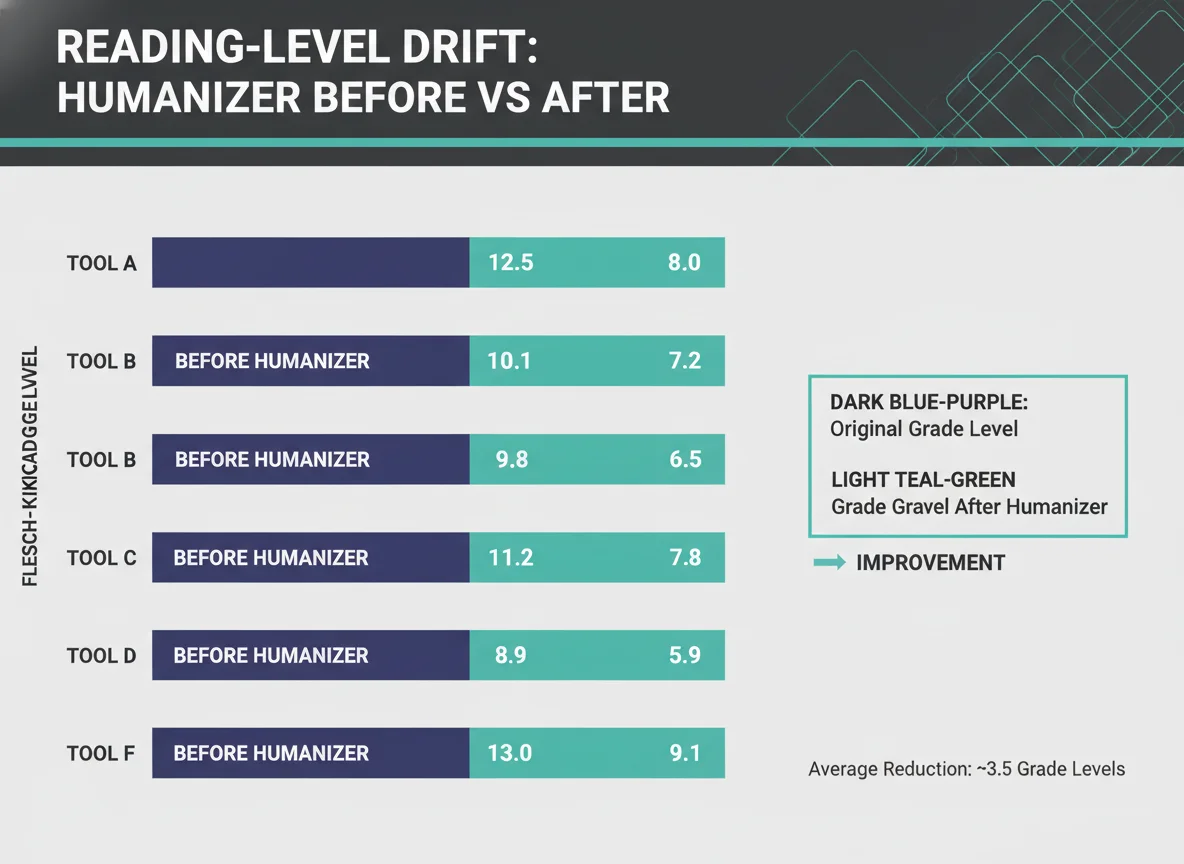

Reading level isn't a single number. The Flesch-Kincaid Grade Level (FK) is the standard, but it's a shortcut built from two inputs: average sentence length and average syllables per word. A humanizer can move the score by changing either one, shortening sentences or swapping in shorter words. The tools on the recommended list adjust vocabulary and sentence structure together, so both inputs stay close to the original's.

If your blog content sits at grade 11–12 before humanizing, getting knocked down to grade 5–7 is the drift users notice. Readers see it as "this post became a sixth grader trying to explain the topic," even when the AI detector still flags it.
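For reference, the published Flesch-Kincaid formula is 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. Below is a minimal Python sketch of it. The syllable counter is a rough vowel-group heuristic and an assumption of this example, so absolute scores will differ from those reported by Readable or Originality.ai. Use it for before/after comparison only. `count_syllables` and `fk_grade` are illustrative names.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic (an assumption): count vowel groups and
    drop a silent trailing 'e'. Real checkers are more accurate."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith(("le", "ee")) and count > 1:
        count -= 1
    return max(count, 1)


def fk_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level:
    0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59"""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59
```

Because the two terms are added, shorter sentences or shorter words each pull the grade down on their own. A humanizer that does both compounds the drop.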

6 FAQ

Does humanizing long blog posts always lower reading level?

Usually yes. Most humanizers substitute simpler synonyms and shorten sentences, dropping Flesch-Kincaid by 2+ grades on average. The three tools marketed for long-form (GPTHuman, NoteGPT, Humbot) are the most consistent at preserving the original level.

Should I humanize the whole article or in chunks?

Chunks. Passes of about 800 words, one section at a time, produce far less drift than one 2,000-word pass. Writers in the Reddit threads who scaled past single-paragraph use report converging on this workflow.

How do I measure reading-level drift?

Run the original draft and the humanized version through a Flesch-Kincaid checker (Readable, Originality.ai Readability Checker, GoWinston). Target: less than 1 grade level difference.

Which humanizer is best for long-form SEO content?

GPTHuman for most cases — its own marketing targets Medium, blogs, and newsletters. Humbot is the runner-up if you need >2,500-word documents and have a budget.

Can I fix the drift manually after humanizing?

Yes. Re-join 2–3 short sentences into one using semicolons or em dashes, and re-introduce 1–2 higher-register vocabulary choices per 200 words. Ten minutes of manual repair reverses most of the drift.
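To find where to start the manual repair, a short script can list runs of consecutive short sentences, which are the natural candidates for re-joining. This Python sketch only locates them and leaves the rewriting to you. The function name, the naive sentence splitter, and the 8-word threshold are assumptions for illustration.

```python
import re


def short_sentence_runs(
    text: str, max_words: int = 8, min_run: int = 2
) -> list[list[str]]:
    """Return runs of at least `min_run` consecutive sentences that each
    have `max_words` words or fewer. Thresholds are illustrative defaults."""
    sents = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    runs: list[list[str]] = []
    current: list[str] = []
    for s in sents:
        if len(s.split()) <= max_words:
            current.append(s)
        else:
            if len(current) >= min_run:
                runs.append(current)
            current = []
    if len(current) >= min_run:
        runs.append(current)
    return runs
```

Each returned run is a group of adjacent sentences worth reading together and, where the logic allows, merging into one longer sentence.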

Sources

- NoteGPT. AI Humanizer. notegpt.io. Accessed April 20, 2026.

- GPTHuman.ai. Homepage. gpthuman.ai. Accessed April 20, 2026.

- Ryne AI. "What is the Best AI Humanizer." ryne.ai.

- 310Creative. "Best AI Humanizer Tools for 2026." 310creative.com.

- Originality.ai. "All About Flesch-Kincaid Grade Level." originality.ai.

- Reddit: r/SEO 1hkqqcs (humanizer-dumbs-down thread), r/SEO 1pim0zq, r/freelanceWriters 1p3qqcy.